## Summary

This PR adds a first-class API for defining registry indexes, beyond our

existing `--index-url` and `--extra-index-url` setup.

Specifically, you now define indexes like so in a `uv.toml` or

`pyproject.toml` file:

```toml

[[tool.uv.index]]

name = "pytorch"

url = "https://download.pytorch.org/whl/cu121"

```

You can also provide indexes via `--index` and `UV_INDEX`, and override

the default index with `--default-index` and `UV_DEFAULT_INDEX`.

### Index priority

Indexes are prioritized in the order in which they're defined, such that

the first-defined index has highest priority.

Indexes are also inherited from parent configuration (e.g., the

user-level `uv.toml`), but are placed after any indexes in the current

project, matching our semantics for other array-based configuration

values.

You can mix `--index` and `--default-index` with the legacy

`--index-url` and `--extra-index-url` settings; the latter two are

merely treated as unnamed `[[tool.uv.index]]` entries.

### Index pinning

If an index includes a name (which is optional), it can then be

referenced via `tool.uv.sources`:

```toml

[[tool.uv.index]]

name = "pytorch"

url = "https://download.pytorch.org/whl/cu121"

[tool.uv.sources]

torch = { index = "pytorch" }

```

If an index is marked as `explicit = true`, it can _only_ be used via

such references, and will never be searched implicitly:

```toml

[[tool.uv.index]]

name = "pytorch"

url = "https://download.pytorch.org/whl/cu121"

explicit = true

[tool.uv.sources]

torch = { index = "pytorch" }

```

Indexes defined outside of the current project (e.g., in the user-level

`uv.toml`) can _not_ be explicitly selected.

(As of now, we only support using a single index for a given

`tool.uv.sources` definition.)

### Default index

By default, we include PyPI as the default index. This remains true even

if the user defines a `[[tool.uv.index]]` -- PyPI is still used as a

fallback. You can mark an index as `default = true` to (1) disable the

use of PyPI, and (2) bump it to the bottom of the prioritized list, such

that it's used only if a package does not exist on a prior index:

```toml

[[tool.uv.index]]

name = "pytorch"

url = "https://download.pytorch.org/whl/cu121"

default = true

```
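
The ordering rules above can be sketched in Python. This is a minimal illustration, not uv's implementation: `search_order`, the dictionary shape, and the `internal` index are hypothetical, and picking the first `default = true` entry when several exist is an assumption.

```python
PYPI = "https://pypi.org/simple"


def search_order(indexes: list[dict]) -> list[str]:
    """Return index URLs from highest to lowest priority.

    `indexes` mirrors `[[tool.uv.index]]` entries in definition order.
    """
    # Non-default indexes keep their definition order.
    ordered = [i["url"] for i in indexes if not i.get("default", False)]
    # A `default = true` index replaces PyPI and is searched last.
    defaults = [i["url"] for i in indexes if i.get("default", False)]
    # Assumption: if several entries are marked default, the first wins.
    ordered.append(defaults[0] if defaults else PYPI)
    return ordered


indexes = [
    {"name": "pytorch", "url": "https://download.pytorch.org/whl/cu121", "default": True},
    {"name": "internal", "url": "https://example.org/simple"},  # hypothetical
]
order = search_order(indexes)
```

Here `order` lists the hypothetical `internal` index first and the `pytorch` index last, with PyPI absent.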

### Name reuse

If a name is reused, the higher-priority index with that name is used,

while the lower-priority indexes are ignored entirely.

For example, given:

```toml

[[tool.uv.index]]

name = "pytorch"

url = "https://download.pytorch.org/whl/cu121"

[[tool.uv.index]]

name = "pytorch"

url = "https://test.pypi.org/simple"

```

The `https://test.pypi.org/simple` index would be ignored entirely,

since it's lower-priority than `https://download.pytorch.org/whl/cu121`

but shares the same name.
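
A rough Python sketch of this rule, assuming the indexes are already sorted from highest to lowest priority. `dedupe_by_name` is a hypothetical helper, not a function in uv.

```python
def dedupe_by_name(indexes: list[dict]) -> list[dict]:
    """Drop lower-priority indexes whose name was already seen.

    `indexes` is ordered from highest to lowest priority.
    """
    seen: set[str] = set()
    kept = []
    for index in indexes:
        name = index.get("name")
        # Unnamed indexes cannot collide, so they are always kept.
        if name is not None:
            if name in seen:
                continue
            seen.add(name)
        kept.append(index)
    return kept


kept = dedupe_by_name(
    [
        {"name": "pytorch", "url": "https://download.pytorch.org/whl/cu121"},
        {"name": "pytorch", "url": "https://test.pypi.org/simple"},
    ]
)
```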

Closes #171.

## Future work

- Users should be able to provide authentication for named indexes via

environment variables.

- `uv add` should automatically write `--index` entries to the

`pyproject.toml` file.

- Users should be able to provide multiple indexes for a given package,

stratified by platform:

```toml

[tool.uv.sources]

torch = [

{ index = "cpu", markers = "sys_platform == 'darwin'" },

{ index = "gpu", markers = "sys_platform != 'darwin'" },

]

```

- Users should be able to specify a proxy URL for a given index, to

avoid writing user-specific URLs to a lockfile:

```toml

[[tool.uv.index]]

name = "test"

url = "https://private.org/simple"

proxy = "http://<omitted>/pypi/simple"

```

## Summary

This PR declares and documents all environment variables that are used

in one way or another in `uv`, either internally, or externally, or

transitively under a common struct.

As uv has grown, many environment variables have been introduced. It's

hard to know which ones exist, which ones are missing, what they're used

for, and where they're used across the code. The docs only document a

handful of them; for the others, you have to dive into the code and

inspect multiple crates to find where they're used or relevant.

This PR is a starting attempt to unify them, make it easier to discover

which ones we have, and maybe unlock future possibilities for automatically

generating documentation for them.

I think we can split out into multiple structs later to better organize,

but given the high influx of PRs and possibly new environment variables

introduced/re-used, it would be hard to try to organize them all now

into their proper namespaced struct while this is all happening given

merge conflicts and/or keeping up to date.

I don't think this has any impact on performance as they all should

still be inlined, although it may affect local build times on changes to

the environment vars as more crates would likely need a rebuild. Lastly,

some of them are declared but not used in the code, for example those in

`build.rs`. I left them declared because I still think it's useful to at

least have a reference.

Did I miss any? Are their initial docs cohesive?

Note: `uv-static` is a terrible name for a new crate; thoughts? Other

names considered: `uv-vars`, `uv-consts`.

## Test Plan

Existing tests

As per

https://matklad.github.io/2021/02/27/delete-cargo-integration-tests.html

Before that, there were 91 separate integration test binaries.

(As discussed on Discord — I've done the `uv` crate, there's still a few

more commits coming before this is mergeable, and I want to see how it

performs in CI and locally).

## Summary

This is a longstanding piece of technical debt. After we resolve, we

have a bunch of `ResolvedDist` entries. We then convert those to

`Requirement` (which is lossy -- we lose information like "the index

that the package was resolved to"), and then back to `Dist`.

## Summary

Historically, we've allowed the use of wheels that were downloaded from

PyPI even when the user passes `--no-binary`, if the wheel exists in the

cache. This PR modifies the cache lookup code such that we respect

`--no-build` and `--no-binary` in those paths.

Closes https://github.com/astral-sh/uv/issues/2154.

## Summary

If `--config-settings` are provided, we cache the built wheels under one

more subdirectory.

We _don't_ invalidate the actual source (i.e., trigger a re-download) or

metadata, though -- those can be reused even when `--config-settings`

change.
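
One way to picture the extra subdirectory is the sketch below. The function name, the directory layout, and the hashing scheme (a truncated SHA-256 of the sorted settings) are assumptions made for illustration; uv's actual cache key may differ.

```python
import hashlib
import json
from pathlib import PurePosixPath


def built_wheel_dir(source_dir: PurePosixPath, config_settings: dict[str, str]) -> PurePosixPath:
    """Return the directory in which built wheels for a source are cached."""
    # Without settings, wheels live directly under the source's cache entry.
    if not config_settings:
        return source_dir
    # With settings, add one more subdirectory keyed by the settings, so the
    # downloaded source and metadata in `source_dir` remain shared.
    payload = json.dumps(config_settings, sort_keys=True).encode("utf-8")
    digest = hashlib.sha256(payload).hexdigest()[:16]
    return source_dir / digest


base = PurePosixPath("built-wheels/example-source")  # hypothetical cache entry
plain = built_wheel_dir(base, {})
debug = built_wheel_dir(base, {"--build-option": "--debug"})
```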

Closes https://github.com/astral-sh/uv/issues/7028.

## Summary

This PR adds a more flexible cache invalidation abstraction for uv, and

uses that new abstraction to improve support for dynamic metadata.

Specifically, instead of relying solely on a timestamp, we now pass

around a `CacheInfo` struct which (as of now) contains

`Option<Timestamp>` and `Option<Commit>`. The `CacheInfo` is saved in

`dist-info` as `uv_cache.json`, so we can test already-installed

distributions for cache validity (along with testing _cached_

distributions for cache validity).

Beyond the defaults (`pyproject.toml`, `setup.py`, and `setup.cfg`

changes), users can also specify additional cache keys, and it's easy

for us to extend support in the future. Right now, cache keys can either

be instructions to include the current commit (for `setuptools_scm` and

similar) or file paths (for `hatch-requirements-txt` and similar):

```toml

[tool.uv]

cache-keys = [{ file = "requirements.txt" }, { git = true }]

```
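
As a rough mental model, the validity check can be sketched in Python as below. The field names follow the description above, but the class, the `is_valid` helper, and the "all fields must match" rule are assumptions, not uv's Rust implementation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CacheInfo:
    """Hypothetical mirror of the struct described above."""

    timestamp: Optional[float] = None  # e.g., newest mtime among tracked files
    commit: Optional[str] = None  # current commit when `{ git = true }` is set


def is_valid(stored: CacheInfo, current: CacheInfo) -> bool:
    # Assumption: an entry is reusable only if every recorded field matches.
    return stored == current


stored = CacheInfo(timestamp=1_700_000_000.0, commit="abc123")
same = CacheInfo(timestamp=1_700_000_000.0, commit="abc123")
new_commit = CacheInfo(timestamp=1_700_000_000.0, commit="def456")
```

With these values, `is_valid(stored, same)` holds, while a new commit invalidates the entry even though no tracked file changed.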

This change should be fully backwards compatible.

Closes https://github.com/astral-sh/uv/issues/6964.

Closes https://github.com/astral-sh/uv/issues/6255.

Closes https://github.com/astral-sh/uv/issues/6860.

## Summary

Separate exceptions for different timeouts to make it easier to debug

issues like #6105.

## Test Plan

Not tested at all.

## Summary

Use a dedicated source type for non-package requirements. Also enables

us to support non-package `path` dependencies _and_ removes the need to

have the member `pyproject.toml` files available when we sync _and_

makes it explicit which dependencies are virtual vs. not (as evidenced

by the snapshot changes). All good things!

## Summary

This is similar to https://github.com/astral-sh/uv/pull/6171 but more

expansive... _Anywhere_ that we test requirements for platform

compatibility, we _need_ to respect the resolver-friendly markers. In

fixing the motivating issue (#6621), I also realized that we had a bunch

of bugs here around `pip install` with `--python-platform` and

`--python-version`, because we always performed our `satisfy` and `Plan`

operations on the interpreter's markers, not the adjusted markers!

Closes https://github.com/astral-sh/uv/issues/6621.

For users who were using absolute paths in the `pyproject.toml`

previously, this is a behavior change: We now convert all absolute paths

in `path` entries to relative paths. Since I assume that no one relies

on absolute paths in their lockfiles (they are intended to be portable),

I'm tagging this as a bugfix.
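
The conversion itself can be illustrated with the standard library. This sketch assumes paths are made relative to the directory containing the lockfile, which the description above does not state; `lock_path` is a hypothetical helper.

```python
import posixpath


def lock_path(install_path: str, lock_dir: str) -> str:
    """Express an absolute path relative to the lockfile's directory."""
    # Assumption: `lock_dir` is the directory that holds the lockfile.
    return posixpath.relpath(install_path, start=lock_dir)


inside = lock_path("/home/user/project/packages/seeds", "/home/user/project")
outside = lock_path("/home/user/other/a", "/home/user/project")
```

A path inside the project becomes `packages/seeds`, and a sibling directory becomes `../other/a`, so the lockfile no longer depends on where the project is checked out.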

Closes https://github.com/astral-sh/uv/pull/6438

Fixes https://github.com/astral-sh/uv/issues/6371

Right now, the URL gets out-of-sync with the install path, since the

install path is canonicalized. This leads to a subtle error on Windows

(in CI) in which we don't preserve caching across resolution and

installation.

Surprisingly, this is a lockfile schema change: We can't store relative

paths in urls, so we have to store a `filename` entry instead of the

whole url.

Fixes #4355

## Summary

This PR adds a `DistExtension` field to some of our distribution types,

which requires that we validate that the file type is known and

supported when parsing (rather than when attempting to unzip). It

removes a bunch of extension parsing from the code too, in favor of

doing it once upfront.
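
The "parse once, upfront" idea looks roughly like this in Python. The enum below is a hypothetical stand-in: the three extensions are an assumed subset chosen for illustration, not the full list uv supports.

```python
from enum import Enum


class DistExtension(Enum):
    # Assumed subset of supported file types, for illustration only.
    WHEEL = ".whl"
    TAR_GZ = ".tar.gz"
    ZIP = ".zip"


def parse_extension(filename: str) -> DistExtension:
    """Validate the file type when parsing, rather than when unzipping."""
    for ext in DistExtension:
        if filename.endswith(ext.value):
            return ext
    raise ValueError(f"unsupported distribution file type: {filename!r}")


wheel = parse_extension("example-1.0-py3-none-any.whl")
sdist = parse_extension("example-1.0.tar.gz")
```

Callers that receive a `DistExtension` no longer need to re-inspect the filename, and an unknown extension fails at parse time.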

Closes https://github.com/astral-sh/uv/issues/5858.

## Summary

Very subtle bug. The scenario is as follows:

- We resolve: `elmer-circuitbuilder = { git =

"https://github.com/ElmerCSC/elmer_circuitbuilder.git" }`

- The user then changes the request to: `elmer-circuitbuilder = { git =

"https://github.com/ElmerCSC/elmer_circuitbuilder.git", rev =

"44d2f4b19d6837ea990c16f494bdf7543d57483d" }`

- When we go to re-lock, we note two facts:

1. The "default branch" resolves to

`44d2f4b19d6837ea990c16f494bdf7543d57483d`.

2. The metadata for `44d2f4b19d6837ea990c16f494bdf7543d57483d` is

(whatever we grab from the lockfile).

- In the resolver, we then ask for the metadata for

`44d2f4b19d6837ea990c16f494bdf7543d57483d`. It's already in the cache,

so we return it; thus, we never add the

`44d2f4b19d6837ea990c16f494bdf7543d57483d` ->

`44d2f4b19d6837ea990c16f494bdf7543d57483d` mapping to the Git resolver,

because we never have to resolve it.

This would apply for any case in which a requested tag or branch was

replaced by its precise SHA. Replacing with a different commit is fine.

It only applied to `tool.uv.sources`, and not PEP 508 URLs, because the

underlying issue is that we aren't consistent about "automatically"

extracting the precise commit from a Git reference.

Closes https://github.com/astral-sh/uv/issues/5860.

## Summary

We allow the use of (e.g.) `.whl.metadata` files when `--no-binary` is

enabled, so it makes sense that we'd also allow wheels to be

downloaded for metadata extraction. So now, we validate `--no-binary` at

install time, rather than metadata-fetch time.

Closes https://github.com/astral-sh/uv/issues/5699.

## Summary

The package was being installed as editable, but it wasn't marked as

such in `uv pip list`, as the `direct-url.json` was wrong.

Closes https://github.com/astral-sh/uv/issues/5543.

## Summary

The idea here is similar to what we do for wheels: we create the

`CachedEnvironment` in the `archive-v0` bucket, then symlink it to its

content-addressed location. This ensures that we can always recreate

these environments without concern for whether anyone else is accessing

them.

Part of the challenge here is that we want the virtual environments to

be relocatable, because we're now building them in one location but

persisting them in another. This requires that we write relative (rather

than absolute) paths to scripts and entrypoints. The main risk with

relocatable virtual environments is that the scripts and entrypoints

_themselves_ are not relocatable, because they use a relative shebang.

But that's fine for cached environments, which are never intended to

leave the cache.

Closes https://github.com/astral-sh/uv/issues/5503.

## Summary

Adds a `--relocatable` CLI arg to `uv venv`. This flag does two things:

* ensures that the associated activation scripts do not rely on a

hardcoded absolute path to the virtual environment (to the extent

possible; `.csh` and `.nu` left as-is)

* persists a `relocatable` flag in `pyvenv.cfg`.

The flag in `pyvenv.cfg` in turn instructs the wheel `Installer` to

create script entrypoints in a relocatable way (use `exec` trick +

`dirname $0` on POSIX; use relative path to `python[w].exe` on Windows).

Fixes: #3863

## Test Plan

* Relocatable console scripts covered as additional scenarios in

existing test cases.

* Integration testing of boilerplate generation in `venv`.

* Manual testing of `uv venv` with and without `--relocatable`

## Summary

I don't think that "always reinstall" is tenable for `uv run`. My

perspective on this is that if you want "always reinstall", you can now

set it persistently in your `pyproject.toml` or `uv.toml`.

As a smaller change, we could instead disable this _only_ for the

Project API.

Closes https://github.com/astral-sh/uv/issues/4946.

## Summary

The current code was checking every constraint against every

requirement, regardless of whether they were applicable. In general,

this isn't a big deal, because this method is only used as a fast-path

to skip resolution -- so we just had way more false-negatives than we

should've when constraints were applied. But it's clearly wrong :)
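
The intended behavior can be sketched as follows. This is a simplified Python model with hypothetical names and tuple-based versions, not the actual method: each constraint is only consulted for the package it names.

```python
from typing import Callable

Version = tuple[int, ...]


def parse_version(text: str) -> Version:
    return tuple(int(part) for part in text.split("."))


def is_satisfied(
    installed: dict[str, str],
    requirements: list[str],
    constraints: dict[str, Callable[[Version], bool]],
) -> bool:
    """Fast path: can resolution be skipped for these requirements?"""
    for name in requirements:
        version = installed.get(name)
        if version is None:
            return False
        # Only the constraint for *this* package applies; constraints on
        # other packages must not be checked against its version.
        check = constraints.get(name)
        if check is not None and not check(parse_version(version)):
            return False
    return True


installed = {"flask": "3.0.3"}  # hypothetical installed version
constraints = {"numpy": lambda v: v < (1, 0)}  # models `numpy<1.0`
satisfied = is_satisfied(installed, ["flask"], constraints)
```

This mirrors the test plan below: a `numpy<1.0` constraint does not stop an installed Flask from being reported as satisfied.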

## Test Plan

- `uv venv`

- `uv pip install flask`

- `uv pip install --verbose flask -c constraints.txt` (with `numpy<1.0`)

Prior to this change, Flask was reported as not satisfied.

## Summary

Move completely off tokio's multi-threaded runtime. We've slowly been

making changes to be smarter about scheduling in various places instead

of depending on tokio's general purpose work-stealing, notably

https://github.com/astral-sh/uv/pull/3627 and

https://github.com/astral-sh/uv/pull/4004. We now no longer benefit from

the multi-threaded runtime, as we run all I/O on the main thread.

There's one remaining instance of `block_in_place` that can be swapped

for `rayon::spawn`.

This change is a small performance improvement due to removing some

unnecessary overhead of the multi-threaded runtime (e.g. spawning

threads), but nothing major. It also removes some noise from profiles.

## Test Plan

```

Benchmark 1: ./target/profiling/uv (resolve-warm)

Time (mean ± σ): 14.9 ms ± 0.3 ms [User: 3.0 ms, System: 17.3 ms]

Range (min … max): 14.1 ms … 15.8 ms 169 runs

Benchmark 2: ./target/profiling/baseline (resolve-warm)

Time (mean ± σ): 16.1 ms ± 0.3 ms [User: 3.9 ms, System: 18.7 ms]

Range (min … max): 15.1 ms … 17.3 ms 162 runs

Summary

./target/profiling/uv (resolve-warm) ran

1.08 ± 0.03 times faster than ./target/profiling/baseline (resolve-warm)

```

Whew, this is a lot.

The user-facing changes are:

- `uv toolchain` to `uv python` e.g. `uv python find`, `uv python

install`, ...

- `UV_TOOLCHAIN_DIR` to `UV_PYTHON_INSTALL_DIR`

- `<UV_STATE_DIR>/toolchains` to `<UV_STATE_DIR>/python` (with

[automatic migration](https://github.com/astral-sh/uv/pull/4735/files#r1663029330))

- User-facing messages no longer refer to toolchains, instead using

"Python", "Python versions" or "Python installations"

The internal changes are:

- `uv-toolchain` crate to `uv-python`

- `Toolchain` no longer referenced in type names

- Dropped unused `SystemPython` type (previously replaced)

- Clarified the type names for "managed Python installations"

- (more little things)

Updates `--no-binary <package>` to take precedence over `--only-binary

:all:` and `--only-binary <package>` to take precedence over

`--no-binary :all:`.

I'm not entirely sure about this behavior, e.g. maybe I provided

`--only-binary :all:` later on the command line and really want it to

override those earlier arguments of `--no-binary <package>` for safety.

Right now we just fail to solve though since we can't satisfy the

overlapping requests.
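
The precedence described above can be sketched in Python. `build_policy` and its return values are hypothetical, and the tie-breaking order (a package listed in both sets, or `:all:` in both) is an assumption that the description does not settle.

```python
def build_policy(package: str, no_binary: set[str], only_binary: set[str]) -> str:
    """Return "source-only", "binary-only", or "any" for a package."""
    # Package-specific entries take precedence over `:all:`.
    if package in no_binary:
        return "source-only"
    if package in only_binary:
        return "binary-only"
    # Assumption: with no package-specific entry, `:all:` applies, and
    # `--no-binary :all:` is checked first.
    if ":all:" in no_binary:
        return "source-only"
    if ":all:" in only_binary:
        return "binary-only"
    return "any"


numpy_policy = build_policy("numpy", no_binary={"numpy"}, only_binary={":all:"})
flask_policy = build_policy("flask", no_binary={"numpy"}, only_binary={":all:"})
```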

Closes https://github.com/astral-sh/uv/issues/4063

## Summary

This is what I consider to be the "real" fix for #8072. We now treat

directory and path URLs as separate `ParsedUrl` types and

`RequirementSource` types. This removes a lot of `.is_dir()` forking

within the `ParsedUrl::Path` arms and makes some states impossible

(e.g., you can't have a `.whl` path that is editable). It _also_ fixes

the `direct_url.json` for direct URLs that refer to files. Previously,

we wrote out to these as if they were installed as directories, which is

just wrong.

## Summary

Right now, we're _always_ reinstalling local wheel archives, even if the

timestamp didn't change.

I want to fix the TODO properly but I will do so in a separate PR.

By splitting `path` into a lockable path (relative or absolute) and an

absolute installable path, and by distinguishing URLs from paths by

dist type, we can store relative paths in the lockfile.

## Summary

As with other `.egg-info` and `.egg-link` distributions, it's easy to

support _existing_ `.egg-link` files. Like pip, we refuse to uninstall

these, since there's no way to know which files are part of the

distribution.

Closes https://github.com/astral-sh/uv/issues/4059.

## Test Plan

Verify that `vtk` is included here, which is installed as a `.egg-link`

file:

```

> conda create -c conda-forge -n uv-test python h5py vtk pyside6 cftime psutil

> cargo run pip freeze --python /opt/homebrew/Caskroom/miniforge/base/envs/uv-test/bin/python

aiohttp @ file:///Users/runner/miniforge3/conda-bld/aiohttp_1713964997382/work

aiosignal @ file:///home/conda/feedstock_root/build_artifacts/aiosignal_1667935791922/work

attrs @ file:///home/conda/feedstock_root/build_artifacts/attrs_1704011227531/work

cached-property @ file:///home/conda/feedstock_root/build_artifacts/cached_property_1615209429212/work

cftime @ file:///Users/runner/miniforge3/conda-bld/cftime_1715919201099/work

frozenlist @ file:///Users/runner/miniforge3/conda-bld/frozenlist_1702645558715/work

h5py @ file:///Users/runner/miniforge3/conda-bld/h5py_1715968397721/work

idna @ file:///home/conda/feedstock_root/build_artifacts/idna_1713279365350/work

loguru @ file:///Users/runner/miniforge3/conda-bld/loguru_1695547410953/work

msgpack @ file:///Users/runner/miniforge3/conda-bld/msgpack-python_1715670632250/work

multidict @ file:///Users/runner/miniforge3/conda-bld/multidict_1707040780513/work

numpy @ file:///Users/runner/miniforge3/conda-bld/numpy_1707225421156/work/dist/numpy-1.26.4-cp312-cp312-macosx_11_0_arm64.whl

pip==24.0

psutil @ file:///Users/runner/miniforge3/conda-bld/psutil_1705722460205/work

pyside6==6.7.1

setuptools==70.0.0

shiboken6==6.7.1

vtk==9.2.6

wheel==0.43.0

wslink @ file:///home/conda/feedstock_root/build_artifacts/wslink_1716591560747/work

yarl @ file:///Users/runner/miniforge3/conda-bld/yarl_1705508643525/work

```

## Summary

Avoid using work-stealing Tokio workers for bytecode compilation,

favoring instead dedicated threads. Tokio's work-stealing does not

really benefit us because we're spawning Python workers and scheduling

tasks ourselves — we don't want Tokio to re-balance our workers. Because

we're doing scheduling ourselves and compilation is a primarily

compute-bound task, we can also create dedicated runtimes for each

worker and avoid some synchronization overhead.

This is part of a general desire to avoid relying on Tokio's

work-stealing scheduler and be smarter about our workload. In this case

we already had the custom scheduler in place, Tokio was just getting in

the way (though the overhead is very minor).

## Test Plan

This improves performance by ~5% on my machine.

```

$ hyperfine --warmup 1 --prepare "target/profiling/uv-dev clear-compile .venv" "target/profiling/uv-dev compile .venv" "target/profiling/uv-dev-dedicated compile .venv"

Benchmark 1: target/profiling/uv-dev compile .venv

Time (mean ± σ): 1.279 s ± 0.011 s [User: 13.803 s, System: 2.998 s]

Range (min … max): 1.261 s … 1.296 s 10 runs

Benchmark 2: target/profiling/uv-dev-dedicated compile .venv

Time (mean ± σ): 1.220 s ± 0.021 s [User: 13.997 s, System: 3.330 s]

Range (min … max): 1.198 s … 1.272 s 10 runs

Summary

target/profiling/uv-dev-dedicated compile .venv ran

1.05 ± 0.02 times faster than target/profiling/uv-dev compile .venv

$ hyperfine --warmup 1 --prepare "target/profiling/uv-dev clear-compile .venv" "target/profiling/uv-dev compile .venv" "target/profiling/uv-dev-dedicated compile .venv"

Benchmark 1: target/profiling/uv-dev compile .venv

Time (mean ± σ): 3.631 s ± 0.078 s [User: 47.205 s, System: 4.996 s]

Range (min … max): 3.564 s … 3.832 s 10 runs

Benchmark 2: target/profiling/uv-dev-dedicated compile .venv

Time (mean ± σ): 3.521 s ± 0.024 s [User: 48.201 s, System: 5.392 s]

Range (min … max): 3.484 s … 3.566 s 10 runs

Summary

target/profiling/uv-dev-dedicated compile .venv ran

1.03 ± 0.02 times faster than target/profiling/uv-dev compile .venv

```

Add a `--package` option that allows switching the current project in

the workspace. Wherever you are in a workspace, you should be able to

run with any other project as root. This is the uv equivalent of `cargo

run -p`.

I don't love the `--package` name, esp. since `-p` is already taken and

in general too many things start with p already.

Part of this change is moving the workspace discovery of

`ProjectWorkspace` to `Workspace` itself.

## Usage

In albatross-virtual-workspace:

```console

$ uv venv

$ uv run --preview --package bird-feeder python -c "import albatross"

Built file:///home/konsti/projects/uv/scripts/workspaces/albatross-virtual-workspace/packages/bird-feeder

Built file:///home/konsti/projects/uv/scripts/workspaces/albatross-virtual-workspace/packages/seeds

Built 2 editables in 167ms

Resolved 5 packages in 4ms

Installed 5 packages in 1ms

+ anyio==4.4.0

+ bird-feeder==1.0.0 (from file:///home/konsti/projects/uv/scripts/workspaces/albatross-virtual-workspace/packages/bird-feeder)

+ idna==3.6

+ seeds==1.0.0 (from file:///home/konsti/projects/uv/scripts/workspaces/albatross-virtual-workspace/packages/seeds)

+ sniffio==1.3.1

Traceback (most recent call last):

File "<string>", line 1, in <module>

ModuleNotFoundError: No module named 'albatross'

$ uv venv

$ uv run --preview --package albatross python -c "import albatross"

Built file:///home/konsti/projects/uv/scripts/workspaces/albatross-virtual-workspace/packages/albatross

Built file:///home/konsti/projects/uv/scripts/workspaces/albatross-virtual-workspace/packages/bird-feeder

Built file:///home/konsti/projects/uv/scripts/workspaces/albatross-virtual-workspace/packages/seeds

Built 3 editables in 173ms

Resolved 7 packages in 6ms

Installed 7 packages in 1ms

+ albatross==0.1.0 (from file:///home/konsti/projects/uv/scripts/workspaces/albatross-virtual-workspace/packages/albatross)

+ anyio==4.4.0

+ bird-feeder==1.0.0 (from file:///home/konsti/projects/uv/scripts/workspaces/albatross-virtual-workspace/packages/bird-feeder)

+ idna==3.6

+ seeds==1.0.0 (from file:///home/konsti/projects/uv/scripts/workspaces/albatross-virtual-workspace/packages/seeds)

+ sniffio==1.3.1

+ tqdm==4.66.4

```

In albatross-root-workspace:

```console

$ uv venv

$ uv run --preview --package bird-feeder python -c "import albatross"

Using Python 3.12.3 interpreter at: /home/konsti/.local/bin/python3

Creating virtualenv at: .venv

Activate with: source .venv/bin/activate

Finished `dev` profile [unoptimized + debuginfo] target(s) in 0.10s

Running `/home/konsti/projects/uv/target/debug/uv run --preview --package bird-feeder python -c 'import albatross'`

Built file:///home/konsti/projects/uv/scripts/workspaces/albatross-root-workspace/packages/bird-feeder

Built file:///home/konsti/projects/uv/scripts/workspaces/albatross-root-workspace/packages/seeds

Built 2 editables in 161ms

Resolved 5 packages in 4ms

Installed 5 packages in 1ms

+ anyio==4.4.0

+ bird-feeder==1.0.0 (from file:///home/konsti/projects/uv/scripts/workspaces/albatross-root-workspace/packages/bird-feeder)

+ idna==3.6

+ seeds==1.0.0 (from file:///home/konsti/projects/uv/scripts/workspaces/albatross-root-workspace/packages/seeds)

+ sniffio==1.3.1

Traceback (most recent call last):

File "<string>", line 1, in <module>

ModuleNotFoundError: No module named 'albatross'

$ uv venv

$ cargo run run --preview --package albatross python -c "import albatross"

Using Python 3.12.3 interpreter at: /home/konsti/.local/bin/python3

Creating virtualenv at: .venv

Activate with: source .venv/bin/activate

Finished `dev` profile [unoptimized + debuginfo] target(s) in 0.13s

Running `/home/konsti/projects/uv/target/debug/uv run --preview --package albatross python -c 'import albatross'`

Built file:///home/konsti/projects/uv/scripts/workspaces/albatross-root-workspace

Built file:///home/konsti/projects/uv/scripts/workspaces/albatross-root-workspace/packages/bird-feeder

Built file:///home/konsti/projects/uv/scripts/workspaces/albatross-root-workspace/packages/seeds

Built 3 editables in 168ms

Resolved 7 packages in 5ms

Installed 7 packages in 1ms

+ albatross==0.1.0 (from file:///home/konsti/projects/uv/scripts/workspaces/albatross-root-workspace)

+ anyio==4.4.0

+ bird-feeder==1.0.0 (from file:///home/konsti/projects/uv/scripts/workspaces/albatross-root-workspace/packages/bird-feeder)

+ idna==3.6

+ seeds==1.0.0 (from file:///home/konsti/projects/uv/scripts/workspaces/albatross-root-workspace/packages/seeds)

+ sniffio==1.3.1

+ tqdm==4.66.4

```

With the change, we remove the special casing of workspace dependencies

and resolve `tool.uv` for all git and directory distributions. This

gives us support for non-editable workspace dependencies and path

dependencies in other workspaces. It removes a lot of special casing

around workspaces. These changes are the groundwork for supporting

`tool.uv` with dynamic metadata.

The basis for this change is moving `Requirement` from

`distribution-types` to `pypi-types` and the lowering logic from

`uv-requirements` to `uv-distribution`. These changes should be split out

in separate PRs.

I've included an example workspace `albatross-root-workspace2` where

`bird-feeder` depends on `a` from another workspace `ab`. There's a

bunch of failing tests and regressed error messages that still need

fixing. It does fix the audited package count for the workspace tests.

## Summary

There are a few behavior changes in here:

- We now enforce `--require-hashes` for editables, like pip. So if you

use `--require-hashes` with an editable requirement, we'll reject it. I

could change this if it seems off.

- We now treat source tree requirements, editable or not (e.g., both `-e

./black` and `./black`) as if `--refresh` is always enabled. This

doesn't mean that we _always_ rebuild them; but if you pass

`--reinstall`, then yes, we always rebuild them. I think this is an

improvement and is close to how editables work today.

Closes #3844.

Closes #2695.

When parsing requirements from any source, directly parse the url parts

(and reject unsupported urls) instead of parsing url parts at a later

stage. This removes a bunch of error branches and concludes the work of

parsing URL parts once and passing them around everywhere.

Many usages of the assembled `VerbatimUrl` remain, but these can be

removed incrementally.

Please review commit-by-commit.

This is split out from workspaces support, which needs editables in the

bluejay commands. It consists mainly of refactorings:

* Move the `editable` module one level up.

* Introduce a `BuiltEditableMetadata` type for `(LocalEditable,

Metadata23, Requirements)`.

* Add editables to `InstalledPackagesProvider` so we can use

`EmptyInstalledPackages` for them.

## Summary

The main motivation here is that the `.filename()` method that we

implement on `Url` will do URL decoding for the last segment, which we

were missing here.
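
To illustrate why the decoding matters, here is a small Python sketch using only the standard library. The `filename` function is a hypothetical analogue of the method mentioned above, and the URL is made up.

```python
from urllib.parse import unquote, urlsplit


def filename(url: str) -> str:
    """Return the percent-decoded last path segment of a URL."""
    last = urlsplit(url).path.rsplit("/", 1)[-1]
    if not last:
        raise ValueError(f"failed to extract filename from URL: {url}")
    # Decode escapes such as `%2B`, which would otherwise leak into the name.
    return unquote(last)


name = filename("https://example.org/whl/torch-2.1.0%2Bcu121-cp311-cp311-linux_x86_64.whl")
```

Without the decoding step, the local version separator would stay as `%2B` instead of `+`.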

The errors are a bit awkward, because in

`crates/uv-resolver/src/lock.rs`, we wrap in `failed to extract filename

from URL: {url}`, so in theory we want the underlying errors to _omit_

the URL? But sometimes they use `#[error(transparent)]`?

## Summary

This PR introduces parallelism to the resolver. Specifically, we can

perform PubGrub resolution on a separate thread, while keeping all I/O

on the tokio thread. We already have the infrastructure set up for this

with the channel and `OnceMap`, which makes this change relatively

simple. The big change needed to make this possible is removing the

lifetimes on some of the types that need to be shared between the

resolver and pubgrub thread.
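
The split between a solver thread and an I/O side can be modeled in Python as below. This is only a toy: the names, the fake metadata table, and the blocking request/response handshake are assumptions, and it does not model prefetching or PubGrub itself.

```python
import queue
import threading
from concurrent.futures import Future


def solver(root: str, requests: queue.Queue, result: list) -> None:
    """CPU-bound resolution loop; it never performs I/O itself."""
    resolved: dict[str, list[str]] = {}
    pending = [root]
    while pending:
        name = pending.pop()
        if name in resolved:
            continue
        response: Future = Future()
        requests.put((name, response))  # ask the I/O side for metadata
        deps = response.result()  # wait until the metadata arrives
        resolved[name] = deps
        pending.extend(deps)
    result.append(resolved)
    requests.put(None)  # signal completion


def io_loop(requests: queue.Queue, metadata: dict[str, list[str]]) -> None:
    """Serve metadata requests; stands in for the async I/O thread."""
    while (item := requests.get()) is not None:
        name, response = item
        response.set_result(metadata[name])


METADATA = {"a": ["b", "c"], "b": ["c"], "c": []}  # stand-in for network fetches
requests: queue.Queue = queue.Queue()
result: list = []
thread = threading.Thread(target=solver, args=("a", requests, result))
thread.start()
io_loop(requests, METADATA)
thread.join()
```

Because the two sides communicate only through the queue and futures, neither borrows state from the other, which loosely parallels removing lifetimes from the shared types.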

A related PR, https://github.com/astral-sh/uv/pull/1163, found that

adding `yield_now` calls improved throughput. With optimal scheduling we

might be able to get away with everything on the same thread here.

However, in the ideal pipeline with perfect prefetching, the resolution

and prefetching can run completely in parallel without depending on one

another. While this would be very difficult to achieve, even with our

current prefetching pattern we see a consistent performance improvement

from parallelism.

This does also require reverting a few of the changes from

https://github.com/astral-sh/uv/pull/3413, but not all of them. The

sharing is isolated to the resolver task.

## Test Plan

On smaller tasks performance is mixed with ~2% improvements/regressions

on both sides. However, on medium-large resolution tasks we see the

benefits of parallelism, with improvements anywhere from 10-50%.

```

./scripts/requirements/jupyter.in

Benchmark 1: ./target/profiling/baseline (resolve-warm)

Time (mean ± σ): 29.2 ms ± 1.8 ms [User: 20.3 ms, System: 29.8 ms]

Range (min … max): 26.4 ms … 36.0 ms 91 runs

Benchmark 2: ./target/profiling/parallel (resolve-warm)

Time (mean ± σ): 25.5 ms ± 1.0 ms [User: 19.5 ms, System: 25.5 ms]

Range (min … max): 23.6 ms … 27.8 ms 99 runs

Summary

./target/profiling/parallel (resolve-warm) ran

1.15 ± 0.08 times faster than ./target/profiling/baseline (resolve-warm)

```

```

./scripts/requirements/boto3.in

Benchmark 1: ./target/profiling/baseline (resolve-warm)

Time (mean ± σ): 487.1 ms ± 6.2 ms [User: 464.6 ms, System: 61.6 ms]

Range (min … max): 480.0 ms … 497.3 ms 10 runs

Benchmark 2: ./target/profiling/parallel (resolve-warm)

Time (mean ± σ): 430.8 ms ± 9.3 ms [User: 529.0 ms, System: 77.2 ms]

Range (min … max): 417.1 ms … 442.5 ms 10 runs

Summary

./target/profiling/parallel (resolve-warm) ran

1.13 ± 0.03 times faster than ./target/profiling/baseline (resolve-warm)

```

```

./scripts/requirements/airflow.in

Benchmark 1: ./target/profiling/baseline (resolve-warm)

Time (mean ± σ): 478.1 ms ± 18.8 ms [User: 482.6 ms, System: 205.0 ms]

Range (min … max): 454.7 ms … 508.9 ms 10 runs

Benchmark 2: ./target/profiling/parallel (resolve-warm)

Time (mean ± σ): 308.7 ms ± 11.7 ms [User: 428.5 ms, System: 209.5 ms]

Range (min … max): 287.8 ms … 323.1 ms 10 runs

Summary

./target/profiling/parallel (resolve-warm) ran

1.55 ± 0.08 times faster than ./target/profiling/baseline (resolve-warm)

```

## Summary

Uses the editable handling from `pip sync`, and improves the

abstractions such that we can pass those resolved editables into the

resolver.

---------

Co-authored-by: konstin <konstin@mailbox.org>

## Summary

I think this is overall a good change because it explicitly encodes (in

the type system) something that was previously implicit. I'm not a huge

fan of the names here, open to input.

It covers some of https://github.com/astral-sh/uv/issues/3506 but I

don't think it _closes_ it.

## Summary

This PR consolidates the concurrency limits used throughout `uv` and

exposes two limits, `UV_CONCURRENT_DOWNLOADS` and

`UV_CONCURRENT_BUILDS`, as environment variables.

Currently, `uv` has a number of concurrent streams that it buffers using

relatively arbitrary limits for backpressure. However, many of these

limits are conflated. We run a relatively small number of tasks overall

and should start most things as soon as possible. What we really want to

limit are three separate operations:

- File I/O. This is managed by tokio's blocking pool and we should not

really have to worry about it.

- Network I/O.

- Python build processes.

Because the current limits span a broad range of tasks, it's possible

that a limit meant for network I/O is occupied by tasks performing

builds, reading from the file system, or even waiting on a `OnceMap`. We

also don't limit build processes that end up being required to perform a

download. While this may not pose a performance problem because our

limits are relatively high, it does mean that the limits do not do what

we want, making it tricky to expose them to users

(https://github.com/astral-sh/uv/issues/1205,

https://github.com/astral-sh/uv/issues/3311).

After this change, the limits on network I/O and build processes are

centralized and managed by semaphores. All other tasks are unbuffered

(note that these tasks are still bounded, so backpressure should not be

a problem).
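As a rough illustration of the idea (not the code in this PR), here is a minimal sketch of two independent limits backed by counting semaphores. It assumes a blocking, standard-library-only `Semaphore`, whereas uv is async; the names `Semaphore`, `Permit`, `Concurrency` and `peak_concurrent_downloads` are hypothetical.

```rust
use std::sync::atomic::{AtomicUsize, Ordering};
use std::sync::{Condvar, Mutex};
use std::time::Duration;

/// A minimal counting semaphore built on the standard library.
/// (Hypothetical: an async codebase would use an async semaphore instead.)
pub struct Semaphore {
    permits: Mutex<usize>,
    available: Condvar,
}

/// Returns its permit to the semaphore when dropped.
pub struct Permit<'a> {
    semaphore: &'a Semaphore,
}

impl Semaphore {
    pub fn new(permits: usize) -> Self {
        Self {
            permits: Mutex::new(permits),
            available: Condvar::new(),
        }
    }

    /// Block until a permit is free, then take it.
    pub fn acquire(&self) -> Permit<'_> {
        let mut permits = self.permits.lock().unwrap();
        while *permits == 0 {
            permits = self.available.wait(permits).unwrap();
        }
        *permits -= 1;
        Permit { semaphore: self }
    }
}

impl Drop for Permit<'_> {
    fn drop(&mut self) {
        *self.semaphore.permits.lock().unwrap() += 1;
        self.semaphore.available.notify_one();
    }
}

/// One limit per operation, so builds can never occupy download slots.
pub struct Concurrency {
    pub downloads: Semaphore,
    pub builds: Semaphore,
}

/// Run `tasks` download-like jobs on threads and report the peak number
/// that held a download permit at the same time.
pub fn peak_concurrent_downloads(limits: &Concurrency, tasks: usize) -> usize {
    let active = AtomicUsize::new(0);
    let peak = AtomicUsize::new(0);
    std::thread::scope(|scope| {
        for _ in 0..tasks {
            scope.spawn(|| {
                // The permit is held until the end of this closure.
                let _permit = limits.downloads.acquire();
                let now = active.fetch_add(1, Ordering::SeqCst) + 1;
                peak.fetch_max(now, Ordering::SeqCst);
                std::thread::sleep(Duration::from_millis(5));
                active.fetch_sub(1, Ordering::SeqCst);
            });
        }
    });
    peak.load(Ordering::SeqCst)
}
```

Because each operation has its own semaphore, a slow build holds a build permit only, and the download limit keeps meaning "at most N downloads in flight".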

## Summary

Ensures that we track the origins for requirements regardless of whether

they come from `pyproject.toml` or `setup.py` or `setup.cfg`.

Closes #3480.

This commit touches a lot of code, but the conceptual change here is

pretty simple: make it so we can run the resolver without providing a

`MarkerEnvironment`. This also indicates that the resolver should run in

universal mode. That is, the effect of a missing marker environment is

that all marker expressions that reference the marker environment are

evaluated to `true`. That is, they are ignored. (The only markers we

evaluate in that context are extras, which are the only markers that

aren't dependent on the environment.)
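To make the rule concrete, here is a minimal sketch of marker evaluation with an optional environment. The `Marker` and `MarkerEnvironment` types below are heavily simplified, hypothetical stand-ins (only `sys_platform` and `extra` are modeled), not the actual types in the codebase.

```rust
/// Hypothetical, simplified marker environment.
pub struct MarkerEnvironment {
    pub sys_platform: String,
}

/// Hypothetical, simplified marker expression tree.
pub enum Marker {
    /// `sys_platform == "<value>"`: depends on the environment.
    SysPlatform(String),
    /// `extra == "<name>"`: independent of the environment.
    Extra(String),
    And(Box<Marker>, Box<Marker>),
    Or(Box<Marker>, Box<Marker>),
}

impl Marker {
    /// Evaluate the marker. With `env == None` (universal mode), every
    /// environment-dependent expression is treated as `true`.
    pub fn evaluate(&self, env: Option<&MarkerEnvironment>, extras: &[&str]) -> bool {
        match self {
            Marker::SysPlatform(value) => match env {
                Some(env) => env.sys_platform == *value,
                None => true,
            },
            // Extras are still evaluated, even without an environment.
            Marker::Extra(name) => extras.contains(&name.as_str()),
            Marker::And(left, right) => {
                left.evaluate(env, extras) && right.evaluate(env, extras)
            }
            Marker::Or(left, right) => {
                left.evaluate(env, extras) || right.evaluate(env, extras)
            }
        }
    }
}
```

Under this rule, two requirements guarded by mutually exclusive `sys_platform` markers both apply at once when no environment is given.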

One interesting change here is that a `Resolver` no longer needs an

`Interpreter`. Previously, it had only been using it to construct a

`PythonRequirement`, by filling in the installed version from the

`Interpreter` state. But we now construct a `PythonRequirement`

explicitly since its `target` Python version should no longer be tied to

the `MarkerEnvironment`. (Currently, the marker environment is mutated

such that its `python_full_version` is derived from multiple sources,

including the CLI, which I found a touch confusing.)

The change in behavior can now be observed through the

`--unstable-uv-lock-file` flag. First, without it:

```

$ cat requirements.in

anyio>=4.3.0 ; sys_platform == "linux"

anyio<4 ; sys_platform == "darwin"

$ cargo run -qp uv -- pip compile -p3.10 requirements.in

anyio==4.3.0

exceptiongroup==1.2.1

# via anyio

idna==3.7

# via anyio

sniffio==1.3.1

# via anyio

typing-extensions==4.11.0

# via anyio

```

And now with it:

```

$ cargo run -qp uv -- pip compile -p3.10 requirements.in --unstable-uv-lock-file

x No solution found when resolving dependencies:

`-> Because you require anyio>=4.3.0 and anyio<4, we can conclude that the requirements are unsatisfiable.

```

This is expected at this point because the marker expressions are being

explicitly ignored, *and* there is no forking done yet to account for

the conflict.

## Summary

Fixes https://github.com/astral-sh/uv/issues/1343. This is kinda a first

draft at the moment, but does at least mostly work locally (barring some

bits of the test suite that seem to not work for me in general).

## Test Plan

Mostly running the existing tests and checking the revised output is

sane

## Outstanding issues

Most of these come down to "AFAIK, the existing tools don't support

these patterns, but `uv` does" and so I'm not sure there's an existing

good answer here! Most of the answers so far are "whatever was easiest

to build"

- [x] ~~Is "-r pyproject.toml" correct? Should it show something else or

get skipped entirely~~ No it wasn't. Fixed in

3044fa8b86

- [ ] If the requirements file is stdin, that just gets skipped. Should

it be recorded?

- [ ] Overrides get shown as "--override<override.txt>". Correct?

- [x] ~~Some of the tests (e.g.

`dependency_excludes_non_contiguous_range_of_compatible_versions`) make

assumptions about the order of package versions being outputted, which

this PR breaks. I'm not sure if the text is fairly arbitrary and can be

replaced or whether the behaviour needs fixing?~~ - fixed by removing

the custom pubgrub PartialEq/Hash

- [ ] Are all the `TrackedFromStr` et al changes needed, or is there an

easier way? I don't think so, I think it's necessary to track these sort

of things fairly comprehensively to make this feature work, and this

sort of invasive change feels necessary, but happy to be proved wrong

there :)

- [x] ~~If you have a requirement coming in from two or more different

requirements files only one turns up. I've got a closed-source example

for this (can go into more detail if needed), mostly consisting of a

complicated set of common deps creating a larger set. It's a rarer case,

but worth considering.~~ 042432b200

- [ ] Doesn't add annotations for `setup.py` yet

- This is pretty hard, as the correct location to insert the path is

`crates/pypi-types/src/metadata.rs`'s `parse_pkg_info`, which as it's

based off a source distribution has entirely thrown away such matters as

"where did this package requirement get built from". Could add "`built

package name`" as a dep, but that's a little odd.

## Summary

All of the resolver code is run on the main thread, so a lot of the

`Send` bounds and uses of `DashMap` and `Arc` are unnecessary. We could

also switch to using single-threaded versions of `Mutex` and `Notify` in

some places, but there isn't really a crate that provides those I would

be comfortable with using.

The `Arc` in `OnceMap` can't easily be removed because of the uv-auth

code which uses the

[reqwest-middleware](https://docs.rs/reqwest-middleware/latest/reqwest_middleware/trait.Middleware.html)

crate, which seems to add unnecessary `Send` bounds because of

`async-trait`. We could duplicate the code and create a `OnceMapLocal`

variant, but I don't feel that's worth it.

## Summary

Users often find themselves dropped into environments that contain

`.egg-info` packages. While we won't support installing these, it's not

hard to support identifying them (e.g., in `pip freeze`) and

_uninstalling_ them.

Closes https://github.com/astral-sh/uv/issues/2841.

Closes #2928.

Closes #3341.

## Test Plan

Ran `cargo run pip freeze --python

/opt/homebrew/Caskroom/miniforge/base/envs/TEST/bin/python`, with an

environment that includes `pip` as an `.egg-info`

(`/opt/homebrew/Caskroom/miniforge/base/envs/TEST/lib/python3.12/site-packages/pip-24.0-py3.12.egg-info`):

```

cffi @ file:///Users/runner/miniforge3/conda-bld/cffi_1696001825047/work

pip==24.0

pycparser @ file:///home/conda/feedstock_root/build_artifacts/pycparser_1711811537435/work

setuptools==69.5.1

wheel==0.43.0

```

Then ran `cargo run pip uninstall`, verified that `pip` was uninstalled,

and no longer listed in `pip freeze`.

## Introduction

PEP 621 is limited. Specifically, it lacks

* Relative path support

* Editable support

* Workspace support

* Index pinning or any sort of index specification

The semantics of urls are a custom extension: PEP 440 does not specify

how to use git references or subdirectories, instead pip has a custom

stringly format. We need to somehow support these while still staying

compatible with PEP 621.

## `tool.uv.sources`

Drawing inspiration from cargo, poetry and rye, we add `tool.uv.sources`

and (for now stub only) `tool.uv.workspace`:

```toml

[project]

name = "albatross"

version = "0.1.0"

dependencies = [

"tqdm >=4.66.2,<5",

"torch ==2.2.2",

"transformers[torch] >=4.39.3,<5",

"importlib_metadata >=7.1.0,<8; python_version < '3.10'",

"mollymawk ==0.1.0"

]

[tool.uv.sources]

tqdm = { git = "https://github.com/tqdm/tqdm", rev = "cc372d09dcd5a5eabdc6ed4cf365bdb0be004d44" }

importlib_metadata = { url = "https://github.com/python/importlib_metadata/archive/refs/tags/v7.1.0.zip" }

torch = { index = "torch-cu118" }

mollymawk = { workspace = true }

[tool.uv.workspace]

include = [

"packages/mollymawk"

]

[tool.uv.indexes]

torch-cu118 = "https://download.pytorch.org/whl/cu118"

```

See `docs/specifying_dependencies.md` for a detailed explanation of the

format. The basic gist is that `project.dependencies` is what ends up on

pypi, while `tool.uv.sources` are your non-published additions. We do

support the full range of PEP 508, we just hide it in the docs and

prefer the exploded table for easier readability and less confusion with

actual url parts.
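A minimal sketch of how a dependency name might be combined with its `tool.uv.sources` entry, assuming a lookup by package name. `Source`, `LoweredRequirement` and `lower` are hypothetical simplifications for illustration, not the types introduced by this PR.

```rust
use std::collections::HashMap;

/// Hypothetical, simplified view of a `tool.uv.sources` entry.
#[derive(Debug, Clone, PartialEq, Eq)]
pub enum Source {
    Git { git: String, rev: Option<String> },
    Url { url: String },
    Index { index: String },
    Workspace,
}

/// Hypothetical result of combining a published requirement with its
/// non-published source, if any.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct LoweredRequirement {
    pub name: String,
    pub specifier: String,
    /// `None` means the package comes from the default registry.
    pub source: Option<Source>,
}

/// Look up `name` in the sources table and attach the entry if present.
pub fn lower(
    name: &str,
    specifier: &str,
    sources: &HashMap<String, Source>,
) -> LoweredRequirement {
    LoweredRequirement {
        name: name.to_string(),
        specifier: specifier.to_string(),
        source: sources.get(name).cloned(),
    }
}
```

The point of the split is that `name` and `specifier` are what get published, while `source` only affects where the package is fetched from locally.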

This format should eventually be able to subsume requirements.txt's

current use cases. While we will continue to support the legacy `uv pip`

interface, this is a piece of uv's own top-level interface. Together

with `uv run` and a lockfile format, you should only need to write

`pyproject.toml` and do `uv run`, which generates/uses/updates your

lockfile behind the scenes, no more pip-style requirements involved. It

also lays the groundwork for implementing index pinning.

## Changes

This PR implements:

* Reading and lowering `project.dependencies`,

`project.optional-dependencies` and `tool.uv.sources` into a new

requirements format, including:

* Git dependencies

* Url dependencies

* Path dependencies, including relative and editable

* `pip install` integration

* Error reporting for invalid `tool.uv.sources`

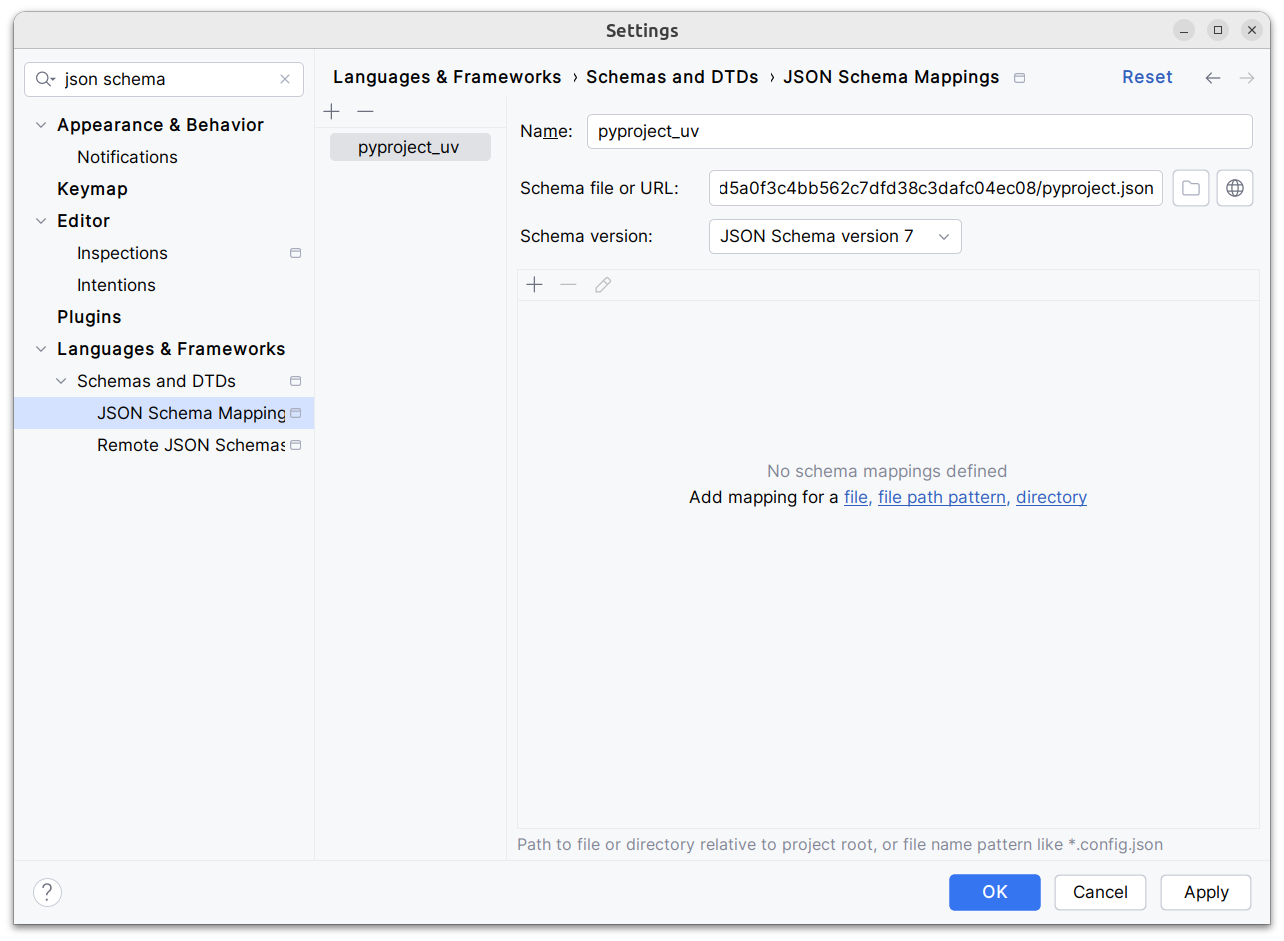

* Json schema integration (works in pycharm, see below)

* Draft user-level docs (see `docs/specifying_dependencies.md`)

It does not implement:

* `pip compile` testing (deprioritized in favor of our own lockfile)

* Index pinning (stub definitions only)

* Development dependencies

* Workspace support (stub definitions only)

* Overrides in pyproject.toml

* Patching/replacing dependencies

One technically breaking change is that we now require user provided

pyproject.toml to be valid with respect to PEP 621. Included files still fall

back to PEP 517. That means `pip install -r pyproject.toml` requires

it to be valid while `pip install -r requirements.txt` with `-e .` as

content falls back to PEP 517 as before.

## Implementation

The `pep508` requirement is replaced by a new `UvRequirement` (name up

for bikeshedding, not particularly attached to the uv prefix). The still

existing `pep508_rs::Requirement` type is a url format copied from pip's

requirements.txt and doesn't appropriately capture all features we

want/need to support. The bulk of the diff is changing the requirement

type throughout the codebase.

We still use `VerbatimUrl` in many places, where we would expect a

parsed/decomposed url type, specifically:

* Reading core metadata except top level pyproject.toml files, we fail a

step later instead if the url isn't supported.

* Allowed `Urls`.

* `PackageId` with a custom `CanonicalUrl` comparison, instead of

canonicalizing urls eagerly.

* `PubGrubPackage`: We eventually convert the `VerbatimUrl` back to a

`Dist` (`Dist::from_url`), instead of remembering the url.

* Source dist types: We use verbatim url even though we know and require

that these are supported urls we can and have parsed.

I tried to improve the situation by replacing `VerbatimUrl`, but

these changes would require massive invasive changes (see e.g.

https://github.com/astral-sh/uv/pull/3253). A main problem is the ref

`VersionOrUrl` and applying overrides, which assume the same

requirement/url type everywhere. In its current form, this PR increases

this tech debt.

I've tried to split off PRs and commits, but the main refactoring is

still a single monolith commit to make it compile and the tests pass.

## Demo

Adding

d1ae3b85d5/pyproject.json

as json schema (v7) to pycharm for `pyproject.toml`, you can try the IDE

support already:

[dove.webm](https://github.com/astral-sh/uv/assets/6826232/c293c272-c80b-459d-8c95-8c46a8d198a1)

Another split out from https://github.com/astral-sh/uv/pull/3263. This

abstracts the copy-pasted check of whether an installed distribution

satisfies a requirement used by both plan.rs and site_packages.rs into a

shared module. It's less useful here than with the new requirement but

helps with reducing https://github.com/astral-sh/uv/pull/3263 diff size.

Previously, a noop `uv pip install` would only show "Audited {}

package(s)" but no details, not even with `-vv`. Now it debug logs which

requirements were met and it also debug logs which requirement was

missing to trigger the full routine, allowing you to investigate caching

behaviour.

First `uv pip install -v jupyter`:

```

DEBUG At least one requirement is not satisfied: jupyter

```

Second `uv pip install -v jupyter`:

```

DEBUG Found a virtualenv named .venv at: /home/konsti/projects/uv-main/.venv

DEBUG Cached interpreter info for Python 3.12.1, skipping probing: .venv/bin/python

DEBUG Using Python 3.12.1 environment at .venv/bin/python

DEBUG Trying to lock if free: .venv/.lock

DEBUG Requirement satisfied: anyio

DEBUG Requirement satisfied: anyio>=3.1.0

DEBUG Requirement satisfied: argon2-cffi-bindings

DEBUG Requirement satisfied: argon2-cffi>=21.1

DEBUG Requirement satisfied: arrow>=0.15.0

DEBUG Requirement satisfied: asttokens>=2.1.0

DEBUG Requirement satisfied: async-lru>=1.0.0

DEBUG Requirement satisfied: attrs>=22.2.0

DEBUG Requirement satisfied: babel>=2.10

...

DEBUG Requirement satisfied: webencodings

DEBUG Requirement satisfied: webencodings>=0.4

DEBUG Requirement satisfied: websocket-client>=1.7

DEBUG Requirement satisfied: widgetsnbextension~=4.0.10

DEBUG All editables satisfied:

Audited 1 package in 12ms

```

This will clash with the `tool.uv.sources` PR; I'll rebase it on top.

Previously, uv-auth would fail to compile due to a missing process

feature. I chose to make all tokio features we use top level features,

so we can share the tokio cache between all test invocations.

Previously, we had `pypi_types::DirectUrl` (the pypa spec

direct_url.json format) and `distribution_types::DirectUrl` (an enum of

all the url types we support). This led to confusion, so I'm

renaming the latter one to the more appropriate `ParsedUrl`.

## Summary

This PR enables `--require-hashes` with unnamed requirements. The key

change is that `PackageId` becomes `VersionId` (since it refers to a

package at a specific version), and the new `PackageId` consists of

_either_ a package name _or_ a URL. The hashes are keyed by `PackageId`,

so we can generate the `RequiredHashes` before we have names for all

packages, and enforce them throughout.
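A minimal sketch of the keying scheme, assuming string-typed names, URLs and digests. The definitions of `PackageId` and `RequiredHashes` below are hypothetical simplifications that reuse the names from the description.

```rust
use std::collections::HashMap;

/// Hypothetical simplification: a package is identified by its name or,
/// for unnamed requirements, by its URL.
#[derive(Debug, Clone, PartialEq, Eq, Hash)]
pub enum PackageId {
    Name(String),
    Url(String),
}

/// Hypothetical simplification of the required-hashes table.
#[derive(Debug, Default)]
pub struct RequiredHashes(HashMap<PackageId, Vec<String>>);

impl RequiredHashes {
    /// Record the digests required for a package.
    pub fn insert(&mut self, id: PackageId, digests: Vec<String>) {
        self.0.insert(id, digests);
    }

    /// Look up the digests required for a package, if any.
    pub fn get(&self, id: &PackageId) -> Option<&[String]> {
        self.0.get(id).map(Vec::as_slice)
    }
}
```

Since the URL variant does not need a package name, the table can be built straight from the requirements file, before any unnamed requirement has been resolved to a name.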

Closes #2979.

## Summary

Similar to `Revision`, we now store IDs in the `Archive` entries rather

than absolute paths. This makes the cache robust to moves, etc.
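A minimal sketch of why storing an ID helps, assuming the archive lives in an `archives` directory under the cache root. The `ArchiveId` type and the directory layout are assumptions for illustration only.

```rust
use std::path::{Path, PathBuf};

/// Hypothetical stand-in for the ID stored in a cache entry.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct ArchiveId(pub String);

impl ArchiveId {
    /// Resolve the ID against wherever the cache lives right now.
    pub fn to_path(&self, cache_root: &Path) -> PathBuf {
        cache_root.join("archives").join(&self.0)
    }
}
```

The stored entry never mentions the cache root, so the same entry resolves correctly after the cache directory is moved.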

Closes https://github.com/astral-sh/uv/issues/2908.

## Summary

This PR formalizes some of the concepts we use in the cache for

"pointers to things".

In the wheel cache, we have files like

`annotated_types-0.6.0-py3-none-any.http`. This represents an unzipped

wheel, cached alongside an HTTP caching policy. We now have a struct for

this to encapsulate the logic: `HttpArchivePointer`.

Similarly, we have files like `annotated_types-0.6.0-py3-none-any.rev`.

This represents an unzipped local wheel, alongside a timestamp. We

now have a struct for this to encapsulate the logic:

`LocalArchivePointer`.

We have similar structs for source distributions too.

## Summary

This PR enables hash generation for URL requirements when the user

provides `--generate-hashes` to `pip compile`. While we include the

hashes from the registry already, today, we omit hashes for URLs.

To power hash generation, we introduce a `HashPolicy` abstraction:

```rust

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum HashPolicy<'a> {
    /// No hash policy is specified.
    None,
    /// Hashes should be generated (specifically, a SHA-256 hash), but not validated.
    Generate,
    /// Hashes should be validated against a pre-defined list of hashes. If necessary, hashes should
    /// be generated so as to ensure that the archive is valid.
    Validate(&'a [HashDigest]),
}

```

All of the methods on the distribution database now accept this policy,

instead of accepting `&'a [HashDigest]`.
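For illustration, here is a sketch of how a caller might interpret the policy. The `HashDigest` struct and the two helper methods, `requires_hashing` and `accepts`, are hypothetical and not part of this PR; the enum is repeated so the example is self-contained.

```rust
/// Hypothetical, simplified digest type.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct HashDigest {
    pub algorithm: String,
    pub digest: String,
}

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum HashPolicy<'a> {
    None,
    Generate,
    Validate(&'a [HashDigest]),
}

impl<'a> HashPolicy<'a> {
    /// Whether a digest has to be computed while reading the archive.
    /// (Hypothetical helper.)
    pub fn requires_hashing(&self) -> bool {
        !matches!(self, HashPolicy::None)
    }

    /// Whether a computed digest is acceptable under this policy.
    /// Only `Validate` can reject a digest. (Hypothetical helper.)
    pub fn accepts(&self, computed: &HashDigest) -> bool {
        match self {
            HashPolicy::None | HashPolicy::Generate => true,
            HashPolicy::Validate(expected) => expected.contains(computed),
        }
    }
}
```

Passing one enum instead of a bare digest slice lets the same code path distinguish "generate but don't check" from "check against these digests".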

Closes #2378.

## Summary

This PR adds support for hash-checking mode in `pip install` and `pip

sync`. It's a large change, both in terms of the size of the diff and

the modifications in behavior, but it's also one that's hard to merge in

pieces (at least, with any test coverage) since it needs to work

end-to-end to be useful and testable.

Here are some of the most important highlights:

- We store hashes in the cache. Where we previously stored pointers to

unzipped wheels in the `archives` directory, we now store pointers with

a set of known hashes. So every pointer to an unzipped wheel also

includes its known hashes.

- By default, we don't compute any hashes. If the user runs with

`--require-hashes`, and the cache doesn't contain those hashes, we

invalidate the cache, redownload the wheel, and compute the hashes as we

go. For users that don't run with `--require-hashes`, there will be no

change in performance. For users that _do_, the only change will be if

they don't run with `--generate-hashes` -- then they may see some

repeated work between resolution and installation, if they use `pip

compile` then `pip sync`.

- Many of the distribution types now include a `hashes` field, like

`CachedDist` and `LocalWheel`.

- Our behavior is similar to pip, in that we enforce hashes when pulling

any remote distributions, and when pulling from our own cache. Like pip,

though, we _don't_ enforce hashes if a distribution is _already_

installed.

- Hash validity is enforced in a few different places:

1. During resolution, we enforce hash validity based on the hashes

reported by the registry. If we need to access a source distribution,

though, we then enforce hash validity at that point too, prior to

running any untrusted code. (This is enforced in the distribution

database.)

2. In the install plan, we _only_ add cached distributions that have

matching hashes. If a cached distribution is missing any hashes, or the

hashes don't match, we don't return them from the install plan.

3. In the downloader, we _only_ return distributions with matching

hashes.

4. The final combination of "things we install" are: (1) the wheels from

the cache, and (2) the downloaded wheels. So this ensures that we never

install any mismatching distributions.

- Like pip, if `--require-hashes` is provided, we require that _all_

distributions are pinned with either `==` or a direct URL. We also

require that _all_ distributions have hashes.
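The install-plan rule above (only reuse cached distributions with matching hashes) can be sketched roughly as follows. This is a simplified illustration, assuming string digests and that one matching digest is enough; the `CachedDist` struct here and the `usable_from_cache` function are hypothetical, not the PR's implementation.

```rust
use std::collections::HashMap;

/// Hypothetical, simplified cached distribution with its known hashes.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct CachedDist {
    pub name: String,
    pub hashes: Vec<String>,
}

/// Keep only the cached distributions whose known hashes include at least
/// one of the digests required for that package. Distributions with no
/// known hashes, or with no required digests at all, are rejected.
pub fn usable_from_cache<'a>(
    cached: &'a [CachedDist],
    required: &HashMap<String, Vec<String>>,
) -> Vec<&'a CachedDist> {
    cached
        .iter()
        .filter(|dist| match required.get(&dist.name) {
            Some(allowed) => dist.hashes.iter().any(|hash| allowed.contains(hash)),
            None => false,
        })
        .collect()
}
```

Anything filtered out here would fall through to the downloader, which applies the same check to what it fetches.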

There are a few notable TODOs:

- We don't support hash-checking mode for unnamed requirements. These

should be _somewhat_ rare, though? Since `pip compile` never outputs

unnamed requirements. I can fix this, it's just some additional work.

- We don't automatically enable `--require-hashes` when a hash exists in

the requirements file. We require `--require-hashes`.

Closes #474.

## Test Plan

I'd like to add some tests for registries that report incorrect hashes,

but otherwise: `cargo test`