## Summary

There are a few behavior changes in here:

- We now enforce `--require-hashes` for editables, like pip. So if you
  use `--require-hashes` with an editable requirement, we'll reject it. I
  could change this if it seems off.
- We now treat source tree requirements, editable or not (e.g., both
  `-e ./black` and `./black`), as if `--refresh` is always enabled. This
  doesn't mean that we _always_ rebuild them; but if you pass
  `--reinstall`, then yes, we always rebuild them. I think this is an
  improvement and is close to how editables work today.

Closes #3844.

Closes #2695.

When parsing requirements from any source, directly parse the URL parts
(and reject unsupported URLs) instead of parsing URL parts at a later
stage. This removes a bunch of error branches and concludes the work of
parsing URL parts once and passing them around everywhere.

Many usages of the assembled `VerbatimUrl` remain, but these can be
removed incrementally.

Please review commit-by-commit.
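A minimal sketch of the parse-once idea, assuming a deliberately simplified URL grammar. The `ParsedUrl` enum and `parse_requirement_url` function below are hypothetical, not uv's types, and they ignore `#subdirectory=` fragments, percent-encoding, and revisions that contain `/`. The point is only that an unsupported URL fails when the requirement is parsed, so later stages receive an already-decomposed value and need no error branch of their own.

```rust
/// Hypothetical, simplified decomposition of a requirement URL.
#[derive(Debug, PartialEq)]
enum ParsedUrl {
    /// `file://` URL: a local archive or source tree.
    Path(String),
    /// `git+...` URL: repository plus an optional `@rev` suffix.
    Git { repository: String, rev: Option<String> },
    /// `http(s)://` URL: a remote archive.
    Archive(String),
}

/// Decomposes the URL once, rejecting anything unsupported up front.
fn parse_requirement_url(url: &str) -> Result<ParsedUrl, String> {
    if let Some(path) = url.strip_prefix("file://") {
        Ok(ParsedUrl::Path(path.to_string()))
    } else if let Some(rest) = url.strip_prefix("git+") {
        // Treat the text after the last `@` as a revision, unless it contains `/`
        // (then the `@` belonged to something like `git@github.com/...`).
        match rest.rsplit_once('@') {
            Some((repository, rev)) if !rev.contains('/') => Ok(ParsedUrl::Git {
                repository: repository.to_string(),
                rev: Some(rev.to_string()),
            }),
            _ => Ok(ParsedUrl::Git { repository: rest.to_string(), rev: None }),
        }
    } else if url.starts_with("https://") || url.starts_with("http://") {
        Ok(ParsedUrl::Archive(url.to_string()))
    } else {
        Err(format!("unsupported URL: {url}"))
    }
}
```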

## Summary

Closes https://github.com/astral-sh/uv/issues/3715.

## Test Plan

```
❯ echo "/../test" | cargo run pip compile -
error: Couldn't parse requirement in `-` at position 0
Caused by: path could not be normalized: /../test
/../test
^^^^^^^^
❯ echo "-e /../test" | cargo run pip compile -
error: Invalid URL in `-`: `/../test`
Caused by: path could not be normalized: /../test
Caused by: cannot normalize a relative path beyond the base directory
```
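For reference, here is a sketch of purely lexical path normalization that fails on `/../test` in the same way. It assumes `..` must never climb above the root (or above the start of a relative path) and touches no filesystem; `normalize_path` is a hypothetical name, not uv's implementation.

```rust
use std::path::{Component, Path, PathBuf};

/// Lexically normalizes a path: drops `.`, resolves `..` against the previous
/// component, and errors if `..` would climb above the root or the start.
fn normalize_path(path: &Path) -> Result<PathBuf, String> {
    let mut out = PathBuf::new();
    for component in path.components() {
        match component {
            Component::Prefix(_) | Component::RootDir => out.push(component.as_os_str()),
            Component::CurDir => {}
            Component::ParentDir => {
                // Only a normal component (a real directory name) can be popped.
                if !matches!(out.components().next_back(), Some(Component::Normal(_))) {
                    return Err(format!(
                        "cannot normalize a relative path beyond the base directory: {}",
                        path.display()
                    ));
                }
                out.pop();
            }
            Component::Normal(part) => out.push(part),
        }
    }
    Ok(out)
}
```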

## Summary

Uncertain about this, but we don't actually need the full
`SourceDistFilename`, only the name and version -- and we often have
that information already (as in the lockfile routines). So by flattening
the fields onto `RegistrySourceDist`, we can avoid re-parsing for
information we already have.

## Summary

I don't love this, but it turns out that setuptools is not robust to
parallel builds: https://github.com/pypa/setuptools/issues/3119. As a
result, if you run uv from multiple processes, and they each attempt to
build the same source distribution, you can hit failures.

This PR applies an advisory lock to the source distribution directory.
We apply it unconditionally, even if we ultimately find something in the
cache and _don't_ do a build, which helps ensure that we only build the
distribution once (and wait for that build to complete) rather than
kicking off builds from each thread.

Closes https://github.com/astral-sh/uv/issues/3512.
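The locking pattern (take the lock first, then check the cache, then build only if still needed) can be sketched in-process with a `Mutex`. This is an analogue under stated assumptions: the PR uses an advisory *file* lock on the source distribution directory so that separate uv processes coordinate, whereas the hypothetical `SdistCache` below only coordinates threads within a single process.

```rust
use std::collections::HashSet;
use std::sync::atomic::{AtomicUsize, Ordering};
use std::sync::{Arc, Mutex};
use std::thread;

/// Hypothetical stand-in for the cache. The mutex plays the role of the
/// advisory lock; the set records which source distributions are built.
struct SdistCache {
    lock: Mutex<HashSet<String>>,
    builds: AtomicUsize,
}

impl SdistCache {
    fn get_or_build(&self, sdist: &str) {
        // Take the lock unconditionally, even if the result turns out to be cached.
        let mut built = self.lock.lock().unwrap();
        if built.contains(sdist) {
            return; // Another worker finished the build while we waited.
        }
        // Simulated build: it only ever runs while the lock is held.
        self.builds.fetch_add(1, Ordering::SeqCst);
        built.insert(sdist.to_string());
    }
}

/// Races `workers` threads on the same source distribution; returns the build count.
fn build_from_many_workers(workers: usize) -> usize {
    let cache = Arc::new(SdistCache { lock: Mutex::new(HashSet::new()), builds: AtomicUsize::new(0) });
    let handles: Vec<_> = (0..workers)
        .map(|_| {
            let cache = Arc::clone(&cache);
            thread::spawn(move || cache.get_or_build("opentracing"))
        })
        .collect();
    for handle in handles {
        handle.join().unwrap();
    }
    cache.builds.load(Ordering::SeqCst)
}
```

With eight workers, only the first one to acquire the lock builds; the others wait and then find the result already present.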

## Test Plan

Ran:

```sh
#!/bin/bash

make_venv() {
    target/debug/uv venv "$1"
    source "$1/bin/activate"
    target/debug/uv pip install opentracing --no-deps --verbose
}

for i in {1..8}
do
    make_venv "./$1/$i" &
done

# Wait for all background jobs to finish.
wait
```

## Summary

I think this is an overall good change because it explicitly encodes (in
the type system) something that was previously implicit. I'm not a huge
fan of the names here; open to input.

It covers some of https://github.com/astral-sh/uv/issues/3506, but I
don't think it _closes_ it.

## Summary

This PR consolidates the concurrency limits used throughout `uv` and
exposes two limits, `UV_CONCURRENT_DOWNLOADS` and
`UV_CONCURRENT_BUILDS`, as environment variables.

Currently, `uv` has a number of concurrent streams that it buffers using
relatively arbitrary limits for backpressure. However, many of these
limits are conflated. We run a relatively small number of tasks overall
and should start most things as soon as possible. What we really want to
limit are three separate operations:

- File I/O. This is managed by tokio's blocking pool, and we should not
  really have to worry about it.
- Network I/O.
- Python build processes.

Because the current limits span a broad range of tasks, it's possible
that a limit meant for network I/O is occupied by tasks performing
builds, reading from the file system, or even waiting on a `OnceMap`. We
also don't limit build processes that end up being required to perform a
download. While this may not pose a performance problem because our
limits are relatively high, it does mean that the limits do not do what
we want, making it tricky to expose them to users
(https://github.com/astral-sh/uv/issues/1205,
https://github.com/astral-sh/uv/issues/3311).

After this change, the limits on network I/O and build processes are
centralized and managed by semaphores. All other tasks are unbuffered
(note that these tasks are still bounded, so backpressure should not be
a problem).
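A rough sketch of a centrally managed build limit, assuming OS threads rather than uv's async tasks. The standard library has no semaphore, so the hypothetical `Semaphore` below is assembled from `Mutex` and `Condvar`, and `limit_from_env` reads a variable such as `UV_CONCURRENT_BUILDS` with a caller-chosen fallback (uv's actual default is not stated here).

```rust
use std::sync::atomic::{AtomicUsize, Ordering};
use std::sync::{Arc, Condvar, Mutex};
use std::thread;
use std::time::Duration;

/// A minimal counting semaphore built from a mutex-protected counter.
struct Semaphore {
    permits: Mutex<usize>,
    available: Condvar,
}

impl Semaphore {
    fn new(permits: usize) -> Self {
        Semaphore { permits: Mutex::new(permits), available: Condvar::new() }
    }

    fn acquire(&self) {
        let mut permits = self.permits.lock().unwrap();
        while *permits == 0 {
            permits = self.available.wait(permits).unwrap();
        }
        *permits -= 1;
    }

    fn release(&self) {
        *self.permits.lock().unwrap() += 1;
        self.available.notify_one();
    }
}

/// Reads a positive integer limit from `var`, falling back to `default`.
fn limit_from_env(var: &str, default: usize) -> usize {
    std::env::var(var)
        .ok()
        .and_then(|value| value.parse::<usize>().ok())
        .filter(|&n| n > 0)
        .unwrap_or(default)
}

/// Runs `tasks` simulated builds under `limit` permits; returns the peak concurrency seen.
fn run_builds(tasks: usize, limit: usize) -> usize {
    let semaphore = Arc::new(Semaphore::new(limit));
    let running = Arc::new(AtomicUsize::new(0));
    let peak = Arc::new(AtomicUsize::new(0));
    let handles: Vec<_> = (0..tasks)
        .map(|_| {
            let semaphore = Arc::clone(&semaphore);
            let running = Arc::clone(&running);
            let peak = Arc::clone(&peak);
            thread::spawn(move || {
                semaphore.acquire();
                // Record how many simulated builds hold a permit right now.
                let now = running.fetch_add(1, Ordering::SeqCst) + 1;
                peak.fetch_max(now, Ordering::SeqCst);
                thread::sleep(Duration::from_millis(5));
                running.fetch_sub(1, Ordering::SeqCst);
                semaphore.release();
            })
        })
        .collect();
    for handle in handles {
        handle.join().unwrap();
    }
    peak.load(Ordering::SeqCst)
}
```

Only the work that should be limited (network I/O, build processes) would go through `acquire` and `release`; everything else runs unbuffered.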

## Summary

All of the resolver code is run on the main thread, so a lot of the
`Send` bounds and uses of `DashMap` and `Arc` are unnecessary. We could
also switch to using single-threaded versions of `Mutex` and `Notify` in
some places, but there isn't really a crate that provides those that I
would be comfortable using.

The `Arc` in `OnceMap` can't easily be removed because of the uv-auth
code, which uses the
[reqwest-middleware](https://docs.rs/reqwest-middleware/latest/reqwest_middleware/trait.Middleware.html)
crate; that crate seems to add unnecessary `Send` bounds because of
`async-trait`. We could duplicate the code and create a `OnceMapLocal`
variant, but I don't feel that's worth it.

## Introduction

PEP 621 is limited. Specifically, it lacks:

* Relative path support
* Editable support
* Workspace support
* Index pinning or any sort of index specification

The semantics of URLs are a custom extension: PEP 440 does not specify
how to use git references or subdirectories; instead, pip has a custom
stringly-typed format. We need to somehow support these while still
staying compatible with PEP 621.

## `tool.uv.sources`

Drawing inspiration from cargo, poetry and rye, we add `tool.uv.sources`
and (for now, as a stub only) `tool.uv.workspace`:

```toml
[project]
name = "albatross"
version = "0.1.0"
dependencies = [
  "tqdm >=4.66.2,<5",
  "torch ==2.2.2",
  "transformers[torch] >=4.39.3,<5",
  "importlib_metadata >=7.1.0,<8; python_version < '3.10'",
  "mollymawk ==0.1.0"
]

[tool.uv.sources]
tqdm = { git = "https://github.com/tqdm/tqdm", rev = "cc372d09dcd5a5eabdc6ed4cf365bdb0be004d44" }
importlib_metadata = { url = "https://github.com/python/importlib_metadata/archive/refs/tags/v7.1.0.zip" }
torch = { index = "torch-cu118" }
mollymawk = { workspace = true }

[tool.uv.workspace]
include = [
  "packages/mollymawk"
]

[tool.uv.indexes]
torch-cu118 = "https://download.pytorch.org/whl/cu118"
```

See `docs/specifying_dependencies.md` for a detailed explanation of the
format. The basic gist is that `project.dependencies` is what ends up on
PyPI, while `tool.uv.sources` are your non-published additions. We do
support the full range of PEP 508; we just hide it in the docs and
prefer the exploded table for easier readability and less confusion with
actual URL parts.
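To make the lowering step concrete, here is a sketch that combines one `project.dependencies` entry with an optional `tool.uv.sources` entry. The `Source` and `RequirementSource` enums and the `lower` function are hypothetical simplifications (not the `UvRequirement` type described under Implementation), and they assume the PEP 508 string has already been split into a name and a version specifier. Index pinning and workspaces are only stubbed in this PR, so those arms merely record the request.

```rust
use std::collections::HashMap;

/// Hypothetical, simplified model of one `tool.uv.sources` entry.
enum Source {
    Git { git: String, rev: Option<String> },
    Url { url: String },
    Path { path: String, editable: bool },
    Registry { index: String },
    Workspace,
}

/// Hypothetical lowered requirement: where the package should come from.
#[derive(Debug, PartialEq)]
enum RequirementSource {
    Registry { specifier: String, index: Option<String> },
    Git { repository: String, rev: Option<String> },
    Url { url: String },
    Path { path: String, editable: bool },
    Workspace,
}

/// Lowers a dependency (name + specifier) using the matching sources entry, if any.
fn lower(name: &str, specifier: &str, sources: &HashMap<String, Source>) -> RequirementSource {
    match sources.get(name) {
        // No entry: a plain registry requirement, exactly as published.
        None => RequirementSource::Registry { specifier: specifier.to_string(), index: None },
        Some(Source::Registry { index }) => RequirementSource::Registry {
            specifier: specifier.to_string(),
            index: Some(index.clone()),
        },
        Some(Source::Git { git, rev }) => RequirementSource::Git { repository: git.clone(), rev: rev.clone() },
        Some(Source::Url { url }) => RequirementSource::Url { url: url.clone() },
        Some(Source::Path { path, editable }) => RequirementSource::Path { path: path.clone(), editable: *editable },
        Some(Source::Workspace) => RequirementSource::Workspace,
    }
}
```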

This format should eventually be able to subsume requirements.txt's
current use cases. While we will continue to support the legacy `uv pip`
interface, this is a piece of uv's own top-level interface. Together
with `uv run` and a lockfile format, you should only need to write
`pyproject.toml` and do `uv run`, which generates/uses/updates your
lockfile behind the scenes, with no more pip-style requirements involved.
It also lays the groundwork for implementing index pinning.

## Changes

This PR implements:

* Reading and lowering `project.dependencies`,

`project.optional-dependencies` and `tool.uv.sources` into a new

requirements format, including:

* Git dependencies

* Url dependencies

* Path dependencies, including relative and editable

* `pip install` integration

* Error reporting for invalid `tool.uv.sources`

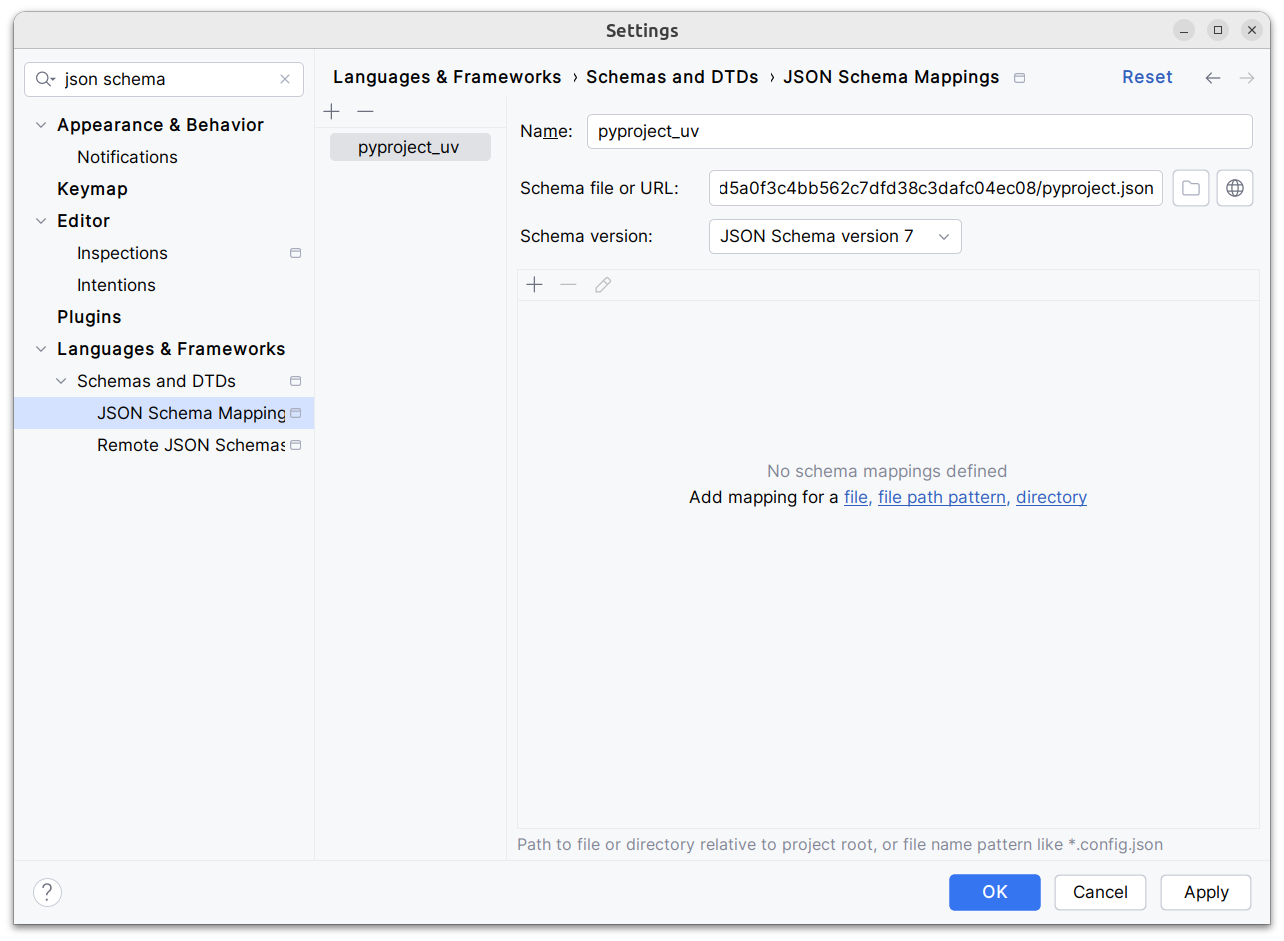

* Json schema integration (works in pycharm, see below)

* Draft user-level docs (see `docs/specifying_dependencies.md`)

It does not implement:

* No `pip compile` testing, deprioritizing towards our own lockfile

* Index pinning (stub definitions only)

* Development dependencies

* Workspace support (stub definitions only)

* Overrides in pyproject.toml

* Patching/replacing dependencies

One technically breaking change is that we now require a user-provided
pyproject.toml to be valid with respect to PEP 621. Included files still
fall back to PEP 517. That means `pip install -r pyproject.toml` requires
it to be valid, while `pip install -r requirements.txt` with `-e .` as
content falls back to PEP 517 as before.

## Implementation

The `pep508` requirement is replaced by a new `UvRequirement` (name up
for bikeshedding; not particularly attached to the uv prefix). The
still-existing `pep508_rs::Requirement` type is a URL format copied from
pip's requirements.txt and doesn't appropriately capture all features we
want/need to support. The bulk of the diff is changing the requirement
type throughout the codebase.

We still use `VerbatimUrl` in many places where we would expect a
parsed/decomposed URL type, specifically:

* Reading core metadata (except top-level pyproject.toml files): we fail
  a step later instead if the URL isn't supported.
* Allowed `Urls`.
* `PackageId` with a custom `CanonicalUrl` comparison, instead of
  canonicalizing URLs eagerly.
* `PubGrubPackage`: we eventually convert the `VerbatimUrl` back to a
  `Dist` (`Dist::from_url`) instead of remembering the URL.
* Source dist types: we use a verbatim URL even though we know (and
  require) that these are supported URLs that we can parse and have parsed.

I tried to improve the situation by replacing `VerbatimUrl`, but that
would require massive, invasive changes (see e.g.
https://github.com/astral-sh/uv/pull/3253). A main problem is the
`VersionOrUrl` type and applying overrides, which assume the same
requirement/URL type everywhere. In its current form, this PR increases
this tech debt.

I've tried to split off PRs and commits, but the main refactoring is
still a single monolithic commit to make it compile and the tests pass.

## Demo

Adding
d1ae3b85d5/pyproject.json
as JSON schema (v7) to PyCharm for `pyproject.toml`, you can try the IDE
support already:

[dove.webm](https://github.com/astral-sh/uv/assets/6826232/c293c272-c80b-459d-8c95-8c46a8d198a1)

## Summary

This PR formalizes some of the concepts we use in the cache for
"pointers to things".

In the wheel cache, we have files like
`annotated_types-0.6.0-py3-none-any.http`. This represents an unzipped
wheel, cached alongside an HTTP caching policy. We now have a struct for
this to encapsulate the logic: `HttpArchivePointer`.

Similarly, we have files like `annotated_types-0.6.0-py3-none-any.rev`.
This represents an unzipped local wheel, alongside a timestamp. We
now have a struct for this to encapsulate the logic:
`LocalArchivePointer`.

We have similar structs for source distributions too.
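A sketch of what the `.rev` pointer logic might look like, assuming the pointer stores an archive identifier plus the local wheel's modification time. The field names and the `archive_if_fresh` method are hypothetical; the HTTP variant would consult its caching policy instead of a timestamp.

```rust
use std::time::SystemTime;

/// Hypothetical, simplified `.rev` pointer: which unzipped archive in the cache
/// holds the wheel, and the wheel's modification time when it was unzipped.
struct LocalArchivePointer {
    archive_id: String,
    timestamp: SystemTime,
}

impl LocalArchivePointer {
    /// Returns the cached archive only if the wheel on disk hasn't changed since
    /// the pointer was written; otherwise the caller should unzip it again.
    fn archive_if_fresh(&self, current_modified: SystemTime) -> Option<&str> {
        (self.timestamp == current_modified).then(|| self.archive_id.as_str())
    }
}
```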

## Summary

This PR enables hash generation for URL requirements when the user
provides `--generate-hashes` to `pip compile`. While we already include
hashes from the registry, we currently omit hashes for URLs.

To power hash generation, we introduce a `HashPolicy` abstraction:

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum HashPolicy<'a> {
    /// No hash policy is specified.
    None,
    /// Hashes should be generated (specifically, a SHA-256 hash), but not validated.
    Generate,
    /// Hashes should be validated against a pre-defined list of hashes. If necessary, hashes should
    /// be generated so as to ensure that the archive is valid.
    Validate(&'a [HashDigest]),
}
```

All of the methods on the distribution database now accept this policy,
instead of accepting `&'a [HashDigest]`.

Closes #2378.
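The sketch below shows how a caller might branch on such a policy. It repeats the enum (doc comments trimmed) so the block compiles on its own and assumes a hypothetical `HashDigest` newtype holding an `algorithm:digest` string; `should_hash` and `check` are illustrative helpers, not methods from the PR.

```rust
/// Hypothetical stand-in for uv's `HashDigest`, e.g. "sha256:...".
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct HashDigest(String);

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum HashPolicy<'a> {
    None,
    Generate,
    Validate(&'a [HashDigest]),
}

impl HashPolicy<'_> {
    /// Under both `Generate` and `Validate`, the archive must be hashed as it is read.
    fn should_hash(&self) -> bool {
        !matches!(self, HashPolicy::None)
    }
}

/// Checks a computed hash (if any) against the policy.
fn check(policy: HashPolicy<'_>, computed: Option<&HashDigest>) -> Result<(), String> {
    match policy {
        // Nothing to validate: either we didn't hash, or we only record the hash.
        HashPolicy::None | HashPolicy::Generate => Ok(()),
        HashPolicy::Validate(expected) => match computed {
            Some(digest) if expected.contains(digest) => Ok(()),
            Some(digest) => Err(format!("hash mismatch: {}", digest.0)),
            None => Err("no hash was computed".to_string()),
        },
    }
}
```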

## Summary

This PR modifies the distribution database to return both the
`Metadata23` and the computed hashes when clients request metadata.

No behavior changes, but this will be necessary to power
`--generate-hashes`.

## Summary

This PR adds support for hash-checking mode in `pip install` and
`pip sync`. It's a large change, both in terms of the size of the diff and
the modifications in behavior, but it's also one that's hard to merge in
pieces (at least, with any test coverage) since it needs to work
end-to-end to be useful and testable.

Here are some of the most important highlights:

- We store hashes in the cache. Where we previously stored pointers to
  unzipped wheels in the `archives` directory, we now store pointers with
  a set of known hashes. So every pointer to an unzipped wheel also
  includes its known hashes.
- By default, we don't compute any hashes. If the user runs with
  `--require-hashes`, and the cache doesn't contain those hashes, we
  invalidate the cache, redownload the wheel, and compute the hashes as we
  go. For users that don't run with `--require-hashes`, there will be no
  change in performance. For users that _do_, the only change will be if
  they don't run with `--generate-hashes` -- then they may see some
  repeated work between resolution and installation, if they use
  `pip compile` then `pip sync`.
- Many of the distribution types now include a `hashes` field, like
  `CachedDist` and `LocalWheel`.
- Our behavior is similar to pip, in that we enforce hashes when pulling
  any remote distributions, and when pulling from our own cache. Like pip,
  though, we _don't_ enforce hashes if a distribution is _already_
  installed.
- Hash validity is enforced in a few different places:
  1. During resolution, we enforce hash validity based on the hashes
     reported by the registry. If we need to access a source distribution,
     though, we then enforce hash validity at that point too, prior to
     running any untrusted code. (This is enforced in the distribution
     database.)
  2. In the install plan, we _only_ add cached distributions that have
     matching hashes. If a cached distribution is missing any hashes, or the
     hashes don't match, we don't return it from the install plan.
  3. In the downloader, we _only_ return distributions with matching
     hashes.
  4. The final combination of "things we install" is: (1) the wheels from
     the cache, and (2) the downloaded wheels. So this ensures that we never
     install any mismatching distributions.
- Like pip, if `--require-hashes` is provided, we require that _all_
  distributions are pinned with either `==` or a direct URL. We also
  require that _all_ distributions have hashes.
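The two `--require-hashes` rules from the last bullet can be sketched as a per-requirement check. The `Requirement` struct here is a hypothetical simplification (a raw specifier string, an optional URL, and a list of hashes); a real implementation would inspect parsed PEP 440 specifiers rather than string prefixes.

```rust
/// Hypothetical, simplified requirement as read from a requirements file.
struct Requirement {
    name: String,
    specifier: Option<String>,
    url: Option<String>,
    hashes: Vec<String>,
}

/// Applies the `--require-hashes` rules to a single requirement.
fn check_require_hashes(req: &Requirement) -> Result<(), String> {
    // Rule 1: pinned with a single `==` specifier, or given as a direct URL.
    let pinned_version = req
        .specifier
        .as_deref()
        .map_or(false, |s| s.starts_with("==") && !s.contains(','));
    if !pinned_version && req.url.is_none() {
        return Err(format!("`{}` must be pinned with `==` or a direct URL", req.name));
    }
    // Rule 2: every requirement carries at least one hash.
    if req.hashes.is_empty() {
        return Err(format!("`{}` is missing a hash", req.name));
    }
    Ok(())
}
```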

There are a few notable TODOs:

- We don't support hash-checking mode for unnamed requirements. These
  should be _somewhat_ rare, though, since `pip compile` never outputs
  unnamed requirements. I can fix this; it's just some additional work.
- We don't automatically enable `--require-hashes` when a hash exists in
  the requirements file. We require `--require-hashes`.

Closes #474.

## Test Plan

I'd like to add some tests for registries that report incorrect hashes,
but otherwise: `cargo test`

To get more insights into test performance, allow instrumenting tests
with tracing-durations-export.

Usage:

```shell
# A single test
TRACING_DURATIONS_TEST_ROOT=$(pwd)/target/test-traces cargo test --features tracing-durations-export --test pip_install_scenarios no_binary -- --exact
# All tests
TRACING_DURATIONS_TEST_ROOT=$(pwd)/target/test-traces cargo nextest run --features tracing-durations-export
```

Then we can e.g. look at
`target/test-traces/pip_install_scenarios::no_binary.svg` and see the
builds it performs:

## Summary

If we build a source distribution from the registry, and the version
doesn't match that of the filename, we should error, just as we do for
mismatched package names. However, we should also backtrack here, which
we didn't previously.

Closes https://github.com/astral-sh/uv/issues/2953.

## Test Plan

Verified that `cargo run pip install docutils --verbose --no-cache --reinstall`
installs `docutils==0.21` instead of the invalid
`docutils==0.21.post1`.

In the logs, I see:

```
WARN Unable to extract metadata for docutils: Package metadata version `0.21` does not match given version `0.21.post1`
```
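A sketch of the intended behavior that reduces resolver backtracking to "reject this candidate and try the next one". The `Candidate` struct and `pick_version` are hypothetical, and versions are compared as plain strings here rather than as parsed PEP 440 versions. In the docutils case above, the `0.21.post1` sdist is skipped and `0.21` is chosen.

```rust
/// Hypothetical candidate: the version in the sdist filename, and the
/// version reported by the metadata after building it.
struct Candidate {
    filename_version: &'static str,
    metadata_version: &'static str,
}

/// Tries candidates in order (newest first). A mismatched candidate is
/// reported and skipped instead of failing the whole resolution.
fn pick_version(candidates: &[Candidate]) -> Option<&'static str> {
    for candidate in candidates {
        if candidate.metadata_version != candidate.filename_version {
            eprintln!(
                "WARN Package metadata version `{}` does not match given version `{}`",
                candidate.metadata_version, candidate.filename_version
            );
            continue; // Backtrack to the next candidate.
        }
        return Some(candidate.filename_version);
    }
    None
}
```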

Needed to prevent circular dependencies in my toolchain work (#2931). I
think this is probably a reasonable change as we move towards persistent
configuration too?

Unfortunately, `BuildIsolation` still needs to be in `uv-types` to avoid
circular dependencies. We might be able to resolve that in the
future.

## Summary

When you specify a source distribution via a path, it can either be a
path to an archive (like a `.tar.gz` file), or a source tree (a
directory). Right now, we handle both paths through the same methods in
the source database. This PR splits them up into separate handlers.

This will make hash generation a little easier, since we need to
generate hashes for archives, but _can't_ generate hashes for source
trees.

It also means that we can now store the unzipped source distribution in
the cache (in the case of archives), and avoid unzipping the source
distribution needlessly on every invocation; and, overall, it lets us
enforce clearer expectations between the two routes (e.g., what errors
are possible vs. not), at the cost of duplicating some code.

Closes #2760 (incidentally -- not exactly the motivation for the change,
but it did accomplish it).
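A sketch of the split, assuming the only input is the path on disk: directories go to the source-tree handler, everything else goes to the archive handler, and only the archive route can produce a hash. `LocalSource`, `classify`, and `can_generate_hashes` are hypothetical names.

```rust
use std::path::Path;

/// Which handler a local source distribution path is routed to.
#[derive(Debug, PartialEq)]
enum LocalSource {
    /// An archive such as `.tar.gz` or `.zip`: hashable, and unzipped once into the cache.
    Archive,
    /// A directory: there is no single file to hash.
    SourceTree,
}

fn classify(path: &Path) -> LocalSource {
    if path.is_dir() {
        LocalSource::SourceTree
    } else {
        LocalSource::Archive
    }
}

/// Hash generation only makes sense on the archive route.
fn can_generate_hashes(source: &LocalSource) -> bool {
    matches!(source, LocalSource::Archive)
}
```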

## Summary

I think this is a much clearer name for this concept: the set of
"versions" of a given wheel or source distribution. We also use
"Manifest" elsewhere to refer to the set of requirements, constraints,
etc., so this was overloaded.

## Summary

Upgrading `rs-async-zip` enables us to support data descriptors in
streaming. This both greatly improves performance for indexes that use
data descriptors _and_ ensures that we support them in a few other
places (e.g., zipped source distributions created in Finder).

Closes #2808.

## Summary

Rather than storing the `redirects` on the resolver, this PR just
re-uses the "convert this URL to precise" logic when we convert to a
`Resolution` after-the-fact. I think this is a lot simpler: it removes
state from the resolver, and simplifies a lot of the hooks around
distribution fetching (e.g., `get_or_build_wheel_metadata` no longer
returns `(Metadata23, Option<Url>)`).

## Summary

Now that we're resolving metadata more aggressively for local sources,
it's worth doing this. We now pull metadata from the `pyproject.toml`
directly if it's statically-defined.

Closes https://github.com/astral-sh/uv/issues/2629.

This is driving me a little crazy and is becoming a larger problem in
#2596 where I need to move more types (like `Upgrade` and `Reinstall`)
into this crate. Anything that's shared across our core resolver,
install, and build crates needs to be defined in this crate to avoid
cyclic dependencies. We've outgrown it being a single file with some
shared traits.

There are no behavioral changes here.

## Summary

`uv` was failing to install requirements defined like:

```
file://localhost/Users/crmarsh/Downloads/iniconfig-2.0.0-py3-none-any.whl
```

Closes https://github.com/astral-sh/uv/issues/2652.
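A sketch of a conversion that accepts this form, using a hand-rolled parser for illustration only. RFC 8089 treats an empty host and `localhost` alike as the local machine, so both `file:///path` and `file://localhost/path` should map to the same local path. `file_url_to_path` is hypothetical, and percent-decoding and Windows drive letters are left out.

```rust
/// Converts a `file:` URL to a local path string, accepting an empty host or
/// `localhost`; any other host is rejected.
fn file_url_to_path(url: &str) -> Option<&str> {
    let rest = url.strip_prefix("file://")?;
    // What remains is `host/path`; split at the first `/`.
    let slash = rest.find('/')?;
    let (host, path) = rest.split_at(slash);
    if host.is_empty() || host.eq_ignore_ascii_case("localhost") {
        Some(path)
    } else {
        None
    }
}
```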

## Summary

This PR enables the source distribution database to be used with unnamed
requirements (i.e., URLs without a package name). The (significant)
upside here is that we can now use PEP 517 hooks to resolve unnamed
requirement metadata _and_ reuse any computation in the cache.

The changes to `crates/uv-distribution/src/source/mod.rs` are quite
extensive, but mostly mechanical. The core idea is that we introduce a
new `BuildableSource` abstraction, which can either be a distribution,
or an unnamed URL:

```rust
/// A reference to a source that can be built into a built distribution.
///
/// This can either be a distribution (e.g., a package on a registry) or a direct URL.
///
/// Distributions can _also_ point to URLs in lieu of a registry; however, the primary distinction
/// here is that a distribution will always include a package name, while a URL will not.
#[derive(Debug, Clone, Copy)]
pub enum BuildableSource<'a> {
    Dist(&'a SourceDist),
    Url(SourceUrl<'a>),
}
```

All the methods on the source distribution database now accept
`BuildableSource`. `BuildableSource` has a `name()` method, but it
returns `Option<&PackageName>`, and everything is required to work with
and without a package name.
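A sketch of how `name()` can return an `Option`, using hypothetical one-field stand-ins for `SourceDist` and `SourceUrl` so the block compiles on its own (and a plain `&str` in place of `PackageName`). Only the shape of `name()` mirrors the description above.

```rust
/// Hypothetical one-field stand-ins for uv's `SourceDist` and `SourceUrl`.
pub struct SourceDist {
    pub name: String,
}

pub struct SourceUrl<'a> {
    pub url: &'a str,
}

pub enum BuildableSource<'a> {
    Dist(&'a SourceDist),
    Url(SourceUrl<'a>),
}

impl BuildableSource<'_> {
    /// Only distributions carry a package name; unnamed URLs return `None`.
    pub fn name(&self) -> Option<&str> {
        match self {
            BuildableSource::Dist(dist) => Some(dist.name.as_str()),
            BuildableSource::Url(_) => None,
        }
    }
}
```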

The main drawback of this approach (which isn't a terrible one) is that
we can no longer include the package name in the cache. (We do continue
to use the package name for registry-based distributions, since those
always have a name.) The package name was included in the cache route
for two reasons: (1) it's nice for debugging; and (2) we use it to power
`uv cache clean flask`, to identify the entries that are relevant for
Flask.

To solve this, I changed the `uv cache clean` code to look one level
deeper. So, when we want to determine whether to remove the cache entry
for a given URL, we now look into the directory to see if there are any
wheels that match the package name. This isn't as nice, but it does work
(and we have test coverage for it -- all passing).
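A sketch of that deeper check, assuming the cache entry's directory listing has already been read into a list of filenames. An entry is relevant to a package if any wheel inside it has a matching distribution name after PEP 503 normalization. `normalize` and `entry_matches` are hypothetical helpers.

```rust
/// PEP 503 normalization: lowercase, with runs of `-`, `_`, `.` collapsed to `-`.
fn normalize(name: &str) -> String {
    let mut out = String::new();
    let mut last_was_separator = false;
    for c in name.chars() {
        if c == '-' || c == '_' || c == '.' {
            if !last_was_separator {
                out.push('-');
            }
            last_was_separator = true;
        } else {
            out.push(c.to_ascii_lowercase());
            last_was_separator = false;
        }
    }
    out
}

/// Returns true if any wheel in the entry belongs to `package`. Wheel filenames
/// start with `{distribution}-{version}-`, and the distribution part escapes `-`
/// as `_`, so the text before the first `-` is the package name.
fn entry_matches(wheel_filenames: &[&str], package: &str) -> bool {
    let target = normalize(package);
    wheel_filenames.iter().any(|filename| {
        filename.ends_with(".whl")
            && filename.split('-').next().map(normalize).as_deref() == Some(target.as_str())
    })
}
```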

I also considered removing the package name from the cache routes for
non-registry _wheels_, for consistency... But, it would require a cache
bump, and it didn't feel important enough to merit that.

## Summary

Some zip files can't be streamed; in particular, `rs-async-zip` doesn't
support data descriptors right now (though it may in the future). This
PR adds a fallback path for such zips that downloads the entire zip file
to disk, then unzips it from disk (which gives us `Seek`).

Closes https://github.com/astral-sh/uv/issues/2216.

## Test Plan

`cargo run pip install --extra-index-url https://buf.build/gen/python hashb_foxglove_protocolbuffers_python==25.3.0.1.20240226043130+465630478360 --force-reinstall -n`

## Summary

PyPI now supports Metadata 2.2, which means distributions with Metadata
2.2-compliant metadata will start to appear. The upside is that if a
source distribution includes a `PKG-INFO` file with (1) a metadata
version of 2.2 or greater, and (2) no dynamic fields (at least, of the
fields we rely on), we can read the metadata from the `PKG-INFO` file
directly rather than running _any_ of the PEP 517 build hooks.

Closes https://github.com/astral-sh/uv/issues/2009.
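A sketch of the two conditions, assuming `PKG-INFO` headers are simple `Key: value` lines that end at the first blank line. Which fields count as "fields we rely on" is passed in by the caller; the list used in the check below is an assumption, not the PR's exact list. `pkg_info_is_static` is a hypothetical name.

```rust
/// Returns true if `PKG-INFO` declares Metadata-Version >= 2.2 and marks none
/// of the `relied_on` fields as `Dynamic`.
fn pkg_info_is_static(pkg_info: &str, relied_on: &[&str]) -> bool {
    let mut version: Option<(u32, u32)> = None;
    let mut dynamic: Vec<String> = Vec::new();
    for line in pkg_info.lines() {
        // Headers end at the first blank line; the long description follows.
        if line.is_empty() {
            break;
        }
        if let Some((key, value)) = line.split_once(':') {
            let value = value.trim();
            if key.eq_ignore_ascii_case("Metadata-Version") {
                let mut parts = value.split('.').map(|p| p.parse::<u32>().ok());
                if let (Some(Some(major)), Some(Some(minor))) = (parts.next(), parts.next()) {
                    version = Some((major, minor));
                }
            } else if key.eq_ignore_ascii_case("Dynamic") {
                dynamic.push(value.to_ascii_lowercase());
            }
        }
    }
    let new_enough = matches!(version, Some(v) if v >= (2, 2));
    new_enough && relied_on.iter().all(|field| !dynamic.contains(&field.to_ascii_lowercase()))
}
```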

## Summary

If a user provides a source distribution via a direct path, it can
either be an archive (like a `.tar.gz` or `.zip` file) or a directory.
If the former, we need to extract (e.g., unzip) the contents at some
point. Previously, this extraction was in `uv-build`; this PR lifts it
up to the distribution database.

The first benefit here is that various methods that take the
distribution are now simpler, as they can assume a directory.

The second benefit is that we no longer extract _multiple times_ when
working with a source distribution. (Previously, if we tried to get the
metadata, then fell back and built the wheel, we'd extract the source
distribution _twice_.)

## Summary

This PR adds support for pip's `--no-build-isolation`. When enabled,
build requirements won't be installed during PEP 517-style builds, but
the source environment _will_ be used when executing the build steps
themselves.

Closes https://github.com/astral-sh/uv/issues/1715.

Address a few pedantic lints.

Each lint is in its own commit so they can be reviewed individually.

I've not added enforcement for any of these lints, but that could be
added if desirable.

## Summary#1562

It turns out that `hexdump` uses an invalid source distribution format
whereby the contents aren't nested in a top-level directory; instead,
they're all just flattened at the top level. Looking at pip's source
(51de88ca64/src/pip/_internal/utils/unpacking.py (L62)),
pip only strips the top-level directory if all entries have the same
directory prefix (i.e., if it's the only thing in the directory). This
PR accommodates these "invalid" distributions.
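A sketch of that rule (strip the first path component only when every entry shares it), written against a plain list of archive entry paths. `common_top_level` is a hypothetical name, and the sketch paraphrases the behavior of the pip function linked above rather than reproducing uv's code.

```rust
/// Returns the top-level directory to strip if every entry lives under the
/// same first path component; flat archives return `None` and are used as-is.
fn common_top_level(entries: &[&str]) -> Option<String> {
    let mut common: Option<&str> = None;
    for &entry in entries {
        // The first path component (`split` always yields at least one piece).
        let first = entry.trim_start_matches('/').split('/').next().unwrap_or("");
        if first.is_empty() {
            return None;
        }
        match common {
            None => common = Some(first),
            Some(prefix) if prefix == first => {}
            Some(_) => return None, // Entries disagree: the archive is flat.
        }
    }
    common.map(str::to_string)
}
```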

I can't find any history on this method in `pip`. It looks like it dates
back more than 15 years, to before `pip` was even called `pip`.

Closes https://github.com/astral-sh/uv/issues/1376.

First, replace all usages in files in-place. I used my editor for this.
If someone wants to add a one-liner, that'd be fun.

Then, update directory and file names:

```
# Run twice for nested directories
find . -type d -print0 | xargs -0 rename s/puffin/uv/g
find . -type d -print0 | xargs -0 rename s/puffin/uv/g
# Update files
find . -type f -print0 | xargs -0 rename s/puffin/uv/g
```

Then add all the files again

```
# Add all the files again
git add crates
git add python/uv
# This one needs a force-add
git add -f crates/uv-trampoline
```