## Summary

This PR exposes uv's PEP 517 implementation via a `uv build` frontend,
such that you can use `uv build` to build source and binary
distributions (i.e., sdists and wheels) from a given directory.

There are some TODOs that I'll tackle in separate PRs:

- [x] Support building a wheel from a source distribution (rather than
  from source) (#6898)
- [x] Stream the build output (#6912)

Closes https://github.com/astral-sh/uv/issues/1510

Closes https://github.com/astral-sh/uv/issues/1663.

Most times we compile with `cargo build`, we don't actually need
`uv-dev`. By making `uv-dev` dependent on a new `dev` feature, it
doesn't get built by default anymore, but only when passing
`--features dev`.

Hopefully a small improvement for compile times or at least system load.

Whew this is a lot.

The user-facing changes are:

- `uv toolchain` to `uv python`, e.g. `uv python find`,
  `uv python install`, ...
- `UV_TOOLCHAIN_DIR` to `UV_PYTHON_INSTALL_DIR`
- `<UV_STATE_DIR>/toolchains` to `<UV_STATE_DIR>/python` (with
  [automatic migration](https://github.com/astral-sh/uv/pull/4735/files#r1663029330))
- User-facing messages no longer refer to toolchains, instead using
  "Python", "Python versions" or "Python installations"

The internal changes are:

- `uv-toolchain` crate to `uv-python`

- `Toolchain` no longer referenced in type names

- Dropped unused `SystemPython` type (previously replaced)

- Clarified the type names for "managed Python installations"

- (more little things)

## Summary

In a workspace, we now read configuration from the workspace root.

Previously, we read configuration from the first `pyproject.toml` or

`uv.toml` file in path -- but in a workspace, that would often be the

_project_ rather than the workspace configuration.

We need to read configuration from the workspace root, rather than its

members, because we lock the workspace globally, so all configuration

applies to the workspace globally.

As part of this change, the `uv-workspace` crate has been renamed to

`uv-settings` and its purpose has been narrowed significantly (it no

longer discovers a workspace; instead, it just reads the settings from a

directory).

If a user has a `uv.toml` in their directory or in a parent directory

but is _not_ in a workspace, we will still respect that use-case as

before.
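A minimal sketch of that lookup order, assuming two hypothetical helpers (`find_settings_dir` and `settings_dir` are illustrative names, not the functions in `uv-settings`). It only checks that a file exists and does not inspect whether `pyproject.toml` actually contains uv configuration:

```rust
use std::path::{Path, PathBuf};

/// Hypothetical helper: the nearest directory at or above `start` that
/// contains a `uv.toml` or `pyproject.toml` file.
fn find_settings_dir(start: &Path) -> Option<PathBuf> {
    start
        .ancestors()
        .find(|dir| dir.join("uv.toml").is_file() || dir.join("pyproject.toml").is_file())
        .map(Path::to_path_buf)
}

/// Hypothetical helper: prefer the workspace root when one is known,
/// otherwise fall back to the nearest settings file, as before.
fn settings_dir(workspace_root: Option<&Path>, cwd: &Path) -> Option<PathBuf> {
    match workspace_root {
        Some(root) => Some(root.to_path_buf()),
        None => find_settings_dir(cwd),
    }
}
```

With a workspace root, member-level files are ignored entirely; without one, the search walks up from the current directory.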

Closes #4249.

Extends https://github.com/astral-sh/uv/pull/4121

Part of #2607

Adds support for managed toolchain fetching to `uv venv`, e.g.

```
❯ cargo run -q -- venv --python 3.9.18 --preview -v
DEBUG Searching for Python 3.9.18 in search path or managed toolchains
DEBUG Searching for managed toolchains at `/Users/zb/Library/Application Support/uv/toolchains`
DEBUG Found CPython 3.12.3 at `/opt/homebrew/bin/python3` (search path)
DEBUG Found CPython 3.9.6 at `/usr/bin/python3` (search path)
DEBUG Found CPython 3.12.3 at `/opt/homebrew/bin/python3` (search path)
DEBUG Requested Python not found, checking for available download...
DEBUG Using registry request timeout of 30s
INFO Fetching requested toolchain...
DEBUG Downloading https://github.com/indygreg/python-build-standalone/releases/download/20240224/cpython-3.9.18%2B20240224-aarch64-apple-darwin-pgo%2Blto-full.tar.zst to temporary location /Users/zb/Library/Application Support/uv/toolchains/.tmpgohKwp
DEBUG Extracting cpython-3.9.18%2B20240224-aarch64-apple-darwin-pgo%2Blto-full.tar.zst
DEBUG Moving /Users/zb/Library/Application Support/uv/toolchains/.tmpgohKwp/python to /Users/zb/Library/Application Support/uv/toolchains/cpython-3.9.18-macos-aarch64-none
Using Python 3.9.18 interpreter at: /Users/zb/Library/Application Support/uv/toolchains/cpython-3.9.18-macos-aarch64-none/install/bin/python3
Creating virtualenv at: .venv
INFO Removing existing directory
Activate with: source .venv/bin/activate
```

The preview flag is required. The fetch is performed if we can't find an
interpreter that satisfies the request. Once fetched, the toolchain will
be available for later invocations that include the `--preview` flag.

There will be follow-ups to improve toolchain management in general, as
there is still outstanding work from the initial implementation.

## Summary

This PR replaces the static resolver map:

```rust
static RESOLVED_GIT_REFS: Lazy<Mutex<FxHashMap<RepositoryReference, GitSha>>> =
    Lazy::new(Mutex::default);
```

with a `GitResolver` struct that we now pass around on the
`BuildContext`. There should be no behavior changes here; it's purely an
internal refactor with an eye towards making it cleaner for us to
"pre-populate" the list of resolved SHAs.

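To illustrate the shape of that refactor, here is a rough sketch using only the standard library. The `RepositoryReference` and `GitSha` definitions below are simplified stand-ins, and the `insert`/`get` methods are assumptions about the interface rather than the actual API:

```rust
use std::collections::HashMap;
use std::sync::{Arc, Mutex};

/// Simplified stand-in for the real `RepositoryReference` type.
#[derive(Clone, Debug, PartialEq, Eq, Hash)]
struct RepositoryReference {
    url: String,
    reference: String,
}

/// Simplified stand-in for the real `GitSha` type.
#[derive(Clone, Debug, PartialEq, Eq)]
struct GitSha(String);

/// A cheaply cloneable handle to a shared map of resolved references,
/// owned by whoever constructs it instead of living in a global static.
#[derive(Clone, Default)]
struct GitResolver(Arc<Mutex<HashMap<RepositoryReference, GitSha>>>);

impl GitResolver {
    /// Record (or pre-populate) the SHA for a reference.
    fn insert(&self, reference: RepositoryReference, sha: GitSha) {
        self.0.lock().unwrap().insert(reference, sha);
    }

    /// Look up a previously resolved SHA, if any.
    fn get(&self, reference: &RepositoryReference) -> Option<GitSha> {
        self.0.lock().unwrap().get(reference).cloned()
    }
}
```

Because the handle is an explicit value, a caller can build one, seed it with known SHAs, and hand clones to whatever needs it.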
With the change, we remove the special casing of workspace dependencies

and resolve `tool.uv` for all git and directory distributions. This

gives us support for non-editable workspace dependencies and path

dependencies in other workspaces. It removes a lot of special casing

around workspaces. These changes are the groundwork for supporting

`tool.uv` with dynamic metadata.

The basis for this change is moving `Requirement` from
`distribution-types` to `pypi-types` and the lowering logic from
`uv-requirements` to `uv-distribution`. These changes should be split
out into separate PRs.

I've included an example workspace `albatross-root-workspace2` where

`bird-feeder` depends on `a` from another workspace `ab`. There's a

bunch of failing tests and regressed error messages that still need

fixing. It does fix the audited package count for the workspace tests.

When parsing requirements from any source, directly parse the URL parts
(and reject unsupported URLs) instead of parsing URL parts at a later
stage. This removes a bunch of error branches and concludes the work of
parsing URL parts once and passing them around everywhere.

Many usages of the assembled `VerbatimUrl` remain, but these can be

removed incrementally.

Please review commit-by-commit.

## Summary

This PR consolidates the concurrency limits used throughout `uv` and

exposes two limits, `UV_CONCURRENT_DOWNLOADS` and

`UV_CONCURRENT_BUILDS`, as environment variables.

Currently, `uv` has a number of concurrent streams that it buffers using

relatively arbitrary limits for backpressure. However, many of these

limits are conflated. We run a relatively small number of tasks overall

and should start most things as soon as possible. What we really want to

limit are three separate operations:

- File I/O. This is managed by tokio's blocking pool and we should not
  really have to worry about it.

- Network I/O.

- Python build processes.

Because the current limits span a broad range of tasks, it's possible

that a limit meant for network I/O is occupied by tasks performing

builds, reading from the file system, or even waiting on a `OnceMap`. We

also don't limit build processes that end up being required to perform a

download. While this may not pose a performance problem because our

limits are relatively high, it does mean that the limits do not do what

we want, making it tricky to expose them to users

(https://github.com/astral-sh/uv/issues/1205,

https://github.com/astral-sh/uv/issues/3311).

After this change, the limits on network I/O and build processes are

centralized and managed by semaphores. All other tasks are unbuffered

(note that these tasks are still bounded, so backpressure should not be

a problem).
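As a rough, blocking illustration of the idea (the PR itself uses async semaphores), the sketch below keeps one semaphore per limited resource and reads the limits from the two environment variables. The `Semaphore`, `Permit`, `Concurrency`, and `parse_limit` names are hypothetical, and the fallback values of 50 and 4 are placeholders, not uv's defaults:

```rust
use std::sync::{Condvar, Mutex};

/// Minimal counting semaphore built on a mutex and condition variable.
struct Semaphore {
    permits: Mutex<usize>,
    available: Condvar,
}

/// RAII permit: the slot is returned when the permit is dropped.
struct Permit<'a>(&'a Semaphore);

impl Semaphore {
    fn new(permits: usize) -> Self {
        Self { permits: Mutex::new(permits), available: Condvar::new() }
    }

    /// Block until a permit is free, then take it.
    fn acquire(&self) -> Permit<'_> {
        let mut permits = self.permits.lock().unwrap();
        while *permits == 0 {
            permits = self.available.wait(permits).unwrap();
        }
        *permits -= 1;
        Permit(self)
    }
}

impl Drop for Permit<'_> {
    fn drop(&mut self) {
        *self.0.permits.lock().unwrap() += 1;
        self.0.available.notify_one();
    }
}

/// Parse a positive limit, falling back to `default` when unset or invalid.
fn parse_limit(value: Option<&str>, default: usize) -> usize {
    value
        .and_then(|v| v.parse::<usize>().ok())
        .filter(|n| *n > 0)
        .unwrap_or(default)
}

/// One semaphore per limited resource; everything else runs unthrottled.
struct Concurrency {
    downloads: Semaphore,
    builds: Semaphore,
}

impl Concurrency {
    fn from_env() -> Self {
        let downloads = std::env::var("UV_CONCURRENT_DOWNLOADS").ok();
        let builds = std::env::var("UV_CONCURRENT_BUILDS").ok();
        Self {
            // Placeholder defaults, chosen only for this sketch.
            downloads: Semaphore::new(parse_limit(downloads.as_deref(), 50)),
            builds: Semaphore::new(parse_limit(builds.as_deref(), 4)),
        }
    }
}
```

A task acquires a permit only around the operation being limited, so a download permit is never held while waiting on a build.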

## Introduction

PEP 621 is limited. Specifically, it lacks

* Relative path support

* Editable support

* Workspace support

* Index pinning or any sort of index specification

The semantics of URLs are a custom extension: PEP 440 does not specify
how to use git references or subdirectories; instead, pip has a custom
stringly-typed format. We need to somehow support these while still
staying compatible with PEP 621.

## `tool.uv.sources`

Drawing inspiration from cargo, poetry and rye, we add `tool.uv.sources`
and (for now stub only) `tool.uv.workspace`:

```toml
[project]
name = "albatross"
version = "0.1.0"
dependencies = [
  "tqdm >=4.66.2,<5",
  "torch ==2.2.2",
  "transformers[torch] >=4.39.3,<5",
  "importlib_metadata >=7.1.0,<8; python_version < '3.10'",
  "mollymawk ==0.1.0"
]

[tool.uv.sources]
tqdm = { git = "https://github.com/tqdm/tqdm", rev = "cc372d09dcd5a5eabdc6ed4cf365bdb0be004d44" }
importlib_metadata = { url = "https://github.com/python/importlib_metadata/archive/refs/tags/v7.1.0.zip" }
torch = { index = "torch-cu118" }
mollymawk = { workspace = true }

[tool.uv.workspace]
include = [
  "packages/mollymawk"
]

[tool.uv.indexes]
torch-cu118 = "https://download.pytorch.org/whl/cu118"
```

See `docs/specifying_dependencies.md` for a detailed explanation of the
format. The basic gist is that `project.dependencies` is what ends up on
PyPI, while `tool.uv.sources` are your non-published additions. We do
support the full range of PEP 508; we just hide it in the docs and
prefer the exploded table for easier readability and less confusion with
actual URL parts.
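To make the relationship between the two tables concrete, here is a small sketch of the lowering step under simplifying assumptions. All of the types and the `lower` function are hypothetical names for illustration; the sketch skips package name normalization, markers, extras, and the index and workspace sources:

```rust
use std::collections::HashMap;

/// Hypothetical mirror of a `tool.uv.sources` entry.
#[derive(Clone, Debug, PartialEq)]
enum Source {
    Git { git: String, rev: Option<String> },
    Url { url: String },
    Path { path: String, editable: bool },
}

/// Hypothetical lowered form: where the package actually comes from.
#[derive(Debug, PartialEq)]
enum LoweredSource {
    Registry { specifier: String },
    Git { url: String, rev: Option<String> },
    Url { url: String },
    Path { path: String, editable: bool },
}

#[derive(Debug, PartialEq)]
struct LoweredRequirement {
    name: String,
    source: LoweredSource,
}

/// Combine one `project.dependencies` entry with its optional source.
/// Without a source entry, the requirement stays a registry requirement.
fn lower(name: &str, specifier: &str, sources: &HashMap<String, Source>) -> LoweredRequirement {
    let source = match sources.get(name) {
        None => LoweredSource::Registry { specifier: specifier.to_string() },
        Some(Source::Git { git, rev }) => LoweredSource::Git {
            url: git.clone(),
            rev: rev.clone(),
        },
        Some(Source::Url { url }) => LoweredSource::Url { url: url.clone() },
        Some(Source::Path { path, editable }) => LoweredSource::Path {
            path: path.clone(),
            editable: *editable,
        },
    };
    LoweredRequirement { name: name.to_string(), source }
}
```

In this sketch the source entry replaces the location of the package, while the published metadata in `project.dependencies` is left untouched.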

This format should eventually be able to subsume requirements.txt's
current use cases. While we will continue to support the legacy `uv pip`
interface, this is a piece of uv's own top-level interface. Together
with `uv run` and a lockfile format, you should only need to write
`pyproject.toml` and do `uv run`, which generates/uses/updates your
lockfile behind the scenes, with no more pip-style requirements
involved. It also lays the groundwork for implementing index pinning.

## Changes

This PR implements:

* Reading and lowering `project.dependencies`,
  `project.optional-dependencies` and `tool.uv.sources` into a new
  requirements format, including:
  * Git dependencies
  * Url dependencies
  * Path dependencies, including relative and editable

* `pip install` integration

* Error reporting for invalid `tool.uv.sources`

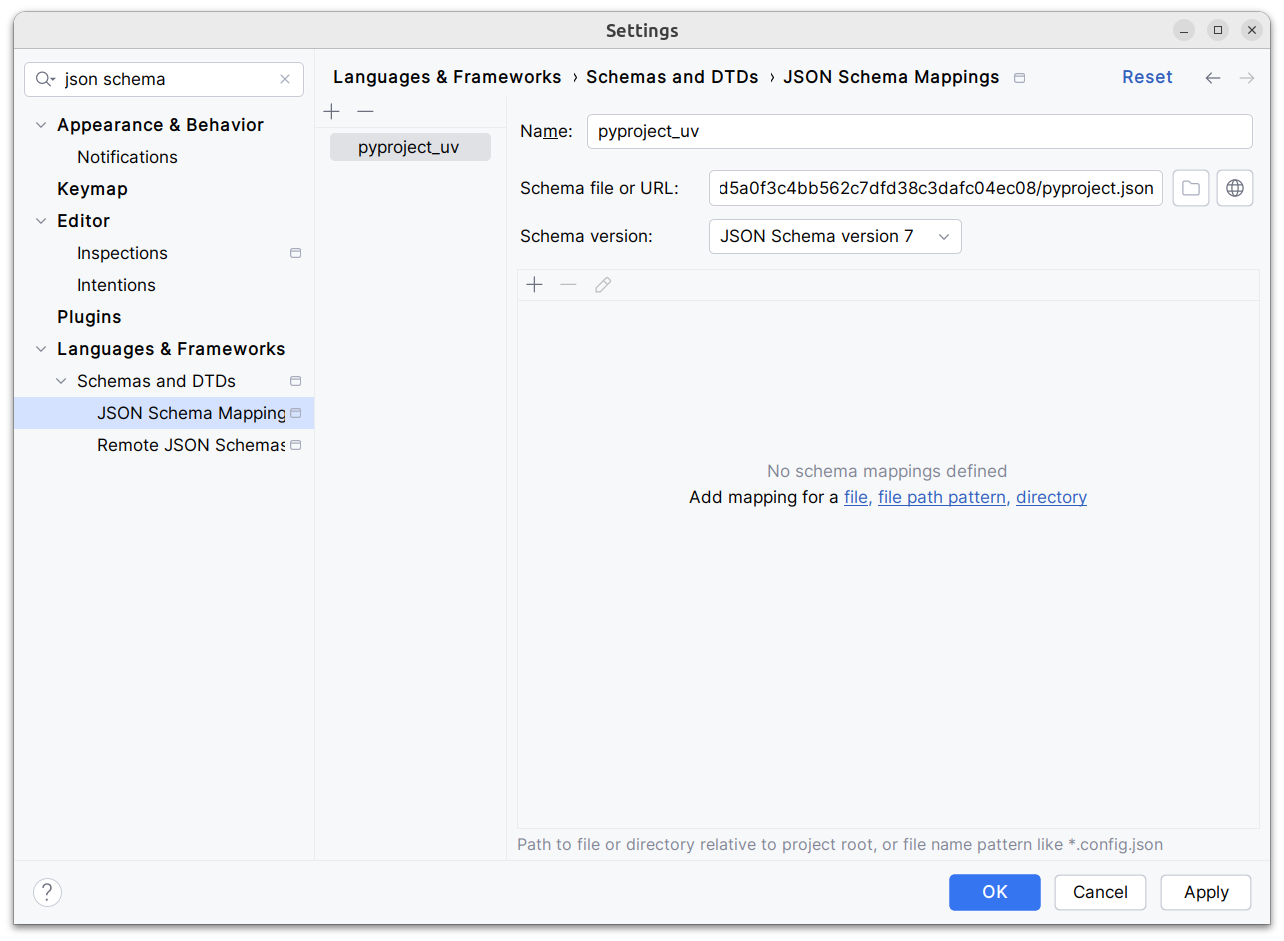

* Json schema integration (works in pycharm, see below)

* Draft user-level docs (see `docs/specifying_dependencies.md`)

It does not implement:

* `pip compile` testing (deprioritized in favor of our own lockfile)

* Index pinning (stub definitions only)

* Development dependencies

* Workspace support (stub definitions only)

* Overrides in pyproject.toml

* Patching/replacing dependencies

One technically breaking change is that we now require a user-provided
pyproject.toml to be valid with respect to PEP 621. Included files still
fall back to PEP 517. That means `pip install -r pyproject.toml`
requires it to be valid, while `pip install -r requirements.txt` with
`-e .` as content falls back to PEP 517 as before.

## Implementation

The `pep508` requirement is replaced by a new `UvRequirement` (name up

for bikeshedding, not particularly attached to the uv prefix). The still

existing `pep508_rs::Requirement` type is a url format copied from pip's

requirements.txt and doesn't appropriately capture all features we

want/need to support. The bulk of the diff is changing the requirement

type throughout the codebase.

We still use `VerbatimUrl` in many places, where we would expect a

parsed/decomposed url type, specifically:

* Reading core metadata except top level pyproject.toml files, we fail a
  step later instead if the url isn't supported.
* Allowed `Urls`.
* `PackageId` with a custom `CanonicalUrl` comparison, instead of
  canonicalizing urls eagerly.
* `PubGrubPackage`: We eventually convert the `VerbatimUrl` back to a
  `Dist` (`Dist::from_url`), instead of remembering the url.
* Source dist types: We use verbatim url even though we know and require
  that these are supported urls we can and have parsed.

I tried to improve the situation by replacing `VerbatimUrl`, but
these changes would require massive invasive changes (see e.g.
https://github.com/astral-sh/uv/pull/3253). A main problem is the ref
`VersionOrUrl` and applying overrides, which assume the same
requirement/url type everywhere. In its current form, this PR increases
this tech debt.

I've tried to split off PRs and commits, but the main refactoring is

still a single monolith commit to make it compile and the tests pass.

## Demo

Adding d1ae3b85d5/pyproject.json as json schema (v7) to pycharm for
`pyproject.toml`, you can try the IDE support already:

[dove.webm](https://github.com/astral-sh/uv/assets/6826232/c293c272-c80b-459d-8c95-8c46a8d198a1)

Moves all of `uv-toolchain` into `uv-interpreter`. We may split these

out in the future, but the refactoring I want to do for interpreter

discovery is easier if I don't have to deal with entanglement. Includes

some restructuring of `uv-interpreter`.

Part of #2386

See https://github.com/astral-sh/uv/issues/2617

Note this also includes:

- #2918

- #2931 (pending)

A first step towards Python toolchain management in Rust.

First, we add a new crate to manage Python download metadata:

- Adds a new `uv-toolchain` crate

- Adds Rust structs for Python version download metadata

- Duplicates the script which downloads Python version metadata

- Adds a script to generate Rust code from the JSON metadata

- Adds a utility to download and extract the Python version

I explored some alternatives like a build script using things like
`serde` and `uneval` to automatically construct the code from our
structs but deemed it too heavy. Unlike Rye, I don't generate the Rust
directly from the web requests and have an intermediate JSON layer to
speed up iteration on the Rust types.

Next, we add a `uv-dev` command `fetch-python` to download Python
versions per the bootstrapping script.

- Downloads a requested version or reads from `.python-versions`

- Extracts to `UV_BOOTSTRAP_DIR`

- Links executables for path extension

This command is not really intended to be user facing, but it's a good

PoC for the `uv-toolchain` API. Hash checking (via the sha256) isn't

implemented yet, we can do that in a follow-up.

Finally, we remove the `scripts/bootstrap` directory, update CI to use

the new command, and update the CONTRIBUTING docs.

<img width="1023" alt="Screenshot 2024-04-08 at 17 12 15" src="https://github.com/astral-sh/uv/assets/2586601/57bd3cf1-7477-4bb8-a8e9-802a00d772cb">

Needed to prevent circular dependencies in my toolchain work (#2931). I

think this is probably a reasonable change as we move towards persistent

configuration too?

Unfortunately `BuildIsolation` needs to be in `uv-types` to avoid

circular dependencies still. We might be able to resolve that in the

future.

This is driving me a little crazy and is becoming a larger problem in

#2596 where I need to move more types (like `Upgrade` and `Reinstall`)

into this crate. Anything that's shared across our core resolver,

install, and build crates needs to be defined in this crate to avoid

cyclic dependencies. We've outgrown it being a single file with some

shared traits.

There are no behavioral changes here.

Scott Schafer gave me the idea: We can avoid repeating the path for
workspace dependencies everywhere if we declare them in the virtual
package once and treat them as workspace dependencies from there on.

## Summary

I tried out `cargo shear` to see if there are any unused dependencies

that `cargo udeps` isn't reporting. It turned out, there are a few. This

PR removes those dependencies.

## Test Plan

`cargo build`

The architecture of uv does not necessarily match that of the python

interpreter (#2326). In cross compiling/testing scenarios the operating

system can also mismatch. To solve this, we move arch and os detection

to python, vendoring the relevant pypa/packaging code, preventing

mismatches between what the python interpreter was compiled for and what

uv was compiled for.

To make the scripts more manageable, they are now a directory in a
tempdir and we run them with `python -m`. I've simplified the
pypa/packaging code since we're still building the tags in rust. A
`Platform` is now instantiated by querying the python interpreter for
its platform. The pypa/packaging files are copied verbatim for easier
updates except a `lru_cache()` python 3.7 backport.

Error handling is done by a `"result": "success|error"` field that
allows passing error details to rust:

```console

$ uv venv --no-cache

× Can't use Python at `/home/konsti/projects/uv/.venv/bin/python3`

╰─▶ Unknown operation system `linux`

```

I've used the [maturin sysconfig collection](855f6d2cb1/sysconfig)
as reference. I'm unsure how to test these changes across the wide
variety of platforms.

Fixes #2326

Add a `--compile` option to `pip install` and `pip sync`.

I chose to implement this as a separate pass over the entire venv. If we
wanted to compile during installation, we'd have to make sure that
writing is exclusive, to avoid concurrent processes writing broken
`.pyc` files. Additionally, this ensures that the entire site-packages
are bytecode compiled, even if there are packages that aren't from this
`uv` invocation. The disadvantage is that we do not update RECORD and
rely on this comment from [PEP 491](https://peps.python.org/pep-0491/):

> Uninstallers should be smart enough to remove .pyc even if it is not
> mentioned in RECORD.

If this is a problem we can change it to run during installation and

write RECORD entries.

Internally, this is implemented as an async work-stealing subprocess
worker pool. The producer is a directory traversal over site-packages,
sending each `.py` file to a bounded async FIFO queue/channel. Each
worker has a long-running Python process. It pops the queue to get a
single path (or exits if the channel is closed), then sends it to
stdin, waits until it's informed that the compilation is done through a
line on stdout, and repeats. This is fast: e.g., installing `jupyter
plotly` on Python 3.12, it processes 15876 files in 319ms with 32
threads (vs. 3.8s with a single core). The Python processes internally
call `compileall.compile_file`, the same as pip.

Like pip, we ignore and silence all compilation errors
(https://github.com/astral-sh/uv/issues/1559). There is a 10s timeout to
handle the case when the workers get stuck. For the reviewers, please
check if I missed any spots where we could deadlock; this is the hardest
part of this PR.
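The producer/worker shape can be sketched with plain threads and a bounded standard-library channel. This is a simplification, not the implementation in this PR: it is synchronous rather than async, has no timeout, and `compile_file` is a stand-in that only checks the file extension instead of talking to a long-running Python process. The `compile_all` name is hypothetical:

```rust
use std::path::{Path, PathBuf};
use std::sync::mpsc::sync_channel;
use std::sync::{Arc, Mutex};
use std::thread;

/// Stand-in for sending a path to a Python process and awaiting its reply.
fn compile_file(path: &Path) -> bool {
    path.extension().map_or(false, |ext| ext == "py")
}

/// Feed `paths` through a bounded queue to `workers` threads and return
/// how many files were "compiled".
fn compile_all(paths: Vec<PathBuf>, workers: usize) -> usize {
    let workers = workers.max(1);
    // Bounded FIFO queue: the producer blocks when the workers fall behind.
    let (sender, receiver) = sync_channel::<PathBuf>(workers * 2);
    let receiver = Arc::new(Mutex::new(receiver));

    let handles: Vec<_> = (0..workers)
        .map(|_| {
            let receiver = Arc::clone(&receiver);
            thread::spawn(move || {
                let mut compiled = 0usize;
                loop {
                    // The lock is released at the end of this statement,
                    // so it is held only while popping a single path.
                    let next = receiver.lock().unwrap().recv();
                    match next {
                        Ok(path) => {
                            if compile_file(&path) {
                                compiled += 1;
                            }
                        }
                        // The channel is closed and drained: exit the worker.
                        Err(_) => break,
                    }
                }
                compiled
            })
        })
        .collect();

    // Producer: in the PR this is a directory traversal over site-packages.
    for path in paths {
        sender.send(path).unwrap();
    }
    // Closing the channel is what lets idle workers terminate.
    drop(sender);

    handles.into_iter().map(|handle| handle.join().unwrap()).sum()
}
```

The deadlock-prone spots in this shape are the ones worth checking in review: the producer must drop its sender, and a worker must never hold the queue lock while doing the actual work.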

I've added `uv-dev compile <dir>` and `uv-dev clear-compile <dir>`
commands, mainly for my own benchmarking. I don't want to expose them in
`uv`; they are almost certainly not the correct workflow and we don't
want to support them.

Fixes #1788
Closes #1559
Closes #1928

First, replace all usages in files in-place. I used my editor for this.

If someone wants to add a one-liner that'd be fun.

Then, update directory and file names:

```
# Run twice for nested directories
find . -type d -print0 | xargs -0 rename s/puffin/uv/g
find . -type d -print0 | xargs -0 rename s/puffin/uv/g

# Update files
find . -type f -print0 | xargs -0 rename s/puffin/uv/g
```

Then add all the files again:

```
# Add all the files again
git add crates
git add python/uv

# This one needs a force-add
git add -f crates/uv-trampoline
```