## Summary

`--add-noqa` now runs in two stages: first, the linter finds all

diagnostics that need noqa comments and generate edits on a per-line

basis. Second, these edits are applied, in order, to the document.

A public-facing function, `generate_noqa_edits`, has also been

introduced, which returns noqa edits generated on a per-diagnostic

basis. This will be used by `ruff server` for noqa comment quick-fixes.

## Test Plan

Unit tests have been updated.

## Summary

This PR adds updates the semantic model to detect attribute docstring.

Refer to [PEP 258](https://peps.python.org/pep-0258/#attribute-docstrings)

for the definition of an attribute docstring.

This PR doesn't add full support for it but only considers string

literals as attribute docstring for the following cases:

1. A string literal following an assignment statement in the **global

scope**.

2. A global class attribute

For an assignment statement, it's considered an attribute docstring only

if the target expression is a name expression (`x = 1`). So, chained

assignment, multiple assignment or unpacking, and starred expression,

which are all valid in the target position, aren't considered here.

In `__init__` method, an assignment to the `self` variable like `self.x = 1`

is also a candidate for an attribute docstring. **This PR does not

support this position.**

## Test Plan

I used the following source code along with a print statement to verify

that the attribute docstring detection is correct.

Refer to the PR description for the code snippet.

I'll add this in the follow-up PR

(https://github.com/astral-sh/ruff/pull/11302) which uses this method.

## Summary

Lots of TODOs and things to clean up here, but it demonstrates the

working lint rule.

## Test Plan

```

➜ cat main.py

from typing import override

from base import B

class C(B):

@override

def method(self): pass

➜ cat base.py

class B: pass

➜ cat typing.py

def override(func):

return func

```

(We provide our own `typing.py` since we don't have typeshed vendored or

type stub support yet.)

```

➜ ./target/debug/red_knot main.py

...

1 0.012086s TRACE red_knot Main Loop: Tick

[crates/red_knot/src/main.rs:157:21] diagnostics = [

"Method C.method is decorated with `typing.override` but does not override any base class method",

]

```

If we add `def method(self): pass` to class `B` in `base.py` and run

red_knot again, there is no lint error.

---------

Co-authored-by: Micha Reiser <micha@reiser.io>

<!--

Thank you for contributing to Ruff! To help us out with reviewing,

please consider the following:

- Does this pull request include a summary of the change? (See below.)

- Does this pull request include a descriptive title?

- Does this pull request include references to any relevant issues?

-->

## Summary

Resolves#11263

Detect `pathlib.Path.open` calls which do not specify a file encoding.

## Test Plan

Test cases added to fixture.

---------

Co-authored-by: Dhruv Manilawala <dhruvmanila@gmail.com>

This PR vendors typeshed!

- The first commit vendors the stdlib directory from typeshed into a new crates/red_knot/vendored_typeshed directory.

- The second commit adjusts various linting config files to make sure that the vendored code is excluded from typo checks, formatting checks, etc.

- The LICENSE and README.md files are also vendored, but all other directories and files (stubs, scripts, tests, test_cases, etc.) are excluded. We should have no need for them (except possibly stubs/, discussed in more depth below).

- Similar to the way pyright has a commit.txt file in its vendored copy of typeshed, to indicate which typeshed commit the vendored code corresponds to, I've also added a crates/red_knot/vendored_typeshed/source_commit.txt file in the third commit of this PR.

One open question is: should we vendor the stdlib and stubs directories, or just the stdlib directory? The stubs/ directory contains stubs for 162 third-party packages outside the stdlib. Mypy and typeshed_client1 only vendor the stdlib directory; pyright and pyre vendor both the stdlib and stubs directories; pytype vendors the entire typeshed repo (scripts/, tests/ and all).

In this PR, I've chosen to copy mypy and typeshed_client. Unlike vendoring the stdlib, which is unavoidable if we want to do typechecking of the stdlib, it's not strictly necessary to vendor the stubs directory: each subdirectory in stubs is published to PyPI as a standalone stubs distribution that can be (uv)-pip-installed into a virtual environment. It might be useful for our users if we vendored those stubs anyway, but there are costs as well as benefits to doing so (apart from just the sheer amount of vendored code in the ruff repository), so I'd rather consider it separately.

Resolves https://github.com/astral-sh/ruff/issues/11313

## Summary

PLR0912(too-many-branches) did not count branches inside with: blocks.

With this fix, the branches inside with statements are also counted.

## Test Plan

Added a new test case.

## Summary

This PR removes the cyclic dev dependency some of the crates had with

the parser crate.

The cyclic dependencies are:

* `ruff_python_ast` has a **dev dependency** on `ruff_python_parser` and

`ruff_python_parser` directly depends on `ruff_python_ast`

* `ruff_python_trivia` has a **dev dependency** on `ruff_python_parser`

and `ruff_python_parser` has an indirect dependency on

`ruff_python_trivia` (`ruff_python_parser` - `ruff_python_ast` -

`ruff_python_trivia`)

Specifically, this PR does the following:

* Introduce two new crates

* `ruff_python_ast_integration_tests` and move the tests from the

`ruff_python_ast` crate which uses the parser in this crate

* `ruff_python_trivia_integration_tests` and move the tests from the

`ruff_python_trivia` crate which uses the parser in this crate

### Motivation

The main motivation for this PR is to help development. Before this PR,

`rust-analyzer` wouldn't provide any intellisense in the

`ruff_python_parser` crate regarding the symbols in `ruff_python_ast`

crate.

```

[ERROR][2024-05-03 13:47:06] .../vim/lsp/rpc.lua:770 "rpc" "/Users/dhruv/.cargo/bin/rust-analyzer" "stderr" "[ERROR project_model::workspace] cyclic deps: ruff_python_parser(Idx::<CrateData>(50)) -> ruff_python_ast(Idx::<CrateData>(37)), alternative path: ruff_python_ast(Idx::<CrateData>(37)) -> ruff_python_parser(Idx::<CrateData>(50))\n"

```

## Test Plan

Check the logs of `rust-analyzer` to not see any signs of cyclic

dependency.

## Summary

While I was here, I also updated the rule to use

`function_type::classify` rather than hard-coding `staticmethod` and

friends.

Per Carl:

> Enum instances are already referred to by the class, forming a cycle

that won't get collected until the class itself does. At which point the

`lru_cache` itself would be collected, too.

Closes https://github.com/astral-sh/ruff/issues/9912.

## Summary

Historically, we only ignored `flake8-blind-except` if you re-raised or

logged the exception as a _direct_ child statement; but it could be

nested somewhere. This was just a known limitation at the time of adding

the previous logic.

Closes https://github.com/astral-sh/ruff/issues/11289.

## Summary

A follow-up to https://github.com/astral-sh/ruff/pull/11222. `ruff

server` stalls during shutdown with Neovim because after it receives an

exit notification and closes the I/O thread, it attempts to log a

success message to `stderr`. Removing this log statement fixes this

issue.

## Test Plan

Track the instances of `ruff` in the OS task manager as you open and

close Neovim. A new instance should appear when Neovim starts and it

should disappear once Neovim is closed.

## Summary

Fixes https://github.com/astral-sh/ruff/issues/11258.

This PR fixes the settings resolver to match the expected behavior when

file-based configuration is not available.

## Test Plan

In a workspace with no file-based configuration, set a setting in your

editor and confirm that this setting is used instead of the default.

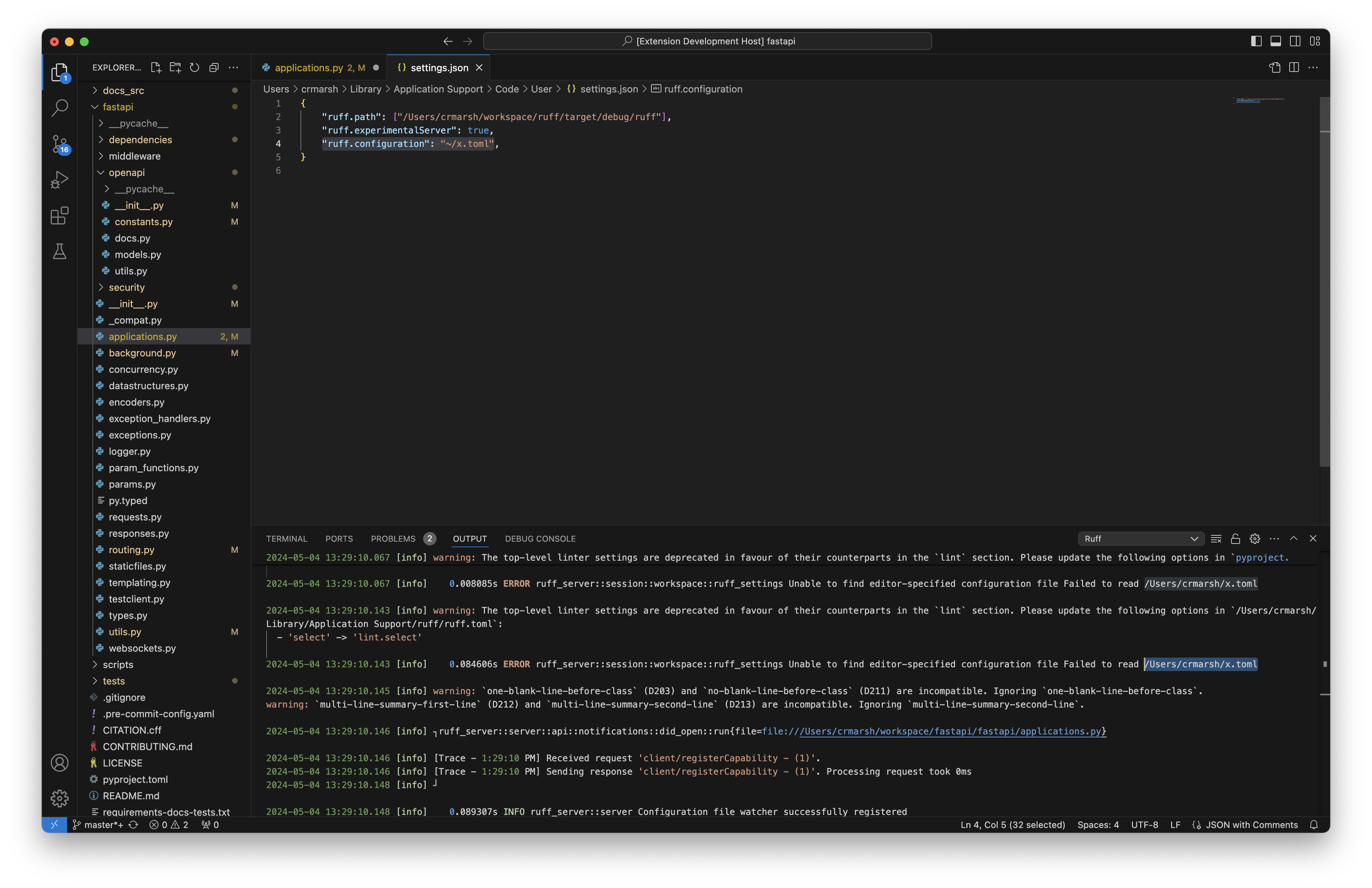

## Summary

Users can now include tildes and environment variables in the provided

path, just like with `--config`.

Closes#11277.

## Test Plan

Set the configuration path to `"ruff.configuration": "~/x.toml"`;

verified that the server attempted to read from `/Users/crmarsh/x.toml`.

## Summary

Change `hardcoded-tmp-directory-extend` example to follow the schema:

1e91a09918/ruff.schema.json (L896-L901)

<!-- What's the purpose of the change? What does it do, and why? -->

## Summary

In #9218 `Rule::NeverUnion` was partially removed from a

`checker.any_enabled` call. This makes the change consistent.

## Test Plan

`cargo test`

## Summary

Fixes https://github.com/astral-sh/ruff/issues/11207.

The server would hang after handling a shutdown request on

`IoThreads::join()` because a global sender (`MESSENGER`, used to send

`window/showMessage` notifications) would remain allocated even after

the event loop finished, which kept the writer I/O thread channel open.

To fix this, I've made a few structural changes to `ruff server`. I've

wrapped the send/receive channels and thread join handle behind a new

struct, `Connection`, which facilitates message sending and receiving,

and also runs `IoThreads::join()` after the event loop finishes. To

control the number of sender channels, the `Connection` wraps the sender

channel in an `Arc` and only allows the creation of a wrapper type,

`ClientSender`, which hold a weak reference to this `Arc` instead of

direct channel access. The wrapper type implements the channel methods

directly to prevent access to the inner channel (which would allow the

channel to be cloned). ClientSender's function is analogous to

[`WeakSender` in

`tokio`](https://docs.rs/tokio/latest/tokio/sync/mpsc/struct.WeakSender.html).

Additionally, the receiver channel cannot be accessed directly - the

`Connection` only exposes an iterator over it.

These changes will guarantee that all channels are closed before the I/O

threads are joined.

## Test Plan

Repeatedly open and close an editor utilizing `ruff server` while

observing the task monitor. The net total amount of open `ruff`

instances should be zero once all editor windows have closed.

The following logs should also appear after the server is shut down:

<img width="835" alt="Screenshot 2024-04-30 at 3 56 22 PM"

src="https://github.com/astral-sh/ruff/assets/19577865/404b74f5-ef08-4bb4-9fa2-72e72b946695">

This can be tested on VS Code by changing the settings and then checking

`Output`.

* Add `decorators: Vec<Type>` to `FunctionType` struct

* Thread decorators through two `add_function` definitions

* Populate decorators at the callsite in `infer_symbol_type`

* Small test

Resolves#10390 and starts to address #10391

# Changes to behavior

* In `__init__.py` we now offer some fixes for unused imports.

* If the import binding is first-party this PR suggests a fix to turn it

into a redundant alias.

* If the import binding is not first-party, this PR suggests a fix to

remove it from the `__init__.py`.

* The fix-titles are specific to these new suggested fixes.

* `checker.settings.ignore_init_module_imports` setting is

deprecated/ignored. There is probably a documentation change to make

that complete which I haven't done.

---

<details><summary>Old description of implementation changes</summary>

# Changes to the implementation

* In the body of the loop over import statements that contain unused

bindings, the bindings are partitioned into `to_reexport` and

`to_remove` (according to how we want to resolve the fact they're

unused) with the following predicate:

```rust

in_init && is_first_party(checker, &import.qualified_name().to_string())

// true means make it a reexport

```

* Instead of generating a single fix per import statement, we now

generate up to two fixes per import statement:

```rust

(fix_by_removing_imports(checker, node_id, &to_remove, in_init).ok(),

fix_by_reexporting(checker, node_id, &to_reexport, dunder_all).ok())

```

* The `to_remove` fixes are unsafe when `in_init`.

* The `to_explicit` fixes are safe. Currently, until a future PR, we

make them redundant aliases (e.g. `import a` would become `import a as

a`).

## Other changes

* `checker.settings.ignore_init_module_imports` is deprecated/ignored.

Instead, all fixes are gated on `checker.settings.preview.is_enabled()`.

* Got rid of the pattern match on the import-binding bound by the inner

loop because it seemed less readable than referencing fields on the

binding.

* [x] `// FIXME: rename "imports" to "bindings"` if reviewer agrees (see

code)

* [x] `// FIXME: rename "node_id" to "import_statement"` if reviewer

agrees (see code)

<details>

<summary><h2>Scope cut until a future PR</h2></summary>

* (Not implemented) The `to_explicit` fixes will be added to `__all__`

unless it doesn't exist. When `__all__` doesn't exist they're resolved

by converting to redundant aliases (e.g. `import a` would become `import

a as a`).

---

</details>

# Test plan

* [x] `crates/ruff_linter/resources/test/fixtures/pyflakes/F401_24`

contains an `__init__.py` with*out* `__all__` that exercises the

features in this PR, but it doesn't pass.

* [x]

`crates/ruff_linter/resources/test/fixtures/pyflakes/F401_25_dunder_all`

contains an `__init__.py` *with* `__all__` that exercises the features

in this PR, but it doesn't pass.

* [x] Write unit tests for the new edit functions in

`fix::edits::make_redundant_alias`.

</details>

---------

Co-authored-by: Micha Reiser <micha@reiser.io>

## Summary

This PR removes the `ImportMap` implementation and all its routing

through ruff.

The import map was added in https://github.com/astral-sh/ruff/pull/3243

but we then never ended up using it to do cross file analysis.

We are now working on adding multifile analysis to ruff, and revisit

import resolution as part of it.

```

hyperfine --warmup 10 --runs 20 --setup "./target/release/ruff clean" \

"./target/release/ruff check crates/ruff_linter/resources/test/cpython -e -s --extend-select=I" \

"./target/release/ruff-import check crates/ruff_linter/resources/test/cpython -e -s --extend-select=I"

Benchmark 1: ./target/release/ruff check crates/ruff_linter/resources/test/cpython -e -s --extend-select=I

Time (mean ± σ): 37.6 ms ± 0.9 ms [User: 52.2 ms, System: 63.7 ms]

Range (min … max): 35.8 ms … 39.8 ms 20 runs

Benchmark 2: ./target/release/ruff-import check crates/ruff_linter/resources/test/cpython -e -s --extend-select=I

Time (mean ± σ): 36.0 ms ± 0.7 ms [User: 50.3 ms, System: 58.4 ms]

Range (min … max): 34.5 ms … 37.6 ms 20 runs

Summary

./target/release/ruff-import check crates/ruff_linter/resources/test/cpython -e -s --extend-select=I ran

1.04 ± 0.03 times faster than ./target/release/ruff check crates/ruff_linter/resources/test/cpython -e -s --extend-select=I

```

I suspect that the performance improvement should even be more

significant for users that otherwise don't have any diagnostics.

```

hyperfine --warmup 10 --runs 20 --setup "cd ../ecosystem/airflow && ../../ruff/target/release/ruff clean" \

"./target/release/ruff check ../ecosystem/airflow -e -s --extend-select=I" \

"./target/release/ruff-import check ../ecosystem/airflow -e -s --extend-select=I"

Benchmark 1: ./target/release/ruff check ../ecosystem/airflow -e -s --extend-select=I

Time (mean ± σ): 53.7 ms ± 1.8 ms [User: 68.4 ms, System: 63.0 ms]

Range (min … max): 51.1 ms … 58.7 ms 20 runs

Benchmark 2: ./target/release/ruff-import check ../ecosystem/airflow -e -s --extend-select=I

Time (mean ± σ): 50.8 ms ± 1.4 ms [User: 50.7 ms, System: 60.9 ms]

Range (min … max): 48.5 ms … 55.3 ms 20 runs

Summary

./target/release/ruff-import check ../ecosystem/airflow -e -s --extend-select=I ran

1.06 ± 0.05 times faster than ./target/release/ruff check ../ecosystem/airflow -e -s --extend-select=I

```

## Test Plan

`cargo test`

## Summary

Fixes#11185Fixes#11214

Document path and package information is now forwarded to the Ruff

linter, which allows `per-file-ignores` to correctly match against the

file name. This also fixes an issue where the import sorting rule didn't

distinguish between third-party and first-party packages since we didn't

pass in the package root.

## Test Plan

`per-file-ignores` should ignore files as expected. One quick way to

check is by adding this to your `pyproject.toml`:

```toml

[tool.ruff.lint.per-file-ignores]

"__init__.py" = ["ALL"]

```

Then, confirm that no diagnostics appear when you add code to an

`__init__.py` file (besides syntax errors).

The import sorting fix can be verified by failing to reproduce the

original issue - an `I001` diagnostic should not appear in

`other_module.py`.

## Summary

Fixes https://github.com/astral-sh/ruff/issues/11158.

A settings file in the ruff user configuration directory will be used as

a configuration fallback, if it exists.

## Test Plan

Create a `pyproject.toml` or `ruff.toml` configuration file in the ruff

user configuration directory.

* On Linux, that will be `$XDG_CONFIG_HOME/ruff/` or `$HOME/.config`

* On macOS, that will be `$HOME/Library/Application Support`

* On Windows, that will be `{FOLDERID_LocalAppData}`

Then, open a file inside of a workspace with no configuration. The

settings in the user configuration file should be used.

## Summary

I think the check included here does make sense, but I don't see why we

would allow it if a value is provided for the attribute -- since, in

that case, isn't it _not_ abstract?

Closes: https://github.com/astral-sh/ruff/issues/11208.

## Summary

This PR changes the `DebugStatistics` and `ReleaseStatistics` structs so

that they implement a common `StatisticsRecorder` trait, and makes the

`KeyValueCache` struct generic over a type parameter bound to that

trait. The advantage of this approach is that it's much harder for the

`DebugStatistics` and `ReleaseStatistics` structs to accidentally grow

out of sync in the methods that they implement, which was the cause of

the release-build failure recently fixed in #11177.

## Test Plan

`cargo test -p red_knot` and `cargo build --release` both continue to

pass for me locally

* Adds `Symbol.flag` bitfield. Populates it from (the three renamed)

`add_or_update_symbol*` methods.

* Currently there are these flags supported:

* `IS_DEFINED` is set in a scope where a variable is defined.

* `IS_USED` is set in a scope where a variable is referenced. (To have

both this and `IS_DEFINED` would require two separate appearances of a

variable in the same scope-- one def and one use.)

* `MARKED_GLOBAL` and `MARKED_NONLOCAL` are **not yet implemented**.

(*TODO: While traversing, if you find these declarations, add these

flags to the variable.*)

* Adds `Symbol.kind` field (commented) and the data structure which will

populate it: `Kind` which is an enum of freevar, cellvar,

implicit_global, and implicit_local. **Not yet populated**. (*TODO: a

second pass over the scope (or the ast?) will observe the

`MARKED_GLOBAL` and `MARKED_NONLOCAL` flags to populate this field. When

that's added, we'll uncomment the field.*)

* Adds a few tests that the `IS_DEFINED` and `IS_USED` fields are

correctly set and/or merged:

* Unit test that subsequent calls to `add_or_update_symbol` will merge

the flag arguments.

* Unit test that in the statement `x = foo`, the variable `foo` is

considered used but not defined.

* Unit test that in the statement `from bar import foo`, the variable

`foo` is considered defined but not used.

---------

Co-authored-by: Carl Meyer <carl@astral.sh>

## Summary

This PR adds a basic README for the `ruff_python_parser` crate and

updates the CONTRIBUTING docs with the fuzzer and benchmark section.

Additionally, it also updates some inline documentation within the

parser crate and splits the `parse_program` function into

`parse_single_expression` and `parse_module` which will be called by

matching against the `Mode`.

This PR doesn't go into too much internal detail around the parser logic

due to the following reasons:

1. Where should the docs go? Should it be as a module docs in `lib.rs`

or in README?

2. The parser is still evolving and could include a lot of refactors

with the future work (feedback loop and improved error recovery and

resilience)

---------

Co-authored-by: Alex Waygood <Alex.Waygood@Gmail.com>

## Summary

`cargo build --release` currently fails to compile on `main`:

<details>

```

error[E0599]: no method named `hit` found for struct `ReleaseStatistics` in the current scope

--> crates/red_knot/src/cache.rs:22:29

|

22 | self.statistics.hit();

| ^^^ method not found in `ReleaseStatistics`

...

145 | pub struct ReleaseStatistics;

| ---------------------------- method `hit` not found for this struct

error[E0599]: no method named `miss` found for struct `ReleaseStatistics` in the current scope

--> crates/red_knot/src/cache.rs:25:29

|

25 | self.statistics.miss();

| ^^^^ method not found in `ReleaseStatistics`

...

145 | pub struct ReleaseStatistics;

| ---------------------------- method `miss` not found for this struct

error[E0599]: no method named `hit` found for struct `ReleaseStatistics` in the current scope

--> crates/red_knot/src/cache.rs:36:33

|

36 | self.statistics.hit();

| ^^^ method not found in `ReleaseStatistics`

...

145 | pub struct ReleaseStatistics;

| ---------------------------- method `hit` not found for this struct

error[E0599]: no method named `miss` found for struct `ReleaseStatistics` in the current scope

--> crates/red_knot/src/cache.rs:41:33

|

41 | self.statistics.miss();

| ^^^^ method not found in `ReleaseStatistics`

...

145 | pub struct ReleaseStatistics;

| ---------------------------- method `miss` not found for this struct

```

</details>

This is because in a release build, `CacheStatistics` is a type alias

for `ReleaseStatistics`, and `ReleaseStatistics` doesn't have `hit()` or

`miss()` methods. (In a debug build, `CacheStatistics` is a type alias

for `DebugStatistics`, which _does_ have those methods.)

Possibly we could make this less likely to happen in the future by

making both structs implement a common trait instead of using type

aliases that vary depending on whether it's a debug build or not? For

now, though, this PR just brings the two structs in sync w.r.t. the

methods they expose.

## Test Plan

`cargo build --release` now once again compiles for me locally

## Summary

This PR adds an override to the fixer to ensure that we apply any

`redefined-while-unused` fixes prior to `unused-import`.

Closes https://github.com/astral-sh/ruff/issues/10905.

## Summary

Implement duplicate code detection as part of `RUF100`, mirroring the

behavior of `flake8-noqa` (`NQA005`) mentioned in #850. The idea to

merge the rule into `RUF100` was suggested by @MichaReiser

https://github.com/astral-sh/ruff/pull/10325#issuecomment-2025535444.

## Test Plan

Test cases were added to the fixture.

This syntax wasn't "deprecated" in Python 3; it was removed.

I started looking at this rule because I was curious how Ruff could even

detect this without a Python 2 parser. Then I realized that

"print >> f, x" is actually valid Python 3 syntax: it creates a tuple

containing a right-shifted version of the print function.

## Summary

Based on discussion in #10850.

As it stands today `RUF100` will attempt to replace code redirects with

their target codes even though this is not the "goal" of `RUF100`. This

behavior is confusing and inconsistent, since code redirects which don't

otherwise violate `RUF100` will not be updated. The behavior is also

undocumented. Additionally, users who want to use `RUF100` but do not

want to update redirects have no way to opt out.

This PR explicitly detects redirects with a new rule `RUF101` and

patches `RUF100` to keep original codes in fixes and reporting.

## Test Plan

Added fixture.

## Summary

Closes#10985.

The server now supports a custom TOML configuration file as a client

setting. The setting must be an absolute path to a file. If the file is

called `pyproject.toml`, the server will attempt to parse it as a

pyproject file - otherwise, it will attempt to parse it as a `ruff.toml`

file, even if the file has a name besides `ruff.toml`.

If an option is set in both the custom TOML configuration file and in

the client settings directly, the latter will be used.

## Test Plan

1. Create a `ruff.toml` file outside of the workspace you are testing.

Set an option that is different from the one in the configuration for

your test workspace.

2. Set the path to the configuration in NeoVim:

```lua

require('lspconfig').ruff.setup {

init_options = {

settings = {

configuration = "absolute/path/to/your/configuration"

}

}

}

```

3. Confirm that the option in the configuration file is used, regardless

of what the option is set to in the workspace configuration.

4. Add the same option, with a different value, to the NeoVim

configuration directly. For example:

```lua

require('lspconfig').ruff.setup {

init_options = {

settings = {

configuration = "absolute/path/to/your/configuration",

lint = {

select = []

}

}

}

}

```

5. Confirm that the option set in client settings is used, regardless of

the value in either the custom configuration file or in the workspace

configuration.

## Summary

This PR fixes the bug where the formatter would format an f-string and

could potentially change the AST.

For a triple-quoted f-string, the element can't be formatted into

multiline if it has a format specifier because otherwise the newline

would be treated as part of the format specifier.

Given the following f-string:

```python

f"""aaaaaaaaaaaaaaaa bbbbbbbbbbbbbbbbbb ccccccccccc {

variable:.3f} ddddddddddddddd eeeeeeee"""

```

The formatter sees that the f-string is already multiline so it assumes

that it can contain line breaks i.e., broken into multiple lines. But,

in this specific case we can't format it as:

```python

f"""aaaaaaaaaaaaaaaa bbbbbbbbbbbbbbbbbb ccccccccccc {

variable:.3f

} ddddddddddddddd eeeeeeee"""

```

Because the format specifier string would become ".3f\n", which is not

the original string (`.3f`).

If the original source code already contained a newline, they'll be

preserved. For example:

```python

f"""aaaaaaaaaaaaaaaa bbbbbbbbbbbbbbbbbb ccccccccccc {

variable:.3f

} ddddddddddddddd eeeeeeee"""

```

The above will be formatted as:

```py

f"""aaaaaaaaaaaaaaaa bbbbbbbbbbbbbbbbbb ccccccccccc {variable:.3f

} ddddddddddddddd eeeeeeee"""

```

Note that the newline after `.3f` is part of the format specifier which

needs to be preserved.

The Python version is irrelevant in this case.

fixes: #10040

## Test Plan

Add some test cases to verify this behavior.

## Summary

This is intended to address

https://github.com/astral-sh/ruff-vscode/issues/425, and is a follow-up

to https://github.com/astral-sh/ruff/pull/11062.

A new client setting is now supported by the server,

`prioritizeFileConfiguration`. This is a boolean setting (default:

`false`) that, if set to `true`, will instruct the configuration

resolver to prioritize file configuration (aka discovered TOML files)

over configuration passed in by the editor.

A corresponding extension PR has been opened, which makes this setting

available for VS Code:

https://github.com/astral-sh/ruff-vscode/pull/457.

## Test Plan

To test this with VS Code, you'll need to check out [the VS Code

PR](https://github.com/astral-sh/ruff-vscode/pull/457) that adds this

setting.

The test process is similar to

https://github.com/astral-sh/ruff/pull/11062, but in scenarios where the

editor configuration would take priority over file configuration, file

configuration should take priority.

## Summary

Resolves#11102

The error stems from these lines

f5c7a62aa6/crates/ruff_linter/src/noqa.rs (L697-L702)

I don't really understand the purpose of incrementing the last index,

but it makes the resulting range invalid for indexing into `contents`.

For now I just detect if the index is too high in `blanket_noqa` and

adjust it if necessary.

## Test Plan

Created fixture from issue example.

## Summary

This PR updates the playground to display the AST even if it contains a

syntax error. This could be useful for development and also to give a

quick preview of what error recovery looks like.

Note that not all recovery is correct but this allows us to iterate

quickly on what can be improved.

## Test Plan

Build the playground locally and test it.

<img width="1688" alt="Screenshot 2024-04-25 at 21 02 22"

src="https://github.com/astral-sh/ruff/assets/67177269/2b94934c-4f2c-4a9a-9693-3d8460ed9d0b">

## Summary

Fixes#11114.

As part of the `onClose` handler, we publish an empty array of

diagnostics for the document being closed, similar to

[`ruff-lsp`](187d7790be/ruff_lsp/server.py (L459-L464)).

This prevent phantom diagnostics from lingering after a document is

closed. We'll only do this if the client doesn't support pull

diagnostics, because otherwise clearing diagnostics is their

responsibility.

## Test Plan

Diagnostics should no longer appear for a document in the Problems tab

after the document is closed.

## Summary

This allows `raise from` in BLE001.

```python

try:

...

except Exception as e:

raise ValueError from e

```

Fixes#10806

## Test Plan

Test case added.

## Summary

Continuation of https://github.com/astral-sh/ruff/pull/9444.

> When the formatter is fully cached, it turns out we actually spend

meaningful time mapping from file to `Settings` (since we use a

hierarchical approach to settings). Using `matchit` rather than

`BTreeMap` improves fully-cached performance by anywhere from 2-5%

depending on the project, and since these are all implementation details

of `Resolver`, it's minimally invasive.

`matchit` supports escaping routing characters so this change should now

be fully compatible.

## Test Plan

On my machine I'm seeing a ~3% improvement with this change.

```

hyperfine --warmup 20 -i "./target/release/main format ../airflow" "./target/release/ruff format ../airflow"

Benchmark 1: ./target/release/main format ../airflow

Time (mean ± σ): 58.1 ms ± 1.4 ms [User: 63.1 ms, System: 66.5 ms]

Range (min … max): 56.1 ms … 62.9 ms 49 runs

Benchmark 2: ./target/release/ruff format ../airflow

Time (mean ± σ): 56.6 ms ± 1.5 ms [User: 57.8 ms, System: 67.7 ms]

Range (min … max): 54.1 ms … 63.0 ms 51 runs

Summary

./target/release/ruff format ../airflow ran

1.03 ± 0.04 times faster than ./target/release/main format ../airflow

```

<!--

Thank you for contributing to Ruff! To help us out with reviewing,

please consider the following:

- Does this pull request include a summary of the change? (See below.)

- Does this pull request include a descriptive title?

- Does this pull request include references to any relevant issues?

-->

## Summary

Add support for hover menu to ruff_server, as requested in

[10595](https://github.com/astral-sh/ruff/issues/10595).

Majority of new code is in hover.rs.

I reused the regex from ruff-lsp's implementation. Also reused the

format_rule_text function from ruff/src/commands/rule.rs

Added capability registration in server.rs, and added the handler to

api.rs.

## Test Plan

Tested in NVIM v0.10.0-dev-2582+g2a8cef6bd, configured with lspconfig

using the default options (other than cmd pointing to my test build,

with options "server" and "--preview"). OS: Ubuntu 24.04, kernel

6.8.0-22.

---------

Co-authored-by: Jane Lewis <me@jane.engineering>

## Summary

This is a follow-up to https://github.com/astral-sh/ruff/pull/10984 that

implements configuration resolution for editor configuration. By 'editor

configuration', I'm referring to the client settings that correspond to

Ruff configuration/options, like `preview`, `select`, and so on. These

will be combined with 'project configuration' (configuration taken from

project files such as `pyproject.toml`) to generate the final linter and

formatter settings used by `RuffSettings`. Editor configuration takes

priority over project configuration.

In a follow-up pull request, I'll implement a new client setting that

allows project configuration to override editor configuration, as per

[this issue](https://github.com/astral-sh/ruff-vscode/issues/425).

## Review guide

The first commit, e38966d8843becc7234fa7d46009c16af4ba41e9, is just

doing re-arrangement so that we can pass the right things to

`RuffSettings::resolve`. The actual resolution logic is in the second

commit, 0eec9ee75c10e5ec423bd9f5ce1764f4d7a5ad86. It might help to look

at these comments individually since the diff is rather messy.

## Test Plan

For the settings to show up in VS Code, you'll need to checkout this

branch: https://github.com/astral-sh/ruff-vscode/pull/456.

To test that the resolution for a specific setting works as expected,

run through the following scenarios, setting it in project and editor

configuration as needed:

| Set in project configuration? | Set in editor configuration? |

Expected Outcome |

|-------------------------------|--------------------------------------------------|------------------------------------------------------------------------------------------|

| No | No | The editor should behave as if the setting was set to its

default value. |

| Yes | No | The editor should behave as if the setting was set to the

value in project configuration. |

| No | Yes | The editor should behave as if the setting was set to the

value in editor configuration. |

| Yes | Yes (but distinctive from project configuration) | The editor

should behave as if the setting was set to the value in editor

configuration. |

An exception to this is `extendSelect`, which does not have an analog in

TOML configuration. Instead, you should verify that `extendSelect`

amends the `select` setting. If `select` is set in both editor and

project configuration, `extendSelect` will only append to the `select`

value in editor configuration, so make sure to un-set it there if you're

testing `extendSelect` with `select` in project configuration.

## Summary

This PR refactors unary expression parsing with the following changes:

* Ability to get `OperatorPrecedence` from a unary operator (`UnaryOp`)

* Implement methods on `TokenKind`

* Add `as_unary_operator` which returns an `Option<UnaryOp>`

* Add `as_unary_arithmetic_operator` which returns an `Option<UnaryOp>`

(used for pattern parsing)

* Rename `is_unary` to `is_unary_arithmetic_operator` (used in the

linter)

resolves: #10752

## Test Plan

Verify that the existing test cases pass, no ecosystem changes, run the

Python based fuzzer on 3000 random inputs and run it on dozens of

open-source repositories.

## Summary

This PR refactors the binary expression parsing in a way to make it

readable and easy to understand. It draws inspiration from the suggested

edits in the linked messages in #10752.

### Changes

* Ability to get the precedence of an operator

* From a boolean operator (`BinOp`) to `OperatorPrecedence`

* From a binary operator (`Operator`) to `OperatorPrecedence`

* No comparison operator because all of them have the same precedence

* Implement methods on `TokenKind` to convert it to an appropriate

operator enum

* Add `as_boolean_operator` which returns an `Option<BoolOp>`

* Add `as_binary_operator` which returns an `Option<Operator>`

* No `as_comparison_operator` because it requires lookahead and I'm not

sure if `token.as_comparison_operator(peek)` is a good way to implement

it

* Introduce `BinaryLikeOperator`

* Constructed from two tokens using the methods from the second point

* Add `precedence` method using the conversion methods mentioned in the

first point

* Make most of the functions in `TokenKind` private to the module

* Use `self` instead of `&self` for `TokenKind`

fixes: #11072

## Test Plan

Refer #11088

## Summary

This PR does a few things but the main change is that is makes

associativity a property of operator precedence.

1. Rename `Precedence` -> `OperatorPrecedence`

2. Rename `parse_expression_with_precedence` ->

`parse_binary_expression_or_higher`

3. Move `current_binding_power` to `OperatorPrecedence::try_from_tokens`

[^1]

4. Add a `OperatorPrecedence::is_right_associative` method

5. Move from `increment_precedence` to using `<=` / `<` to check if the

parsing loop needs to stop [^2]

[^1]: Another alternative would be to have two separate methods to avoid

lookahead as it's required only for once case (`not in`). So,

`try_from_current_token(current).or_else(|| try_from_next_token(current,

peek))`

[^2]: This will allow us to easily make the refactors mentioned in

#10752

## Test Plan

Make sure the precedence parsing algorithm is still correct by running

the test suite, fuzz testing it and running it against a dozen or so

open-source repositories.

## Summary

This PR adds a new `ExpressionContext` struct which is used in

expression parsing.

This solves the following problem:

1. Allowing starred expression with different precedence

2. Allowing yield expression in certain context

3. Remove ambiguity with `in` keyword when parsing a `for ... in`

statement

For context, (1) was solved by adding `parse_star_expression_list` and

`parse_star_expression_or_higher` in #10623, (2) was solved by by adding

`parse_yield_expression_or_else` in #10809, and (3) was fixed in #11009.

All of the mentioned functions have been removed in favor of the context

flags.

As mentioned in #11009, an ideal solution would be to implement an

expression context which is what this PR implements. This is passed

around as function parameter and the call stack is used to automatically

reset the context.

### Recovery

How should the parser recover if the target expression is invalid when

an expression can consume the `in` keyword?

1. Should the `in` keyword be part of the target expression?

2. Or, should the expression parsing stop as soon as `in` keyword is

encountered, no matter the expression?

For example:

```python

for yield x in y: ...

# Here, should this be parsed as

for (yield x) in (y): ...

# Or

for (yield x in y): ...

# where the `in iter` part is missing

```

Or, for binary expression parsing:

```python

for x or y in z: ...

# Should this be parsed as

for (x or y) in z: ...

# Or

for (x or y in z): ...

# where the `in iter` part is missing

```

This need not be solved now, but is very easy to change. For context

this PR does the following:

* For binary, comparison, and unary expressions, stop at `in`

* For lambda, yield expressions, consume the `in`

## Test Plan

1. Add test cases for the `for ... in` statement and verify the

snapshots

2. Make sure the existing test suite pass

3. Run the fuzzer for around 3000 generated source code

4. Run the updated logic on a dozen or so open source repositories

(codename "parser-checkouts")

## Summary

Fixes#11059

Several major editors don't support [pull

diagnostics](https://microsoft.github.io/language-server-protocol/specifications/lsp/3.17/specification/#textDocument_pullDiagnostics),

a method of sending diagnostics to the client that was introduced in

version `0.3.17` of the specification. Until now, `ruff server` has only

used pull diagnostics, which resulted in diagnostics not being available

on Neovim and Helix, which don't support pull diagnostics yet (though

Neovim `10.0` will have support for this).

`ruff server` will now utilize the older method of sending diagnostics,

known as 'publish diagnostics', when pull diagnostics aren't supported

by the client. This involves re-linting a document every time it is

opened or modified, and then sending the diagnostics generated from that

lint to the client via the `textDocument/publishDiagnostics`

notification.

## Test Plan

The easiest way to test that this PR works is to check if diagnostics

show up on Neovim `<=0.9`.

## Summary

Fixes#10463

Add `FURB192` which detects violations like this:

```python

# Bad

a = sorted(l)[0]

# Good

a = min(l)

```

There is a caveat that @Skylion007 has pointed out, which is that

violations with `reverse=True` technically aren't compatible with this

change, in the edge case where the unstable behavior is intended. For

example:

```python

from operator import itemgetter

data = [('red', 1), ('blue', 1), ('red', 2), ('blue', 2)]

min(data, key=itemgetter(0)) # ('blue', 1)

sorted(data, key=itemgetter(0))[0] # ('blue', 1)

sorted(data, key=itemgetter(0), reverse=True)[-1] # ('blue, 2')

```

This seems like a rare edge case, but I can make the `reverse=True`

fixes unsafe if that's best.

## Test Plan

This is unit tested.

## References

https://github.com/dosisod/refurb/pull/333/files

---------

Co-authored-by: Charlie Marsh <charlie.r.marsh@gmail.com>

## Summary

The `operator.itemgetter` behavior changes where there's more than one

argument, such that `operator.itemgetter(0)` yields `r[0]`, rather than

`(r[0],)`.

Closes https://github.com/astral-sh/ruff/issues/11075.

## Summary

There is no class `integer` in python, nor is there a type `integer`, so

I updated the docs to remove the backticks on these references, such

that it is the representation of an integer, and not a reference.

## Summary

Move `blanket-noqa` rule from the token checker to the noqa checker.

This allows us to make use of the line directives already computed in

the noqa checker.

## Test Plan

Verified test results are unchanged.

Resolves#10187

<details>

<summary>Old PR description; accurate through commit e86dd7d; probably

best to leave this fold closed</summary>

## Description of change

In the case of a printf-style format string with only one %-placeholder

and a variable at right (e.g. `"%s" % var`):

* The new behavior attempts to dereference the variable and then match

on the bound expression to distinguish between a 1-tuple (fix), n-tuple

(bug 🐛), or a non-tuple (fix). Dereferencing is via

`analyze::typing::find_binding_value`.

* If the variable cannot be dereferenced, then the type-analysis routine

is called to distinguish only tuple (no-fix) or non-tuple (fix). Type

analysis is via `analyze::typing::is_tuple`.

* If any of the above fails, the rule still fires, but no fix is

offered.

## Alternatives

* If the reviewers think that singling out the 1-tuple case is too

complicated, I will remove that.

* The ecosystem results show that no new fixes are detected. So I could

probably delete all the variable dereferencing code and code that tries

to generate fixes, tbh.

## Changes to existing behavior

**All the previous rule-firings and fixes are unchanged except for** the

"false negatives" in

`crates/ruff_linter/resources/test/fixtures/pyupgrade/UP031_1.py`. Those

previous "false negatives" are now true positives and so I moved them to

`crates/ruff_linter/resources/test/fixtures/pyupgrade/UP031_0.py`.

<details>

<summary>Existing false negatives that are now true positives</summary>

```

crates/ruff_linter/resources/test/fixtures/pyupgrade/UP031_0.py:134:1: UP031 Use format specifiers instead of percent format

|

133 | # UP031 (no longer false negatives)

134 | 'Hello %s' % bar

| ^^^^^^^^^^^^^^^^ UP031

135 |

136 | 'Hello %s' % bar.baz

|

= help: Replace with format specifiers

crates/ruff_linter/resources/test/fixtures/pyupgrade/UP031_0.py:136:1: UP031 Use format specifiers instead of percent format

|

134 | 'Hello %s' % bar

135 |

136 | 'Hello %s' % bar.baz

| ^^^^^^^^^^^^^^^^^^^^ UP031

137 |

138 | 'Hello %s' % bar['bop']

|

= help: Replace with format specifiers

crates/ruff_linter/resources/test/fixtures/pyupgrade/UP031_0.py:138:1: UP031 Use format specifiers instead of percent format

|

136 | 'Hello %s' % bar.baz

137 |

138 | 'Hello %s' % bar['bop']

| ^^^^^^^^^^^^^^^^^^^^^^^ UP031

|

= help: Replace with format specifiers

```

One of them newly offers a fix.

```

# UP031 (no longer false negatives)

-'Hello %s' % bar

+'Hello {}'.format(bar)

```

This fix occurs because the new code dereferences `bar` to where it was

defined earlier in the file as a non-tuple:

```python

bar = {"bar": y}

```

---

</details>

## Behavior requiring new tests

Additionally, we now handle a few cases that we didn't previously test.

These cases are when a string has a single %-placeholder and the

righthand operand to the modulo operator is a variable **which can be

dereferenced.** One of those was shown in the previous section (the

"dereference non-tuple" case).

<details>

<summary>New cases handled</summary>

```

crates/ruff_linter/resources/test/fixtures/pyupgrade/UP031_0.py:126:1: UP031 [*] Use format specifiers instead of percent format

|

125 | t1 = (x,)

126 | "%s" % t1

| ^^^^^^^^^ UP031

127 | # UP031: deref t1 to 1-tuple, offer fix

|

= help: Replace with format specifiers

crates/ruff_linter/resources/test/fixtures/pyupgrade/UP031_0.py:130:1: UP031 Use format specifiers instead of percent format

|

129 | t2 = (x,y)

130 | "%s" % t2

| ^^^^^^^^^ UP031

131 | # UP031: deref t2 to n-tuple, this is a bug

|

= help: Replace with format specifiers

```

One of these offers a fix.

```

t1 = (x,)

-"%s" % t1

+"{}".format(t1[0])

# UP031: deref t1 to 1-tuple, offer fix

```

The other doesn't offer a fix because it's a bug.

---

</details>

---

</details>

## Changes to existing behavior

In the case of a string with a single %-placeholder and a single

ambiguous righthand argument to the modulo operator, (e.g. `"%s" % var`)

the rule now fires and offers a fix. We explain about this in the "fix

safety" section of the updated documentation.

## Documentation changes

I swapped the order of the "known problems" and the "examples" sections

so that the examples which describe the rule are first, before the

exceptions to the rule are described. I also tweaked the language to be

more explicit, as I had trouble understanding the documentation at

first. The "known problems" section is now "fix safety" but the content

is largely similar.

The diff of the documentation changes looks a little difficult unless

you look at the individual commits.

## Summary

I happened to notice that we box `TypeParams` on `StmtClassDef` but not

on `StmtFunctionDef` and wondered why, since `StmtFunctionDef` is bigger

and sets the size of `Stmt`.

@charliermarsh found that at the time we started boxing type params on

classes, classes were the largest statement type (see #6275), but that's

no longer true.

So boxing type-params also on functions reduces the overall size of

`Stmt`.

## Test Plan

The `<=` size tests are a bit irritating (since their failure doesn't

tell you the actual size), but I manually confirmed that the size is

actually 120 now.

Occasionally you intentionally have iterables of differing lengths. The

rule permits this by explicitly adding `strict=False`, but this was not

documented.

## Summary

The rule does not currently document how to avoid it when having

differing length iterables is intentional. This PR adds that to the rule

documentation.

## Summary

This fixes a bug where the parser would panic when there is a "gap" in

the token source.

What's a gap?

The reason it's `<=` instead of just `==` is because there could be

whitespaces between

the two tokens. For example:

```python

# last token end

# | current token (newline) start

# v v

def foo \n

# ^

# assume there's trailing whitespace here

```

Or, there could tokens that are considered "trivia" and thus aren't

emitted by the token

source. These are comments and non-logical newlines. For example:

```python

# last token end

# v

def foo # comment\n

# ^ current token (newline) start

```

In either of the above cases, there's a "gap" between the end of the

last token and start

of the current token.

## Test Plan

Add test cases and update the snapshots.

## Summary

This PR adds a new `Clause::Case` and uses it to parse the body of a

`case` block. Earlier, it was using `Match` which would give an

incorrect error message like:

```

|

1 | match subject:

2 | case 1:

3 | case 2: ...

| ^^^^ Syntax Error: Expected an indented block after `match` statement

|

```

## Test Plan

Add test case and update the snapshot.

Add pylint rule invalid-hash-returned (PLE0309)

See https://github.com/astral-sh/ruff/issues/970 for rules

Test Plan: `cargo test`

TBD: from the description: "Strictly speaking `bool` is a subclass of

`int`, thus returning `True`/`False` is valid. To be consistent with

other rules (e.g.

[PLE0305](https://github.com/astral-sh/ruff/pull/10962)

invalid-index-returned), ruff will raise, compared to pylint which will

not raise."

## Summary

This PR fixes the bug in with items parsing where it would fail to

recognize that the parenthesized expression is part of a large binary

expression.

## Test Plan

Add test cases and verified the snapshots.

## Summary

This PR fixes the bug in parenthesized with items parsing where the `if`

expression would result into a syntax error.

The reason being that once we identify that the ambiguous left

parenthesis belongs to the context expression, the parser converts the

parsed with item into an equivalent expression. Then, the parser

continuous to parse any postfix expressions. Now, attribute, subscript,

and call are taken into account as they're grouped in

`parse_postfix_expression` but `if` expression has it's own parsing

function.

Use `parse_if_expression` once all postfix expressions have been parsed.

Ideally, I think that `if` could be included in postfix expression

parsing as they can be chained as well (`x if True else y if True else

z`).

## Test Plan

Add test cases and verified the snapshots.

## Summary

This PR fixes a bug in the new parser which involves the parser context

w.r.t. for statement. This is specifically around the `in` keyword which

can be present in the target expression and shouldn't be considered to

be part of the `for` statement header. Ideally it should use a context

which is passed between functions, thus using a call stack to set /

unset a specific variant which will be done in a follow-up PR as it

requires some amount of refactor.

## Test Plan

Add test cases and update the snapshots.

(Supersedes #9152, authored by @LaBatata101)

## Summary

This PR replaces the current parser generated from LALRPOP to a

hand-written recursive descent parser.

It also updates the grammar for [PEP

646](https://peps.python.org/pep-0646/) so that the parser outputs the

correct AST. For example, in `data[*x]`, the index expression is now a

tuple with a single starred expression instead of just a starred

expression.

Beyond the performance improvements, the parser is also error resilient

and can provide better error messages. The behavior as seen by any

downstream tools isn't changed. That is, the linter and formatter can

still assume that the parser will _stop_ at the first syntax error. This

will be updated in the following months.

For more details about the change here, refer to the PR corresponding to

the individual commits and the release blog post.

## Test Plan

Write _lots_ and _lots_ of tests for both valid and invalid syntax and

verify the output.

## Acknowledgements

- @MichaReiser for reviewing 100+ parser PRs and continuously providing

guidance throughout the project

- @LaBatata101 for initiating the transition to a hand-written parser in

#9152

- @addisoncrump for implementing the fuzzer which helped

[catch](https://github.com/astral-sh/ruff/pull/10903)

[a](https://github.com/astral-sh/ruff/pull/10910)

[lot](https://github.com/astral-sh/ruff/pull/10966)

[of](https://github.com/astral-sh/ruff/pull/10896)

[bugs](https://github.com/astral-sh/ruff/pull/10877)

---------

Co-authored-by: Victor Hugo Gomes <labatata101@linuxmail.org>

Co-authored-by: Micha Reiser <micha@reiser.io>

## Summary

The following client settings have been introduced to the language

server:

* `lint.preview`

* `format.preview`

* `lint.select`

* `lint.extendSelect`

* `lint.ignore`

* `exclude`

* `lineLength`

`exclude` and `lineLength` apply to both the linter and formatter.

This does not actually use the settings yet, but makes them available

for future use.

## Test Plan

Snapshot tests have been updated.

## Summary

A setup guide has been written for NeoVim under a new

`crates/ruff_server/docs/setup` folder, where future setup guides will

also go. This setup guide was adapted from the [`ruff-lsp`

guide](https://github.com/astral-sh/ruff-lsp?tab=readme-ov-file#example-neovim).

---------

Co-authored-by: Dhruv Manilawala <dhruvmanila@gmail.com>

Add pylint rule invalid-length-returned (PLE0303)

See https://github.com/astral-sh/ruff/issues/970 for rules

Test Plan: `cargo test`

TBD: from the description: "Strictly speaking `bool` is a subclass of

`int`, thus returning `True`/`False` is valid. To be consistent with

other rules (e.g.

[PLE0305](https://github.com/astral-sh/ruff/pull/10962)

invalid-index-returned), ruff will raise, compared to pylint which will

not raise."

## Summary

If the user is analyzing a script (i.e., we have no module path), it

seems reasonable to use the script name when trying to identify paths to

objects defined _within_ the script.

Closes https://github.com/astral-sh/ruff/issues/10960.

## Test Plan

Ran:

```shell

check --isolated --select=B008 \

--config 'lint.flake8-bugbear.extend-immutable-calls=["test.A"]' \

test.py

```

On:

```python

class A: pass

def f(a=A()):

pass

```

## Summary

The server now requests a [workspace diagnostic

refresh](https://microsoft.github.io/language-server-protocol/specifications/lsp/3.17/specification/#diagnostic_refresh)

when a configuration file gets changed. This means that diagnostics for

all open files will be automatically re-requested by the client on a

config change.

## Test Plan

You can test this by opening several files in VS Code, setting `select`

in your file configuration to `[]`, and observing that the diagnostics

go away once the file is saved (besides any `Pylance` diagnostics).

Restore it to what it was before, and you should see the diagnostics

automatically return once a save happens.

## Summary

I've added support for configuring the `ruff check` output file via the

environment variable `RUFF_OUTPUT_FILE` akin to #1731.

This is super useful when, e.g., generating a [GitLab code quality

report](https://docs.gitlab.com/ee/ci/testing/code_quality.html#implement-a-custom-tool)

while running Ruff as a pre-commit hook. Usually, `ruff check` should

print its human-readable output to `stdout`, but when run through

`pre-commit` _in a GitLab CI job_ it should write its output in `gitlab`

format to a file. So, to override these two settings only during CI,

environment variables come handy, and `RUFF_OUTPUT_FORMAT` already

exists but `RUFF_OUTPUT_FILE` has been missing.

A (simplified) GitLab CI job config for this scenario might look like

this:

```yaml

pre-commit:

stage: test

image: python

variables:

RUFF_OUTPUT_FILE: gl-code-quality-report.json

RUFF_OUTPUT_FORMAT: gitlab

before_script:

- pip install pre-commit

script:

- pre-commit run --all-files --show-diff-on-failure

artifacts:

reports:

codequality: gl-code-quality-report.json

```

## Test Plan

I tested it manually.

## Summary

This PR switches more callsites of `SemanticModel::is_builtin` to move

over to the new methods I introduced in #10919, which are more concise

and more accurate. I missed these calls in the first PR.