## Summary

This was just a bug in the parser ranges, probably since it was

initially implemented. Given `match n % 3, n % 5: ...`, the "subject"

(i.e., the tuple of two binary operators) was using the entire range of

the `match` statement.

Closes https://github.com/astral-sh/ruff/issues/8091.

## Test Plan

`cargo test`

## Summary

This PR updates our E721 implementation and semantics to match the

updated `pycodestyle` logic, which I think is an improvement.

Specifically, we now allow `type(obj) is int` for exact type

comparisons, which were previously impossible. So now, we're largely

just linting against code like `type(obj) == int`.

This change is gated to preview mode.
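To illustrate the distinction, here is a minimal sketch (the `MyInt` class and the helper names are made up for this example): `type(obj) is int` is an exact type check, while `isinstance` also accepts subclasses.

```python
class MyInt(int):
    """Hypothetical subclass, used only to show the difference."""


def is_exactly_int(obj):
    # Exact type comparison: the form now allowed under the updated E721 semantics.
    return type(obj) is int


def is_int_like(obj):
    # Subclass-aware check: also true for MyInt and bool instances.
    return isinstance(obj, int)


exact_results = [is_exactly_int(3), is_exactly_int(MyInt(3)), is_exactly_int(True)]
loose_results = [is_int_like(3), is_int_like(MyInt(3)), is_int_like(True)]
```

The two checks disagree on subclasses, which is why an exact comparison cannot always be replaced with `isinstance`.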

Closes https://github.com/astral-sh/ruff/issues/7904.

## Test Plan

Updated the test fixture and ensured parity with latest Flake8.

## Summary

This PR updates our documentation for the upcoming formatter release.

Broadly, the documentation is now structured as follows:

- Overview
- Tutorial
- Installing Ruff
- The Ruff Linter
  - Overview
  - `ruff check`
  - Rule selection
  - Error suppression
  - Exit codes
- The Ruff Formatter
  - Overview
  - `ruff format`
  - Philosophy
  - Configuration
  - Format suppression
  - Exit codes
  - Black compatibility
    - Known deviations
- Configuring Ruff
  - pyproject.toml
  - File discovery
  - Configuration discovery
  - CLI
  - Shell autocompletion
- Preview
- Rules
- Settings
- Integrations
  - `pre-commit`
  - VS Code
  - LSP
  - PyCharm
  - GitHub Actions
- FAQ
- Contributing

The major changes include:

- Removing the "Usage" section from the docs, and instead folding that information into "Integrations" and the new Linter and Formatter sections.
- Breaking up "Configuration" into "Configuring Ruff" (for generic configuration), and new Linter- and Formatter-specific sections.
- Updating all example configurations to use `[tool.ruff.lint]` and `[tool.ruff.format]`.

My suggestion is to pull and build the docs locally, and review by

reading them in the browser rather than trying to parse all the code

changes.

Closes https://github.com/astral-sh/ruff/issues/7235.

Closes https://github.com/astral-sh/ruff/issues/7647.

Adds a new `ruff version` sub-command which displays long version

information in the style of `cargo` and `rustc`. We include the number

of commits since the last release tag if it's a development build, in the

style of Python's versioneer.

```

❯ ruff version

ruff 0.1.0+14 (947940e91 2023-10-18)

```

```

❯ ruff version --output-format json

{
  "version": "0.1.0",
  "commit_info": {
    "short_commit_hash": "947940e91",
    "commit_hash": "947940e91269f20f6b3f8f8c7c63f8e914680e80",
    "commit_date": "2023-10-18",
    "last_tag": "v0.1.0",
    "commits_since_last_tag": 14
  }
}

```

```

❯ cargo version

cargo 1.72.1 (103a7ff2e 2023-08-15)

```

## Test plan

I've tested this manually locally, but want to at least add unit tests

for the message formatting. We'd also want to check the next release to

ensure the information is correct.

I checked build behavior with a detached head and branches.

## Future work

We could include rustc and cargo versions from the build, the current

Python version, and other diagnostic information for bug reports.

The `--version` and `-V` output is unchanged. However, we could update

it to display the long ruff version without the rust and cargo versions

(this is what cargo does). We'll need to be careful to ensure this does

not break downstream packages which parse our version string.

```

❯ ruff --version

ruff 0.1.0

```

The LSP should be updated to use `ruff version --output-format json`

instead of parsing `ruff --version`.

This is my first PR and I'm new at rust, so feel free to ask me to

rewrite everything if needed ;)

The rule must be called after deferred lambdas have been visited because

of the last check (whether the lambda parameters are used in the body of

the function that's being called). I didn't know where to do it, so I

did what I could to be able to work on the rule itself:

- added a `ruff_linter::checkers::ast::analyze::lambda` module

- build a vec of visited lambdas in `visit_deferred_lambdas`

- call `analyze::lambda` on the vec after they all have been visited

Building that vec of visited lambdas was necessary so that bindings

could be properly resolved in the case of nested lambdas.

Note that there is an open issue in pylint for some false positives, do

we need to fix that before merging the rule?

https://github.com/pylint-dev/pylint/issues/8192

Also, I did not provide any fixes (yet), maybe we want to avoid merging

new rules without fixes?

## Summary

Checks for lambdas whose body is a function call on the same arguments

as the lambda itself.

### Bad

```python

df.apply(lambda x: str(x))

```

### Good

```python

df.apply(str)

```
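As a runnable illustration of the same idea without pandas (a sketch using `map` in place of `df.apply`; the variable names are made up), the forwarding lambda and the bare callable produce the same result, while a lambda that supplies an extra argument is doing real work:

```python
values = [1, 2, 3]

# Flagged form: the lambda only forwards its argument to `str`.
with_lambda = list(map(lambda x: str(x), values))

# Preferred form: pass the callable directly.
without_lambda = list(map(str, values))

# Not a plain forward: the lambda fixes the second argument of `pow`.
squares = list(map(lambda x: pow(x, 2), values))
```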

## Test Plan

Added unit test and snapshot.

Manually compared pylint and ruff output on pylint's test cases.

## References

- [pylint documentation](https://pylint.readthedocs.io/en/latest/user_guide/messages/warning/unnecessary-lambda.html)
- [pylint implementation](https://github.com/pylint-dev/pylint/blob/main/pylint/checkers/base/basic_checker.py#L521-L587)

- https://github.com/astral-sh/ruff/issues/970

## Summary

The lint checks the number of arguments in a function *definition*, but the message says “function *call*”.

## Test Plan

See what breaks and change the tests

Given `print(*a_list_with_elements, sep="\n")`, we can't remove the

separator (unlike in `print(a, sep="\n")`), since we don't know how many

arguments were provided.
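A small sketch of why the separator matters for starred arguments (the `render` helper is made up for this example and simply captures what `print` would write):

```python
import io


def render(*args, **kwargs):
    # Capture the text that `print` would emit for the given arguments.
    buffer = io.StringIO()
    print(*args, file=buffer, **kwargs)
    return buffer.getvalue()


items = ["a", "b", "c"]

# With a single positional argument, `sep` is never used.
single_with_sep = render("a", sep="\n")
single_without_sep = render("a")

# With an unpacked list, removing `sep` changes the output.
starred_with_sep = render(*items, sep="\n")
starred_without_sep = render(*items)
```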

Closes https://github.com/astral-sh/ruff/issues/8078.

- Add changelog entry for 0.1.1

- Bump version to 0.1.1

- Require preview for fix added in #7967

- Allow duplicate headings in changelog (markdownlint setting)

<!--

Thank you for contributing to Ruff! To help us out with reviewing,

please consider the following:

- Does this pull request include a summary of the change? (See below.)

- Does this pull request include a descriptive title?

- Does this pull request include references to any relevant issues?

-->

## Summary

Fixes https://github.com/astral-sh/ruff/issues/7448

Fixes https://github.com/astral-sh/ruff/issues/7892

I've removed automatic dangling comment formatting, we're doing manual

dangling comment formatting everywhere anyway (the

assert-all-comments-formatted ensures this) and dangling comments would

break the formatting there.

## Test Plan

New test file.

---------

Co-authored-by: Micha Reiser <micha@reiser.io>

Split out of #8044: In preview style, ellipses are also collapsed in non-stub files. This should only affect function/class contexts, since stubs are generally not used for other statements. I've updated our tests to use `pass` instead to reflect this, which makes tracking the preview style changes much easier.

## Summary

Given an expression like `[x for (x) in y]`, we weren't skipping over

parentheses when searching for the `in` between `(x)` and `y`.

Closes https://github.com/astral-sh/ruff/issues/8053.

## Summary

In #6157 a warning was introduced when users use `ruff: noqa`

suppression in-line instead of at the file-level. I had this trigger

today after forgetting about it, and the warning is an excellent

improvement.

I knew immediately what the issue was because I raised it previously,

but on reading the warning I'm not sure it would be so obvious to all

users. This PR extends the error with a short sentence explaining that

line-level suppression should omit the `ruff:` prefix.

## Test Plan

Not sure it's necessary for such a trivial change :)

**Summary** `ruff format --diff` is similar to `ruff format --check`, but in addition to erroring with the list of files that would be formatted, it also shows a diff between the unformatted input and the formatted output.

```console

$ ruff format --diff scratch.py scratch.pyi scratch.ipynb

warning: `ruff format` is not yet stable, and subject to change in future versions.

--- scratch.ipynb

+++ scratch.ipynb

@@ -1,3 +1,4 @@

import numpy

-maths = (numpy.arange(100)**2).sum()

-stats= numpy.asarray([1,2,3,4]).median()

+

+maths = (numpy.arange(100) ** 2).sum()

+stats = numpy.asarray([1, 2, 3, 4]).median()

--- scratch.py

+++ scratch.py

@@ -1,3 +1,3 @@

x = 1

-y=2

+y = 2

z = 3

2 files would be reformatted, 1 file left unchanged

```

With `--diff`, the summary message gets printed to stderr to allow e.g.

`ruff format --diff . > format.patch`.

At the moment, Jupyter notebooks are formatted as code diffs, while everything else is a real diff that could be applied. This means that diffs containing Jupyter notebooks are not real diffs and can't be applied. We could change this to JSON diffs, but they are hard to read. We could also split the diff option into a human-readable diff option, where we deviate from the machine-readable diff constraints, and a proper machine-readable diff output that can be applied and piped into other tools.

To make the tests work, the results (and errors, if any) are sorted

before printing them. Previously, the print order was random, i.e. two

identical runs could have different output.

Open question: Should this go into the markdown docs? Or will this be

subsumed by the integration of the formatter into `ruff check`?

**Test plan** Fixtures for the change and no change cases, including a

jupyter notebook and for file input and stdin.

Fixes #7231

---------

Co-authored-by: Micha Reiser <micha@reiser.io>

**Summary** Insert a newline after nested function and class

definitions, unless there is a trailing own line comment.

We need to e.g. format

```python
if platform.system() == "Linux":
    if sys.version > (3, 10):
        def f():
            print("old")
    else:
        def f():
            print("new")
    f()
```

as

```python
if platform.system() == "Linux":
    if sys.version > (3, 10):

        def f():
            print("old")

    else:

        def f():
            print("new")

    f()
```

even though `f()` is directly preceded by an if statement, not a

function or class definition. See the comments and fixtures for trailing

own line comment handling.

**Test Plan** I checked that the new content of `newlines.py` matches

black's formatting.

---------

Co-authored-by: Charlie Marsh <charlie.r.marsh@gmail.com>

## Summary

When linting, we store a map from file path to fixes, which we then use

to show a fix summary in the printer.

In the printer, we assume that if the map is non-empty, then we have at

least one fix. But this isn't enforced by the fix struct, since you can

have an entry from (file path) to (empty fix table). In practice, this

only bites us when linting from `stdin`, since when linting across

multiple files, we have an `AddAssign` on `Diagnostics` that avoids

adding empty entries to the map. When linting from `stdin`, we create

the map directly, and so it _is_ possible to have a non-empty map that

doesn't contain any fixes, leading to a panic.

This PR introduces a dedicated struct to make these constraints part of

the formal interface.
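As a rough illustration of the invariant (a Python sketch only; the `FixMap` class and its methods are hypothetical and do not mirror the actual Rust struct), the container itself refuses empty fix tables, so "the map is non-empty" implies "there is at least one fix":

```python
class FixMap:
    """Hypothetical stand-in: maps file paths to non-empty fix tables."""

    def __init__(self):
        self._entries = {}

    def insert(self, path, fix_table):
        # Skip empty tables so that a non-empty map always contains a fix.
        if fix_table:
            self._entries[path] = dict(fix_table)

    def is_empty(self):
        return not self._entries

    def total_fixes(self):
        return sum(sum(table.values()) for table in self._entries.values())


fixes = FixMap()
fixes.insert("-", {})  # e.g. linting from stdin with nothing fixed
empty_after_stdin = fixes.is_empty()
fixes.insert("example.py", {"F401": 2})
```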

Closes https://github.com/astral-sh/ruff/issues/8027.

## Test Plan

`cargo test` (notice two failures are removed)


## Summary

In https://github.com/astral-sh/ruff/pull/7968, I introduced a

regression whereby we started to treat imports used _only_ in type

annotation bounds (with `__future__` annotations) as unused.

The root of the issue is that I started using `visit_annotation` for

these bounds. So we'd queue up the bound in the list of deferred type

parameters, then when visiting, we'd further queue it up in the list of

deferred type annotations... Which we'd then never visit, since deferred

type annotations are visited _before_ deferred type parameters.

Anyway, the better solution here is to use a dedicated flag for these,

since they have slightly different behavior than type annotations.

I've also fixed what I _think_ is a bug whereby we previously failed to

resolve `Callable` in:

```python

type RecordCallback[R: Record] = Callable[[R], None]

from collections.abc import Callable

```

IIUC, the values in type aliases should be evaluated lazily, like type

parameters.

Closes https://github.com/astral-sh/ruff/issues/8017.

## Test Plan

`cargo test`

## Summary

The docs for rule B005 (flake8-bugbear) have a typo in one of the examples, which causes confusion about the behavior of the `.strip()` method:

```python

# Wrong output (used in docs)

"text.txt".strip(".txt") # "ex"

# Correct output

"text.txt".strip(".txt") # "e"

```
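For reference, a quick check of the behavior in question: `.strip(".txt")` treats its argument as a set of characters to remove from both ends, not as a suffix. The `removesuffix` comparison is added here for contrast and requires Python 3.9+.

```python
name = "text.txt"

# Strips any of the characters ".", "t", "x" from both ends.
stripped = name.strip(".txt")

# Removes the literal suffix only (Python 3.9+).
without_suffix = name.removesuffix(".txt")
```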

## Summary

Fix a typo in the docs for quote style.

> a = "a string without any quotes"
> b = "It's monday morning"
>
> Ruff will change a to use single quotes when using quote-style = "single". However, a will be unchanged, as converting to single quotes would require the inner ' to be escaped, which leads to less readable code: 'It\'s monday morning'.

It should read "However, **b** will be unchanged".

## Test Plan

N/A.

## Summary

### What it does

This rule triggers an error when a bare `raise` statement is not in an `except` or `finally` block.

### Why is this bad?

If a bare `raise` statement is not in an `except` or `finally` block, there is no active exception to re-raise, so it will fail with a `RuntimeError` exception.

### Example

```python

def validate_positive(x):
    if x <= 0:
        raise

```

Use instead:

```python

def validate_positive(x):
    if x <= 0:
        raise ValueError(f"{x} is not positive")

```
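The failure mode can be reproduced directly. This sketch calls the flagged version of the function and records the exception type that Python raises:

```python
def validate_positive(x):
    if x <= 0:
        raise  # no exception is being handled at this point


try:
    validate_positive(-1)
except RuntimeError as exc:
    caught = type(exc).__name__
else:
    caught = None
```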

## Test Plan

Added unit test and snapshot.

Manually compared ruff and pylint outputs on pylint's tests.

## References

- [pylint documentation](https://pylint.pycqa.org/en/stable/user_guide/messages/error/misplaced-bare-raise.html)
- [pylint implementation](https://github.com/pylint-dev/pylint/blob/main/pylint/checkers/exceptions.py#L339)

See the provided breaking changes note for details.

Removes support for the deprecated `--format` option in the `ruff check`

CLI, `format` inference as `output-format` in the configuration file,

and the `RUFF_FORMAT` environment variable.

The error message for use of `format` in the configuration file could be

better, but would require some awkward serde wrappers and it seems hard

to present the correct schema to the user still.

## Summary

Given `type RecordOrThings = Record | int | str`, the right-hand side

won't be evaluated at runtime. Same goes for `Record` in `type

RecordCallback[R: Record] = Callable[[R], None]`. This PR modifies the

visitation logic to treat them as typing-only.

Closes https://github.com/astral-sh/ruff/issues/7966.

## Summary

Unlike other filepath-based settings, the `cache-dir` wasn't being resolved relative to the project root when specified as a relative path.

Closes https://github.com/astral-sh/ruff/issues/7958.

## Summary

This PR adds a new `cell` field to the JSON output format which

indicates the Notebook cell this diagnostic (and fix) belongs to. It

also updates the location for the diagnostic and fixes as per the

`NotebookIndex`. It will be used in the VSCode extension to display the

diagnostic in the correct cell.

The diagnostic and edit start and end source locations are translated

for the notebook as per the `NotebookIndex`. The end source location for

an edit needs some special handling.

### Edit end location

To understand this, the following context is required:

1. Visible lines in Jupyter Notebook vs JSON array strings: The newline

is part of the string in the JSON format. This means that if there are 3

visible lines in a cell where the last line is empty then the JSON would

contain 2 strings in the source array, both ending with a newline:

**JSON format:**

```json

[
  "# first line\n",
  "# second line\n",
]

```

**Notebook view:**

```python

1 # first line

2 # second line

3

```

2. If an edit needs to remove an entire line including the newline, then

the end location would be the start of the next row.

To remove a statement in the following code:

```python

import os

```

The edit would be:

```

start: row 1, col 1

end: row 2, col 1

```

Now, here's where the problem lies. The notebook index doesn't have any information for row 2 because it doesn't exist in the actual notebook. The newline was added by Ruff to concatenate the source code, and it's removed before writing back. But the edit is computed by looking at that newline.

This means that while translating the end location for an edit belonging to a Notebook, we need to check whether the start and end locations belong to the same cell. If they don't, the end location is the first character of the next row, and we translate it back to the last character of the previous row. Taking the above example, the translated location for the Notebook would be:

```

start: row 1, col 1

end: row 1, col 10

```
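The translation rule can be sketched in Python as follows. This is a simplified illustration, not the actual implementation: `translate_edit_end`, `row_to_cell`, and `row_lengths` are hypothetical names standing in for lookups on the `NotebookIndex`.

```python
def translate_edit_end(start, end, row_to_cell, row_lengths):
    """Translate an edit's end location, given as a 1-indexed (row, col).

    Rows missing from `row_to_cell` do not exist in the notebook.
    """
    start_row, _ = start
    end_row, end_col = end
    same_cell = row_to_cell.get(start_row) == row_to_cell.get(end_row)
    if not same_cell and end_col == 1:
        # The end points at the first character of the next row; move it
        # back to just past the last character of the previous row.
        previous_row = end_row - 1
        return (previous_row, row_lengths[previous_row] + 1)
    return end


# A single-cell notebook containing only `import os` (9 characters).
row_to_cell = {1: 1}
row_lengths = {1: len("import os")}
translated = translate_edit_end((1, 1), (2, 1), row_to_cell, row_lengths)
unchanged = translate_edit_end((1, 1), (1, 7), row_to_cell, row_lengths)
```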

## Test Plan

Add test cases for notebook output in the JSON format and update

existing snapshots.

## Summary

This PR refactors the `NotebookIndex` struct to use `OneIndexed` to make

the

intent of the code clearer.

## Test Plan

Update the existing test case and run `cargo test` to verify the change.

- [x] Verify `--diff` output

- [x] Verify the diagnostics output

- [x] Verify `--show-source` output

**Summary** Handle comment before the default values of function

parameters correctly by inserting a line break instead of space after

the equals sign where required.

```python
def f(
    a = # parameter trailing comment; needs line break
    1,
    b =
    # default leading comment; needs line break
    2,
    c = ( # the default leading can only be end-of-line with parentheses; no line break
        3
    ),
    d = (
        # own line leading comment with parentheses; no line break
        4
    )
)
```

Fixes #7603

**Test Plan** Added the different cases and one more complex case as

fixtures.

## Summary

This PR fixes the bug where the rule `E251` was being triggered on an equals token inside an f-string which was used in the context of debug expressions.

For example, the following was being flagged before the fix:

```python

print(f"{foo = }")

```

But, now it is not. This leads to false negatives such as:

```python

print(f"{foo(a = 1)}")

```
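For context, the whitespace around `=` in a debug expression is significant: it is echoed into the resulting string, so it is not a style choice in the way keyword-argument spacing is. A quick check:

```python
foo = 1

# The `=` specifier echoes the expression text, including surrounding spaces.
spaced = f"{foo = }"
compact = f"{foo=}"
```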

One solution would be to track whether the opening parenthesis was inside an f-string or not. If it was, we can continue flagging until it's closed. If not, we should not flag it.

## Test Plan

Add new test cases and check that they don't raise any false positives.

fixes: #7882

## Summary

`foo(**{})` was an overlooked edge case for `PIE804` which introduced a

crash within the Fix, introduced in #7884.

I've made it so that `foo(**{})` turns into `foo()` when applied with

`--fix`, but is that desired/expected? 🤔 Should we just ignore instead?

## Test Plan

`cargo test`

Closes https://github.com/astral-sh/ruff/issues/7572

Drops formatting specific rules from the default rule set as they

conflict with formatters in general (and in particular, conflict with

our formatter). Most of these rules are in preview, but the removal of

`line-too-long` and `mixed-spaces-and-tabs` is a change to the stable

rule set.

## Example

The following no longer raises `E501`

```

echo "xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx = 1" | ruff check -

```

## Summary

Throughout the codebase, we have this pattern:

```rust
let mut diagnostic = ...
if checker.patch(Rule::UnusedVariable) {
    // Do the fix.
}
diagnostics.push(diagnostic)
```

This was helpful when we computed fixes lazily; however, we now compute

fixes eagerly, and this is _only_ used to ensure that we don't generate

fixes for rules marked as unfixable.

We often forget to add this, and it leads to bugs in enforcing

`--unfixable`.

This PR instead removes all of these checks, moving the responsibility

of enforcing `--unfixable` up to `check_path`. This is similar to how

@zanieb handled the `--extend-unsafe` logic: we post-process the

diagnostics to remove any fixes that should be ignored.
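The post-processing idea can be sketched in Python. This is only an illustration of the approach, not the Rust code in `check_path`; the `Diagnostic` class and `strip_unfixable` helper are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Diagnostic:
    """Hypothetical, simplified diagnostic: a rule code plus an optional fix."""

    rule: str
    fix: Optional[str] = None


def strip_unfixable(diagnostics, unfixable):
    # Keep every diagnostic, but drop the fix when its rule is unfixable.
    return [
        Diagnostic(d.rule, None) if d.rule in unfixable else d
        for d in diagnostics
    ]


diagnostics = [
    Diagnostic("F841", "remove assignment"),
    Diagnostic("F401", "remove import"),
]
processed = strip_unfixable(diagnostics, unfixable={"F841"})
```

Doing this once, after all rules have run, means individual rules no longer need to remember the check.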

## Summary

Add autofix for `PLR1714` using tuples.

If added complexity is desired, we can lean into the `set` part by doing

some kind of builtin check on all of the comparator elements for

starters, since we otherwise don't know if something's hashable.
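A short sketch of why the fix uses a tuple rather than a set (the helper names are made up for this example): tuple membership only needs `==`, whereas building a set hashes every element and fails for unhashable values such as lists.

```python
def in_tuple(value, *candidates):
    # Tuple membership only relies on equality comparisons.
    return value in candidates


def in_set(value, *candidates):
    # Building the set hashes every candidate; unhashable ones raise TypeError.
    return value in set(candidates)


tuple_result = in_tuple([1], [1], [2])

try:
    in_set([1], [1], [2])
    set_failed = False
except TypeError:
    set_failed = True
```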

## Test Plan

`cargo test`, and manually.

**Summary** Remove spaces from import statements such as

```python

import tqdm . tqdm

from tqdm . auto import tqdm

```

See also #7760 for a better solution.

**Test Plan** New fixtures

**Summary** Quoting of f-strings can change if they are triple quoted

and only contain single quotes inside.

Fixes #6841

**Test Plan** New fixtures

---------

Co-authored-by: Dhruv Manilawala <dhruvmanila@gmail.com>


## Summary


closes https://github.com/astral-sh/ruff/issues/7912

## Test Plan


Adds two configuration-file only settings `extend-safe-fixes` and

`extend-unsafe-fixes` which can be used to promote and demote the

applicability of fixes for rules.

Fixes with `Never` applicability cannot be promoted.

## Summary

Given:

```python
baz: Annotated[
    str,
    [qux for qux in foo],
]
```

We treat `baz` as `BindingKind::Annotation`, to ensure that references

to `baz` are marked as unbound. However, we were _also_ treating `qux`

as `BindingKind::Annotation`, which meant that the load in the

comprehension _also_ errored.

Closes https://github.com/astral-sh/ruff/issues/7879.

## Summary

This PR upgrades some rules from "sometimes" to "always" fixes, now that

we're getting ready to ship support in the CLI. The focus here was on

identifying rules for which the diagnostic itself is high-confidence,

and the fix itself is too (assuming that the diagnostic is correct).

This is _unlike_ rules that _may_ be a false positive, like those that

(e.g.) assume an object is a dictionary when you call `.values()` on it.

Specifically, I upgraded:

- A bunch of rules that only apply to `.pyi` files.

- Rules that rewrite deprecated imports or aliases.

- Some other misc. rules, like: `empty-print-string`, `unused-noqa`,

`getattr-with-constant`.

Open to feedback on any of these.

## Summary

Adds autofix to `PYI030`

Closes #7854.

Unsure if the cloning method I chose is the best solution here, feel

free to suggest alternatives!

## Test Plan

`cargo test` as well as manually

## Summary

Restores functionality of #7875 but in the correct place. Closes #7877.

~~I couldn't figure out how to get cargo fmt to work, so hopefully

that's run in CI.~~ Nevermind, figured it out.

## Test Plan

Can see output of json.

## Summary

This PR fixes a bug to disallow f-strings in match pattern literal.

```

literal_pattern ::= signed_number

| signed_number "+" NUMBER

| signed_number "-" NUMBER

| strings

| "None"

| "True"

| "False"

| signed_number: NUMBER | "-" NUMBER

```

Source:

https://docs.python.org/3/reference/compound_stmts.html#grammar-token-python-grammar-literal_pattern

Also,

```console

$ python /tmp/t.py

File "/tmp/t.py", line 4

case "hello " f"{name}":

^^^^^^^^^^^^^^^^^^

SyntaxError: patterns may only match literals and attribute lookups

```
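The CPython behavior can also be checked programmatically with the built-in `compile`. In this sketch, the source string reproduces the pattern from the error output above; the surrounding lines of `/tmp/t.py` are assumed.

```python
# Assumed contents of the test file; only the `case` line comes from the output above.
source = '''
name = "world"
match "hello world":
    case "hello " f"{name}":
        pass
'''

try:
    compile(source, "<example>", "exec")
    rejected = False
except SyntaxError:
    rejected = True
```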

## Test Plan

Update existing test case and accordingly the snapshots. Also, add a new

test case to verify that the parser does raise an error.

## Summary

Fixes #7853.

The old and new source files were reversed in the call to

`TextDiff::from_lines`, so the diff output of the CLI was also reversed.
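The effect of swapping the arguments can be reproduced with Python's `difflib`. This is an analogy only, since the CLI uses a Rust diffing library, and the sample lines are made up:

```python
import difflib

old = ["x = 1\n", "y=2\n"]
new = ["x = 1\n", "y = 2\n"]

# Correct order: original first, modified second.
forward = list(difflib.unified_diff(old, new, "scratch.py", "scratch.py"))

# Swapped order: additions and removals trade places.
backward = list(difflib.unified_diff(new, old, "scratch.py", "scratch.py"))
```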

## Test Plan

Two snapshots were updated in the process, so any reversal should be

caught :)

Closes https://github.com/astral-sh/ruff/issues/7491

Users found it confusing that warnings were displayed when ignoring a

preview rule (which has no effect without `--preview`). While we could

retain the warning with different messaging, I've opted to remove it for

now. With this pull request, we will only warn on `--select` and

`--extend-select` but not `--fixable`, `--unfixable`, `--ignore`, or

`--extend-fixable`.

## Summary

Resolves https://github.com/astral-sh/ruff/issues/7618.

The list of builtin iterators is not exhaustive.

## Test Plan

`cargo test`

```python
a = [1, 2]
examples = [
    enumerate(a),
    filter(lambda x: x, a),
    map(int, a),
    reversed(a),
    zip(a),
    iter(a),
]

for example in examples:
    print(next(example))
```

## Summary

Implement

[`no-single-item-in`](https://github.com/dosisod/refurb/blob/master/refurb/checks/iterable/no_single_item_in.py)

as `single-item-membership-test` (`FURB171`).
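A minimal example of the pattern the rule targets (the function names and the `"admin"` value are made up for illustration):

```python
def is_admin_membership(role):
    # Flagged form: membership test against a single-item container.
    return role in ("admin",)


def is_admin_equality(role):
    # Suggested form: a plain equality comparison.
    return role == "admin"
```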

Uses the helper function `generate_comparison` from the `pycodestyle`

implementations; this function should probably be moved, but I am not

sure where at the moment.

Update: moved it to `ruff_python_ast::helpers`.

Related to #1348.

## Test Plan

`cargo test`

I noticed that `tracing::instrument` wasn't available with only the

`"std"` feature enabled when trying to run `cargo doc -p

ruff_formatter`.

I could be misunderstanding something, but I couldn't even run the tests

for the crate.

```

ruff on ruff-formatter-tracing [$] is 📦 v0.0.292 via 🦀 v1.72.0

❯ cargo test -p ruff_formatter

Compiling ruff_formatter v0.0.0 (/Users/chrispryer/github/ruff/crates/ruff_formatter)

error[E0433]: failed to resolve: could not find `instrument` in `tracing`

--> crates/ruff_formatter/src/printer/mod.rs:57:16

|

57 | #[tracing::instrument(name = "Printer::print", skip_all)]

| ^^^^^^^^^^ could not find `instrument` in `tracing`

|

note: found an item that was configured out

--> /Users/chrispryer/.cargo/registry/src/index.crates.io-6f17d22bba15001f/tracing-0.1.37/src/lib.rs:959:29

|

959 | pub use tracing_attributes::instrument;

| ^^^^^^^^^^

= note: the item is gated behind the `attributes` feature

For more information about this error, try `rustc --explain E0433`.

error: could not compile `ruff_formatter` (lib) due to previous error

warning: build failed, waiting for other jobs to finish...

error: could not compile `ruff_formatter` (lib test) due to previous error

```

Maybe the idea is to keep this crate minimal, but I figured I'd at least

point this out.

## Summary

Document the performance effects of `itertools.starmap`, including that

it is actually slower than comprehensions in Python 3.12.
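For reference, the two forms being compared are equivalent in output (a small sketch with made-up data; it shows equivalence only and makes no timing claim):

```python
from itertools import starmap

pairs = [(2, 3), (4, 2), (10, 1)]

# Hand-written comprehension that unpacks each tuple.
with_comprehension = [pow(base, exp) for base, exp in pairs]

# Equivalent `starmap` call.
with_starmap = list(starmap(pow, pairs))
```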

Closes #7771.

## Test Plan

`python scripts/check_docs_formatted.py`

After working with the previous change in

https://github.com/astral-sh/ruff/pull/7821 I found the names a bit

unclear and their relationship with the user-facing API muddied. Since

the applicability is exposed to the user directly in our JSON output, I

think it's important that these names align with our configuration

options. I've replaced `Manual` or `Never` with `Display` which captures

our intent for these fixes (only for display). Here, we create room for

future levels, such as `HasPlaceholders`, which wouldn't fit into the

`Always`/`Sometimes`/`Never` levels.

Unlike https://github.com/astral-sh/ruff/pull/7819, this retains the

flat enum structure which is easier to work with.

Previously we just omitted diagnostic summaries when using `--fix` or

`--diff` with a stdin file. Now, we still write the summaries to stderr

instead of the main writer (which is generally stdout but could be

changed by `--output-file`).

Rebase of https://github.com/astral-sh/ruff/pull/5119 authored by

@evanrittenhouse with additional refinements.

## Changes

- Adds `--unsafe-fixes` / `--no-unsafe-fixes` flags to `ruff check`

- Violations with unsafe fixes are not shown as fixable unless opted-in

- Fix applicability is respected now

- `Applicability::Never` fixes are no longer applied

- `Applicability::Sometimes` fixes require opt-in

- `Applicability::Always` fixes are unchanged

- Hints for availability of `--unsafe-fixes` added to `ruff check`

output
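The applicability rules listed above can be summarized with a small Python sketch. The enum and `should_apply` function are illustrative only and are not the Rust types used in the implementation.

```python
from enum import Enum


class Applicability(Enum):
    # Names follow the levels listed above; the values are arbitrary.
    NEVER = "never"
    SOMETIMES = "sometimes"
    ALWAYS = "always"


def should_apply(applicability, unsafe_fixes=False):
    if applicability is Applicability.ALWAYS:
        return True  # unchanged behavior
    if applicability is Applicability.SOMETIMES:
        return unsafe_fixes  # requires opt-in via `--unsafe-fixes`
    return False  # `Never` fixes are no longer applied
```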

## Examples

Check hints at hidden unsafe fixes

```

❯ ruff check example.py --no-cache --select F601,W292

example.py:1:14: F601 Dictionary key literal `'a'` repeated

example.py:2:15: W292 [*] No newline at end of file

Found 2 errors.

[*] 1 fixable with the `--fix` option (1 hidden fix can be enabled with the `--unsafe-fixes` option).

```

We could add an indicator for which violations have hidden fixes in the

future.

Check treats unsafe fixes as applicable with opt-in

```

❯ ruff check example.py --no-cache --select F601,W292 --unsafe-fixes

example.py:1:14: F601 [*] Dictionary key literal `'a'` repeated

example.py:2:15: W292 [*] No newline at end of file

Found 2 errors.

[*] 2 fixable with the --fix option.

```

Also can be enabled in the config file

```

❯ cat ruff.toml

unsafe-fixes = true

```

And opted-out per invocation

```

❯ ruff check example.py --no-cache --select F601,W292 --no-unsafe-fixes

example.py:1:14: F601 Dictionary key literal `'a'` repeated

example.py:2:15: W292 [*] No newline at end of file

Found 2 errors.

[*] 1 fixable with the `--fix` option (1 hidden fix can be enabled with the `--unsafe-fixes` option).

```

Diff does not include unsafe fixes

```

❯ ruff check example.py --no-cache --select F601,W292 --diff

--- example.py

+++ example.py

@@ -1,2 +1,2 @@

x = {'a': 1, 'a': 1}

-print(('foo'))

+print(('foo'))

\ No newline at end of file

Would fix 1 error.

```

Unless there is opt-in

```

❯ ruff check example.py --no-cache --select F601,W292 --diff --unsafe-fixes

--- example.py

+++ example.py

@@ -1,2 +1,2 @@

-x = {'a': 1}

-print(('foo'))

+x = {'a': 1, 'a': 1}

+print(('foo'))

\ No newline at end of file

Would fix 2 errors.

```

https://github.com/astral-sh/ruff/pull/7790 will improve the diff

messages following this pull request

Similarly, `--fix` and `--fix-only` require the `--unsafe-fixes` flag to

apply unsafe fixes.

## Related

Replaces #5119

Closes https://github.com/astral-sh/ruff/issues/4185

Closes https://github.com/astral-sh/ruff/issues/7214

Closes https://github.com/astral-sh/ruff/issues/4845

Closes https://github.com/astral-sh/ruff/issues/3863

Addresses https://github.com/astral-sh/ruff/issues/6835

Addresses https://github.com/astral-sh/ruff/issues/7019

Needs follow-up https://github.com/astral-sh/ruff/issues/6962

Needs follow-up https://github.com/astral-sh/ruff/issues/4845

Needs follow-up https://github.com/astral-sh/ruff/issues/7436

Needs follow-up https://github.com/astral-sh/ruff/issues/7025

Needs follow-up https://github.com/astral-sh/ruff/issues/6434

Follow-up #7790

Follow-up https://github.com/astral-sh/ruff/pull/7792

---------

Co-authored-by: Evan Rittenhouse <evanrittenhouse@gmail.com>

## Summary

This PR updates the parser definition to use the precise location when reporting

an invalid f-string conversion flag error.

Taking the following example code:

```python

f"{foo!x}"

```

On earlier versions,

```

Error: f-string: invalid conversion character at byte offset 6

```

Now,

```

Error: f-string: invalid conversion character at byte offset 7

```

This becomes more useful when there's whitespace between `!` and the flag value. Such whitespace is not valid, but we can't detect that yet.

## Test Plan

As mentioned above.

## Summary

This PR resolves an issue raised in

https://github.com/astral-sh/ruff/discussions/7810, whereby we don't fix

an f-string that exceeds the line length _even if_ the resultant code is

_shorter_ than the current code.

As part of this change, I've also refactored and extracted some common

logic we use around "ensuring a fix isn't breaking the line length

rules".
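The check described above could look roughly like the following. This is a sketch under assumptions: `fix_is_acceptable` is a hypothetical name, and width is approximated with `len` (ignoring tabs and wide characters), which may differ from the real logic:

```python
def fix_is_acceptable(original: str, fixed: str, line_length: int) -> bool:
    """Accept a fix if the result fits the limit, or is shorter than the original.

    Hypothetical helper; `len` stands in for a proper display-width measure.
    """
    fits = len(fixed) <= line_length
    shrinks = len(fixed) < len(original)
    return fits or shrinks
```

The second condition is the case from the linked discussion: the fixed line is still too long, but it is no worse than what was there before.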

## Test Plan

`cargo test`

## Summary

The implementation here differs from the non-`stdin` version -- this is

now more consistent.

## Test Plan

```

❯ cat Untitled.ipynb | cargo run -p ruff_cli -- check --stdin-filename Untitled.ipynb --diff -n

Finished dev [unoptimized + debuginfo] target(s) in 0.11s

Running `target/debug/ruff check --stdin-filename Untitled.ipynb --diff -n`

--- Untitled.ipynb:cell 2

+++ Untitled.ipynb:cell 2

@@ -1 +0,0 @@

-import os

--- Untitled.ipynb:cell 4

+++ Untitled.ipynb:cell 4

@@ -1 +0,0 @@

-import sys

```

## Summary

This PR fixes the bug where the formatter would panic if a class/function with

decorators had a suppression comment.

The fix is to use the correct start location to find the `async`/`def`/`class`

keyword when decorators are present, which is the end of the last

decorator.

## Test Plan

Add test cases for the fix and update the snapshots.

- Only trigger for immediately adjacent isinstance() calls with the same

target

- Preserve order of or conditions

Two existing tests changed:

- One was incorrectly reordering the or conditions, and is now correct.

- Another was combining two non-adjacent isinstance() calls. It's safe

enough in that example,

but this isn't safe to do in general, and it feels low-value to come up

with a heuristic for

when it is safe, so it seems better to not combine the calls in that

case.

Fixes https://github.com/astral-sh/ruff/issues/7797
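To make the two constraints concrete (adjacent calls only, order preserved), here is a minimal sketch using Python's `ast` module. `merge_adjacent_isinstance` is a hypothetical function written for illustration; it is not how the rule is implemented in Ruff:

```python
import ast


def merge_adjacent_isinstance(source: str) -> str:
    """Merge runs of adjacent `isinstance(x, T)` calls on the same target in an `or`."""
    expr = ast.parse(source, mode="eval").body
    if not (isinstance(expr, ast.BoolOp) and isinstance(expr.op, ast.Or)):
        return source

    def target_key(node):
        # Comparable key for `isinstance(target, type)` calls, else None.
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id == "isinstance"
            and len(node.args) == 2
            and not node.keywords
        ):
            return ast.dump(node.args[0])
        return None

    merged = []
    run = []  # current run of adjacent calls that share one target

    def flush():
        if len(run) > 1:
            types = ast.Tuple(elts=[call.args[1] for call in run], ctx=ast.Load())
            merged.append(
                ast.Call(func=run[0].func, args=[run[0].args[0], types], keywords=[])
            )
        else:
            merged.extend(run)
        run.clear()

    for value in expr.values:
        key = target_key(value)
        # A non-isinstance operand, or a different target, ends the current run.
        if key is None or (run and target_key(run[0]) != key):
            flush()
        if key is None:
            merged.append(value)
        else:
            run.append(value)
    flush()

    result = merged[0] if len(merged) == 1 else ast.BoolOp(op=ast.Or(), values=merged)
    return ast.unparse(ast.fix_missing_locations(result))
```

Because runs are flushed as soon as a different operand appears, non-adjacent calls are never combined and the relative order of the `or` operands is unchanged.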

## Summary

We now list each changed file when running with `--check`.

Closes https://github.com/astral-sh/ruff/issues/7782.

## Test Plan

```

❯ cargo run -p ruff_cli -- format foo.py --check

Compiling ruff_cli v0.0.292 (/Users/crmarsh/workspace/ruff/crates/ruff_cli)

Finished dev [unoptimized + debuginfo] target(s) in 1.41s

Running `target/debug/ruff format foo.py --check`

warning: `ruff format` is a work-in-progress, subject to change at any time, and intended only for experimentation.

Would reformat: foo.py

1 file would be reformatted

```

## Summary

Check that the sequence type is a list, set, dict, or tuple before

recommending replacing the `enumerate(...)` call with `range(len(...))`.

Document behaviour so users are aware of the type inference limitation

leading to false negatives.

Closes #7656.

## Summary

This PR fixes a bug in the lexer for f-string format spec where it would

consider the `{{` (double curly braces) as an escape pattern.

This is not the case, as is evident from the

[PEP](https://peps.python.org/pep-0701/#how-to-produce-these-new-tokens),

but I missed this part:

> [..]

> * **If in “format specifier mode” (see step 3), an opening brace ({)

or a closing brace (}).**

> * If not in “format specifier mode” (see step 3), an opening brace ({)

or a closing brace (}) that is not immediately followed by another

opening/closing brace.

## Test Plan

Add a test case to verify the fix and update the snapshot.

fixes: #7778

## Summary

Two of the three listed examples were wrong: one was semantically

incorrect, another was _correct_ but not actually within the scope of

the rule.

Good motivation for us to start linting documentation examples :)

Closes https://github.com/astral-sh/ruff/issues/7773.

## Summary

We'll revert back to the crates.io release once it's up-to-date, but

better to get this out now that Python 3.12 is released.

## Test Plan

`cargo test`

## Summary

This PR enables `ruff format` to format Jupyter notebooks.

Most of the work is contained in a new `format_source` method that

formats a generic `SourceKind`, then returns `Some(transformed)` if the

source required formatting, or `None` otherwise.

Closes https://github.com/astral-sh/ruff/issues/7598.

## Test Plan

Ran `cat foo.py | cargo run -p ruff_cli -- format --stdin-filename

Untitled.ipynb`; verified that the console showed a reasonable error:

```console

warning: Failed to read notebook Untitled.ipynb: Expected a Jupyter Notebook, which must be internally stored as JSON, but this file isn't valid JSON: EOF while parsing a value at line 1 column 0

```

Ran `cat Untitled.ipynb | cargo run -p ruff_cli -- format

--stdin-filename Untitled.ipynb`; verified that the JSON output

contained formatted source code.

## Summary

When writing back notebooks via `stdout`, we need to write back the

entire JSON content, not _just_ the fixed source code. Otherwise,

writing the output _back_ to the file will yield an invalid notebook.
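As a sketch of the idea (not the actual implementation), the fixed source is spliced back into the parsed notebook and the whole document is serialized. `render_notebook` and the shape of `fixed_sources` are assumptions made for this example:

```python
import json


def render_notebook(raw: str, fixed_sources: dict) -> str:
    """Return the whole notebook as JSON, with selected cells' sources replaced.

    `fixed_sources` maps a cell index to its fixed source text (hypothetical shape).
    """
    notebook = json.loads(raw)
    for index, source in fixed_sources.items():
        # Notebook cells store `source` as a list of lines (or a single string).
        notebook["cells"][index]["source"] = source.splitlines(keepends=True)
    return json.dumps(notebook, indent=1) + "\n"
```

Everything outside the touched cells (metadata, `nbformat`, other cells) survives the round trip, so the output can be written straight back to the `.ipynb` file.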

Closes https://github.com/astral-sh/ruff/issues/7747

## Test Plan

`cargo test`

## Summary

It turns out that _some_ identifiers can contain newlines --

specifically, dot-delimited import identifiers, like:

```python

import foo\

.bar

```

At present, we print all identifiers verbatim, which causes us to retain

the `\` in the formatted output. This also leads to violating some debug

assertions (see the linked issue, though that's a symptom of this

formatting failure).

This PR adds detection for import identifiers that contain newlines, and

formats them via `text` (slow) rather than `source_code_slice` (fast) in

those cases.
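The fast/slow split can be sketched as follows. `format_dotted_name` is a hypothetical stand-in for the formatter logic, with the early return playing the role of `source_code_slice` and the regex path playing the role of `text`:

```python
import re


def format_dotted_name(text: str) -> str:
    """Return a dotted import name with line continuations removed."""
    if "\n" not in text and "\r" not in text:
        return text  # fast path: reuse the source text verbatim
    # Slow path: drop backslash-newline continuations, then stray whitespace.
    text = re.sub(r"\\(\r\n|\r|\n)", "", text)
    return re.sub(r"\s+", "", text)
```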

Closes https://github.com/astral-sh/ruff/issues/7734.

## Test Plan

`cargo test`

## Summary

There's no way for users to fix this warning if they're intentionally

using an "invalid" PEP 593 annotation, as is the case in CPython. This

is a symptom of having warnings that aren't themselves diagnostics. If

we want this to be user-facing, we should add a diagnostic for it!

## Test Plan

Ran `cargo run -p ruff_cli -- check foo.py -n` on:

```python

from typing import Annotated

Annotated[int]

```

## Summary

If we have, e.g.:

```python

sum((

factor.dims for factor in bases

), [])

```

We generate three edits: two insertions (for the `operator` and

`functools` imports), and then one replacement (for the `sum` call

itself). We need to ensure that the insertions come before the

replacement; otherwise, the edits will appear overlapping and

out-of-order.
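One way to picture the ordering requirement is the sketch below. The `Edit` class and `sort_edits` are hypothetical, and the tie-breaking rule (insertions before replacements at the same start offset) is an assumption for illustration rather than Ruff's exact comparison:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Edit:
    start: int
    end: int
    content: str

    @property
    def is_insertion(self) -> bool:
        # An insertion covers an empty range.
        return self.start == self.end


def sort_edits(edits):
    """Order edits by start offset, placing insertions before replacements."""
    return sorted(edits, key=lambda e: (e.start, not e.is_insertion, e.end))
```

With the `sum` call at the very start of the file, the two import insertions and the replacement all begin at offset 0, and this ordering keeps the insertions first.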

Closes https://github.com/astral-sh/ruff/issues/7718.

## Summary

This PR fixes a bug where if a Windows newline (`\r\n`) character was

escaped, then only the `\r` was consumed and not `\n` leading to an

unterminated string error.
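The corrected behavior can be sketched like this; `skip_escaped_newline` is a hypothetical helper, not the lexer's actual code:

```python
def skip_escaped_newline(source: str, index: int) -> int:
    """Given `index` just past a backslash, skip one escaped newline.

    Returns the index after the newline, treating CRLF as a single unit.
    """
    if source.startswith("\r\n", index):
        return index + 2  # consume both characters of a Windows newline
    if index < len(source) and source[index] in "\r\n":
        return index + 1
    return index
```

The bug corresponds to handling only the second branch: the `\r` is consumed, the `\n` is left behind, and the string then appears to end on that line.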

## Test Plan

Add new test cases to check the newline escapes.

fixes: #7632

## Summary

This PR fixes the bug where the value of a string node type includes the

escaped mac/windows newline character.

Note that the token value still includes them, it's only removed when

parsing the string content.

## Test Plan

Add new test cases for the string node type to check that the escapes

aren't being included in the string value.

fixes: #7723

## Summary

This PR modifies the `line-too-long` and `doc-line-too-long` rules to

ignore lines that are too long due to the presence of a pragma comment

(e.g., `# type: ignore` or `# noqa`). That is, if a line only exceeds

the limit due to the pragma comment, it will no longer be flagged as

"too long". This behavior mirrors that of the formatter, thus ensuring

that we don't flag lines under E501 that the formatter would otherwise

avoid wrapping.

As a concrete example, given a line length of 88, the following would

_no longer_ be considered an E501 violation:

```python

# The string literal is 88 characters, including quotes.

"shape:shape:shape:shape:shape:shape:shape:shape:shape:shape:shape:shape:shape:shape:sh" # type: ignore

```

This, however, would:

```python

# The string literal is 89 characters, including quotes.

"shape:shape:shape:shape:shape:shape:shape:shape:shape:shape:shape:shape:shape:shape:sha" # type: ignore

```

In addition to mirroring the formatter, this also means that adding a

pragma comment (like `# noqa`) won't _cause_ additional violations to

appear (namely, E501). It's very common for users to add a `# type:

ignore` or similar to a line, only to find that they then have to add a

suppression comment _after_ it that wasn't required before, as in `# type:

ignore # noqa: E501`.
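A minimal sketch of the check, using the two example lines above. The `PRAGMA` pattern is an assumed, non-exhaustive approximation, `is_too_long` is a hypothetical name, and a `#` inside a string literal is not handled:

```python
import re

# Assumed, non-exhaustive pragma pattern for illustration.
PRAGMA = re.compile(r"\s*#\s*(type:\s*ignore|noqa)\b.*$", re.IGNORECASE)


def is_too_long(line: str, limit: int = 88) -> bool:
    """Flag a line only if it still exceeds `limit` without its trailing pragma."""
    if len(line) <= limit:
        return False
    return len(PRAGMA.sub("", line)) > limit


ok = '"' + "shape:" * 14 + 'sh"' + " # type: ignore"    # literal is 88 characters
bad = '"' + "shape:" * 14 + 'sha"' + " # type: ignore"  # literal is 89 characters
```

Here `is_too_long(ok)` is false and `is_too_long(bad)` is true, matching the two cases described above.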

Closes https://github.com/astral-sh/ruff/issues/7471.

## Test Plan

`cargo test`

## Summary

This PR fixes the bug where the `NotebookIndex` was not being computed

when using stdin as the input source.

## Test Plan

On `main`, the diagnostic output won't include the cell number when

using stdin, while it'll be included after this fix.

### `main`

```console

$ cat ~/playground/ruff/notebooks/test.ipynb | cargo run --bin ruff -- check --isolated --no-cache - --stdin-filename ~/playground/ruff/notebooks/test.ipynb

/Users/dhruv/playground/ruff/notebooks/test.ipynb:2:8: F401 [*] `math` imported but unused

/Users/dhruv/playground/ruff/notebooks/test.ipynb:7:8: F811 Redefinition of unused `random` from line 1

/Users/dhruv/playground/ruff/notebooks/test.ipynb:8:8: F401 [*] `pprint` imported but unused

/Users/dhruv/playground/ruff/notebooks/test.ipynb:12:4: F632 [*] Use `==` to compare constant literals

/Users/dhruv/playground/ruff/notebooks/test.ipynb:13:38: F632 [*] Use `==` to compare constant literals

Found 5 errors.

[*] 4 potentially fixable with the --fix option.

```

### `dhruv/notebook-index-stdin`

```console

$ cat ~/playground/ruff/notebooks/test.ipynb | cargo run --bin ruff -- check --isolated --no-cache - --stdin-filename ~/playground/ruff/notebooks/test.ipynb

/Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 3:2:8: F401 [*] `math` imported but unused

/Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 5:1:8: F811 Redefinition of unused `random` from line 1

/Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 5:2:8: F401 [*] `pprint` imported but unused

/Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 6:2:4: F632 [*] Use `==` to compare constant literals

/Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 6:3:38: F632 [*] Use `==` to compare constant literals

Found 5 errors.

[*] 4 potentially fixable with the --fix option.

```

## Summary

This PR implements a variety of optimizations to improve performance of

the Eradicate rule, which always shows up in all-rules benchmarks and

bothers me. (These improvements are not hugely important, but it was

kind of a fun Friday thing to spend a bit of time on.)

The improvements include:

- Doing cheaper work first (checking for some explicit substrings

upfront).

- Using `aho-corasick` to speed an exact substring search.

- Merging multiple regular expressions using a `RegexSet`.

- Removing some unnecessary `\s*` and other pieces from the regular

expressions (since we already trim strings before matching on them).
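The general shape of these optimizations can be sketched in Python as below. This is only an analogue: plain `in` checks stand in for the `aho-corasick` substring search, a single alternation stands in for `RegexSet`, and the markers and patterns are invented for the example rather than taken from the rule:

```python
import re

# Hypothetical markers and patterns, chosen only for illustration.
CHEAP_MARKERS = ("(", "=", ":", "import ", "return")
PATTERNS = [
    r"(?:from\s+\S+\s+)?import\s+\S+",
    r"print\(.*\)",
    r"\w+\s*=\s*\S.*",
    r"(?:else|try|finally):",
    r"except\b.*:",
]
# One combined pattern instead of several separate matches.
COMBINED = re.compile("|".join(f"(?:{p})" for p in PATTERNS))


def looks_like_code(comment: str) -> bool:
    # Trim once up front, so the patterns need no leading or trailing `\s*`.
    text = comment.lstrip("#").strip()
    if not any(marker in text for marker in CHEAP_MARKERS):
        return False  # cheap rejection before running any regex
    return COMBINED.fullmatch(text) is not None
```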

## Test Plan

I benchmarked this function in a standalone crate using a variety of

cases. Criterion reports that this version is up to 84% faster, and

most cases are at least 50% faster:

```

Eradicate/Detection/# Warn if we are installing over top of an existing installation. This can

time: [101.84 ns 102.32 ns 102.82 ns]

change: [-77.166% -77.062% -76.943%] (p = 0.00 < 0.05)

Performance has improved.

Found 3 outliers among 100 measurements (3.00%)

3 (3.00%) high mild

Eradicate/Detection/#from foo import eradicate

time: [74.872 ns 75.096 ns 75.314 ns]

change: [-84.180% -84.131% -84.079%] (p = 0.00 < 0.05)

Performance has improved.

Found 1 outliers among 100 measurements (1.00%)

1 (1.00%) high mild

Eradicate/Detection/# encoding: utf8

time: [46.522 ns 46.862 ns 47.237 ns]

change: [-29.408% -28.918% -28.471%] (p = 0.00 < 0.05)

Performance has improved.

Found 7 outliers among 100 measurements (7.00%)

6 (6.00%) high mild

1 (1.00%) high severe

Eradicate/Detection/# Issue #999

time: [16.942 ns 16.994 ns 17.058 ns]

change: [-57.243% -57.064% -56.815%] (p = 0.00 < 0.05)

Performance has improved.

Found 3 outliers among 100 measurements (3.00%)

2 (2.00%) high mild

1 (1.00%) high severe

Eradicate/Detection/# type: ignore

time: [43.074 ns 43.163 ns 43.262 ns]

change: [-17.614% -17.390% -17.152%] (p = 0.00 < 0.05)

Performance has improved.

Found 5 outliers among 100 measurements (5.00%)

3 (3.00%) high mild

2 (2.00%) high severe

Eradicate/Detection/# user_content_type, _ = TimelineEvent.objects.using(db_alias).get_or_create(

time: [209.40 ns 209.81 ns 210.23 ns]

change: [-32.806% -32.630% -32.470%] (p = 0.00 < 0.05)

Performance has improved.

Eradicate/Detection/# this is = to that :(

time: [72.659 ns 73.068 ns 73.473 ns]

change: [-68.884% -68.775% -68.655%] (p = 0.00 < 0.05)

Performance has improved.

Found 9 outliers among 100 measurements (9.00%)

7 (7.00%) high mild

2 (2.00%) high severe

Eradicate/Detection/#except Exception:

time: [92.063 ns 92.366 ns 92.691 ns]

change: [-64.204% -64.052% -63.909%] (p = 0.00 < 0.05)

Performance has improved.

Found 4 outliers among 100 measurements (4.00%)

2 (2.00%) high mild

2 (2.00%) high severe

Eradicate/Detection/#print(1)

time: [68.359 ns 68.537 ns 68.725 ns]

change: [-72.424% -72.356% -72.278%] (p = 0.00 < 0.05)

Performance has improved.

Found 2 outliers among 100 measurements (2.00%)

1 (1.00%) low mild

1 (1.00%) high mild

Eradicate/Detection/#'key': 1 + 1,

time: [79.604 ns 79.865 ns 80.135 ns]

change: [-69.787% -69.667% -69.549%] (p = 0.00 < 0.05)

Performance has improved.

```

## Summary

The parser now uses the raw source code as global context and slices

into it to parse debug text. It turns out we were always passing in the

_old_ source code, so when code was fixed, we were making invalid

accesses. This PR modifies the call to use the _fixed_ source code,

which will always be consistent with the tokens.

Closes https://github.com/astral-sh/ruff/issues/7711.

## Test Plan

`cargo test`

## Summary

This wasn't necessary in the past, since we _only_ applied this rule to

bodies that contained two statements, one of which was a `pass`. Now

that it applies to any `pass` in a block with multiple statements, we

can run into situations in which we remove both passes, and so need to

apply the fixes in isolation.

See:

https://github.com/astral-sh/ruff/issues/7455#issuecomment-1741107573.

## Summary

The markdown documentation was present, but in the wrong place, so was

not displaying on the website. I moved it and added some references.

Related to #2646.

## Test Plan

`python scripts/check_docs_formatted.py`

Previously attempted to repair these tests at

https://github.com/astral-sh/ruff/pull/6992 but I don't think we should

prioritize that and instead I would like to remove this dead code.

## Summary

Extend `unnecessary-pass` (`PIE790`) to trigger on all unnecessary

`pass` statements by checking for `pass` statements in any class or

function body with more than one statement.

Closes #7600.
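The extended check can be approximated with Python's `ast` module. `unnecessary_passes` is a hypothetical function for illustration and covers only the class and function bodies mentioned above:

```python
import ast


def unnecessary_passes(source: str) -> list:
    """Return line numbers of `pass` statements in class or function bodies
    that contain more than one statement."""
    lines = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.ClassDef, ast.FunctionDef, ast.AsyncFunctionDef)):
            if len(node.body) > 1:
                lines.extend(s.lineno for s in node.body if isinstance(s, ast.Pass))
    return sorted(lines)
```

A body consisting of a lone `pass` is left alone, since removing it would produce invalid syntax.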

## Test Plan

`cargo test`

Part of #1646.

## Summary

Implement `S505`

([`weak_cryptographic_key`](https://bandit.readthedocs.io/en/latest/plugins/b505_weak_cryptographic_key.html))

rule from `bandit`.

For this rule, `bandit` [reports the issue

with](https://github.com/PyCQA/bandit/blob/1.7.5/bandit/plugins/weak_cryptographic_key.py#L47-L56):

- medium severity for DSA/RSA < 2048 bits and EC < 224 bits

- high severity for DSA/RSA < 1024 bits and EC < 160 bits

Since Ruff does not handle severities for `bandit`-related rules, we

could either report the issue if we have lower values than medium

severity, or lower values than high one. Two reasons led me to choose

the first option:

- a medium severity issue is still a security issue we would want to

report to the user, who can then decide to either handle the issue or

ignore it

- `bandit` [maps the EC key algorithms to their respective key lengths

in

bits](https://github.com/PyCQA/bandit/blob/1.7.5/bandit/plugins/weak_cryptographic_key.py#L112-L133),

but there is no value below 160 bits, so technically `bandit` would

never report medium severity issues for EC keys, only high ones

Another consideration is that as shared just above, for EC key

algorithms, `bandit` has a mapping to map the algorithms to their

respective key lengths. In the implementation in Ruff, I rather went

with an explicit list of EC algorithms known to be vulnerable (which

would thus be reported) rather than implementing a mapping to retrieve

the associated key length and comparing it with the minimum value.
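For reference, the medium-severity thresholds quoted above amount to the following check. This is a sketch of the thresholds only; `is_weak_key` and `MIN_KEY_BITS` are hypothetical names, and, as noted, the Ruff implementation uses an explicit list of EC algorithms instead of comparing EC key lengths:

```python
# Thresholds taken from the medium-severity bounds quoted above.
MIN_KEY_BITS = {"dsa": 2048, "rsa": 2048, "ec": 224}


def is_weak_key(algorithm: str, bits: int) -> bool:
    """Return True if `bits` is below the minimum for `algorithm`."""
    return bits < MIN_KEY_BITS[algorithm.lower()]
```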

## Test Plan

Snapshot tests from

https://github.com/PyCQA/bandit/blob/1.7.5/examples/weak_cryptographic_key_sizes.py.

## Summary

Extend the `task-tags` checking logic to ignore TODO tags (with or

without parentheses). For example,

```python

# TODO(tjkuson): Rewrite in Rust

```

is no longer flagged as commented-out code.

Closes #7031.

I also updated the documentation to inform users that the rule is prone

to false positives like this!

EDIT: Accidentally linked to the wrong issue when first opening this PR,

now corrected.

## Test Plan

`cargo test`

## Summary

When lexing a number like `0x995DC9BBDF1939FA` that exceeds our small

number representation, we were only storing the portion after the base

(in this case, `995DC9BBDF1939FA`). When using that representation in

code generation, this could lead to invalid syntax, since

`995DC9BBDF1939FA` on its own is not a valid integer.

This PR modifies the code to store the full span, including the radix

prefix.
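The problem is easy to reproduce in Python itself. In this small sketch, `parse_int_literal` is a hypothetical helper that parses literal text the way Python source would (base `0` infers the radix from the prefix):

```python
def parse_int_literal(text: str) -> int:
    """Parse a Python integer literal; base 0 infers the radix from the prefix."""
    return int(text, 0)


full = "0x995DC9BBDF1939FA"
digits_only = full[2:]  # what was stored before: the prefix is lost
```

`parse_int_literal(full)` succeeds, while `parse_int_literal(digits_only)` raises `ValueError`, which mirrors the invalid syntax produced during code generation.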

See:

https://github.com/astral-sh/ruff/issues/7455#issuecomment-1739802958.

## Test Plan

`cargo test`

Closes #7434

Replaces the `PREVIEW` selector (removed in #7389) with a configuration

option `explicit-preview-rules` which requires selectors to use exact

rule codes for all preview rules. This allows users to enable preview

without opting into all preview rules at once.

## Test plan

Unit tests

## Summary

At present, `quote-style` is used universally. However, [PEP

8](https://peps.python.org/pep-0008/) and [PEP

257](https://peps.python.org/pep-0257/) suggest that while either single

or double quotes are acceptable in general (as long as they're

consistent), docstrings and triple-quoted strings should always use

double quotes. In our research, the vast majority of Ruff users that

enable the `flake8-quotes` rules only enable them for inline strings

(i.e., non-triple-quoted strings).

Additionally, many Black forks (like Blue and Pyink) use double quotes

for docstrings and triple-quoted strings.

Our decision for now is to always prefer double quotes for triple-quoted

strings (which should include docstrings). Based on feedback, we may

consider adding additional options (e.g., a `"preserve"` mode, to avoid

changing quotes; or a `"multiline-quote-style"` to override this).

Closes https://github.com/astral-sh/ruff/issues/7615.

## Test Plan

`cargo test`

## Summary

Extends the pragma comment detection in the formatter to support

case-insensitive `noqa` (as supported by Ruff), plus a variety of other

pragmas (`isort:`, `nosec`, etc.).

Also extracts the detection out into the trivia crate so that we can

reuse it in the linter (see:

https://github.com/astral-sh/ruff/issues/7471).

## Test Plan

`cargo test`

## Summary

No-op refactor, but we can evaluate early if the first part of

`preserve_parentheses || has_comments` is `true`, and thus avoid looking

up the node comments.

## Test Plan

`cargo test`

## Summary

The formatting for tuple patterns is now intended to match that of `for`

loops:

- Always parenthesize single-element tuples.

- Don't break on the trailing comma in single-element tuples.

- For other tuples, preserve the parentheses, and insert if-breaks.

Closes https://github.com/astral-sh/ruff/issues/7681.

## Test Plan

`cargo test`

## Summary

`PGH002`, which checks for use of deprecated `logging.warn` calls, did

not check for calls made on the attribute `warn` yet. Since

https://github.com/astral-sh/ruff/pull/7521 we check both cases for

similar rules wherever possible. To be consistent this PR expands PGH002

to do the same.

## Test Plan

Expanded existing fixtures with `logger.warn()` calls

## Issue links

Fixes final inconsistency mentioned in

https://github.com/astral-sh/ruff/issues/7502

## Summary

As we bind the `ast::ExprCall` in the big `match expr` in

`expression.rs`

```rust

Expr::Call(

call @ ast::ExprCall {

...

```

There is no need for additional `let/if let` checks on `ExprCall` in

downstream rules. Found a few older rules which still did this while

working on something else. This PR removes the redundant check from

these rules.

## Test Plan

`cargo test`

## Summary

It's common practice to name derive macros the same as the trait that they implement (`Debug`, `Display`, `Eq`, `Serialize`, ...).

This PR renames the `ConfigurationOptions` derive macro to `OptionsMetadata` to match the trait name.

## Test Plan

`cargo build`

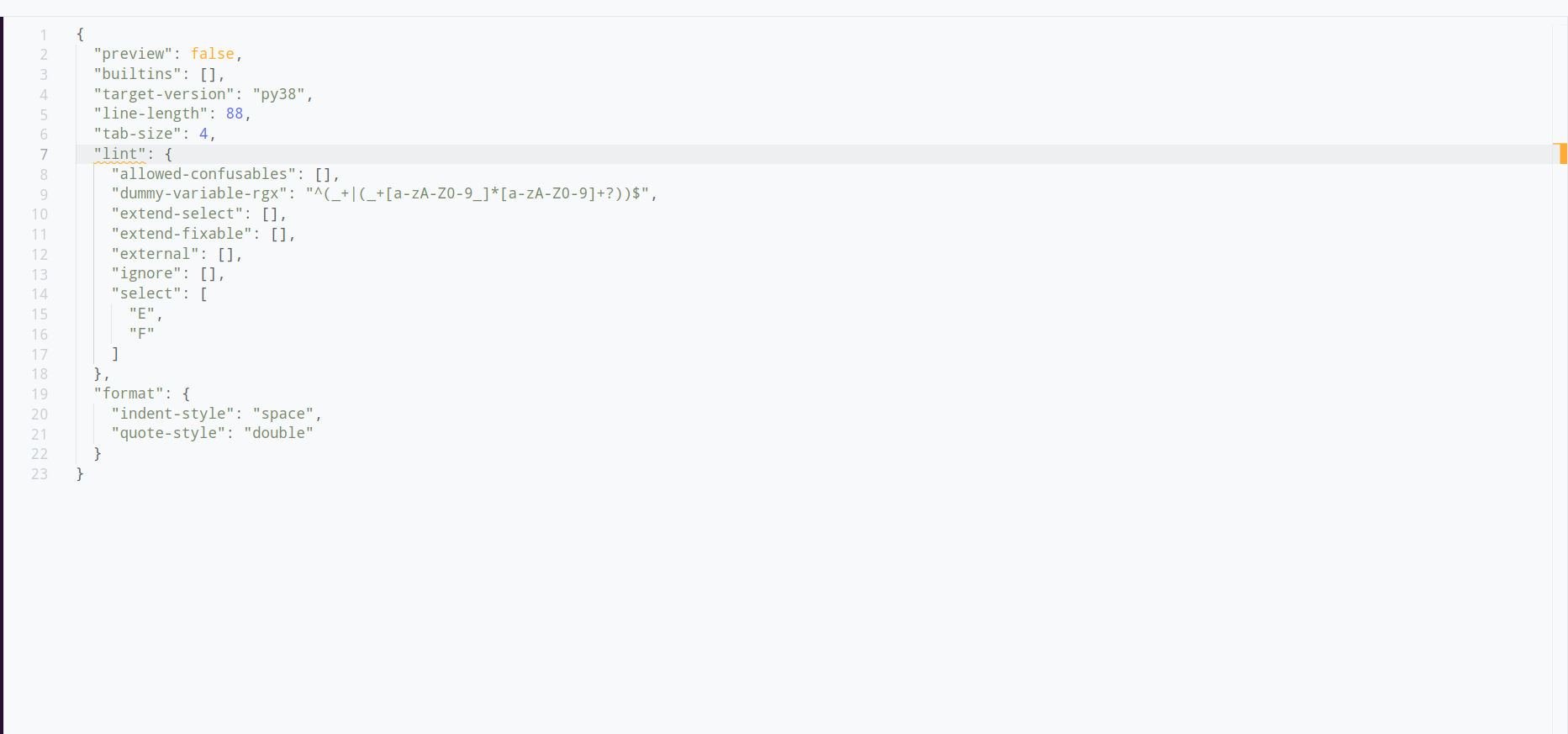

## Summary

This PR adds a new `lint` section to the configuration that groups all linter-specific settings. The existing top-level configurations continue to work without any warning because the `lint.*` settings are experimental.

The configuration merges the top level and `lint.*` settings where the settings in `lint` have higher precedence (override the top-level settings). The reasoning behind this is that the settings in `lint.` are more specific and more specific settings should override less specific settings.
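The precedence rule can be sketched as a plain dictionary merge. `resolve_lint_settings` is a hypothetical function, and for simplicity it treats every top-level key as a linter setting, which is not true of the real configuration:

```python
def resolve_lint_settings(config: dict) -> dict:
    """Merge top-level settings with `lint.*`, letting `lint.*` win."""
    top_level = {key: value for key, value in config.items() if key != "lint"}
    # Later entries override earlier ones, so `lint.*` takes precedence.
    return {**top_level, **config.get("lint", {})}
```

For example, with `select = ["E"]` at the top level and `select = ["F"]` under `lint`, the resolved value is `["F"]`, while top-level settings that `lint` does not mention are kept.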

I decided against showing the new `lint.*` options on our website because it would make the page extremely long (it's technically easy to do, just attribute `lint` with `#[option_group]`). We may want to explore adding an `alias` field to the `option` attribute and show the alias on the website along with its regular name.

## Test Plan

* I added new integration tests

* I verified that the generated `options.md` is identical

* Verified the default settings in the playground

## Summary

This PR adds support for named expressions when analyzing `__all__`

assignments, as per https://github.com/astral-sh/ruff/issues/7672. It

also loosens the enforcement around assignments like: `__all__ =

list(some_other_expression)`. We shouldn't flag these as invalid, even

though we can't analyze the members, since we _know_ they evaluate to a

`list`.

Closes https://github.com/astral-sh/ruff/issues/7672.

## Test Plan

`cargo test`

## Summary

Fixes #7616 by ensuring that

[B006](https://docs.astral.sh/ruff/rules/mutable-argument-default/#mutable-argument-default-b006)

fixes are inserted after module imports.

I have created a new test file, `B006_5.py`. This is mainly because I

have been working on this on and off, and the merge conflicts were

easier to handle in a separate file. If needed, I can move it into

another file.

## Test Plan

`cargo test`

## Summary

Expands several rules to also check for `Expr::Name` values. Previously, they

would not consider:

```python

from logging import error

error("foo")

```

as a potential violation, even though they did consider:

```python

import logging

logging.error("foo")

```

as one, leading to inconsistent behaviour.
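To illustrate handling both call forms, here is a minimal sketch using Python's `ast` module. `logging_error_calls` is a hypothetical function that only tracks `logging.error`; the affected rules resolve names through Ruff's semantic model instead:

```python
import ast


def logging_error_calls(source: str) -> list:
    """Line numbers of `logging.error` calls, via attribute access or imported name."""
    tree = ast.parse(source)
    imported = set()  # local names bound to `logging.error`
    for node in ast.walk(tree):
        if isinstance(node, ast.ImportFrom) and node.module == "logging":
            imported.update(a.asname or a.name for a in node.names if a.name == "error")
    hits = []
    for node in ast.walk(tree):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        if isinstance(func, ast.Name) and func.id in imported:
            hits.append(node.lineno)  # bare name: `error("foo")`
        elif (
            isinstance(func, ast.Attribute)
            and func.attr == "error"
            and isinstance(func.value, ast.Name)
            and func.value.id == "logging"
        ):
            hits.append(node.lineno)  # attribute: `logging.error("foo")`
    return sorted(hits)
```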

The rules impacted are:

- `BLE001`

- `TRY400`

- `TRY401`

- `PLE1205`

- `PLE1206`

- `LOG007`

- `G001`-`G004`

- `G101`

- `G201`

- `G202`

## Test Plan

Fixtures for all impacted rules expanded.

## Issue Link

Refers: https://github.com/astral-sh/ruff/issues/7502


## Summary


The note about rules being in preview was not being displayed for legacy

nursery rules.

Adds a link to the new preview documentation as well.

## Test Plan


Built locally and checked a nursery rule e.g.

http://127.0.0.1:8000/ruff/rules/no-indented-block-comment/

## Summary

Pass around a `Settings` struct instead of individual members to

simplify function signatures and to make it easier to add new settings.

This PR was suggested in [this

comment](https://github.com/astral-sh/ruff/issues/1567#issuecomment-1734182803).

## Note on the choices

I chose which functions to modify based on which seem most likely to use

new settings, but suggestions on my choices are welcome!

## Summary

This PR fixes the bug where the cell indices displayed in the `--diff` output

and the ones in the normal output were different. This was due to the fact that

the `--diff` output was using the `enumerate` function to iterate over

the cells which starts at 0.
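In Python terms, the mismatch is the difference between `enumerate(cells)` and `enumerate(cells, start=1)`. A tiny sketch, where `cell_labels` is a hypothetical helper:

```python
def cell_labels(cells):
    """Label cells with 1-based indices, matching the regular diagnostics."""
    return [f"cell {index}" for index, _ in enumerate(cells, start=1)]
```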

## Test Plan

Ran the following command with and without the `--diff` flag:

```console

cargo run --bin ruff -- check --no-cache --isolated ~/playground/ruff/notebooks/test.ipynb

```

### `main`

<details><summary>Diagnostics output:</summary>

<p>

```console

$ cargo run --bin ruff -- check --no-cache --isolated ~/playground/ruff/notebooks/test.ipynb

/Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 3:2:8: F401 [*] `math` imported but unused

/Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 5:1:8: F811 Redefinition of unused `random` from line 1

/Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 5:2:8: F401 [*] `pprint` imported but unused

/Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 6:2:4: F632 [*] Use `==` to compare constant literals

/Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 6:3:38: F632 [*] Use `==` to compare constant literals

Found 5 errors.

[*] 4 potentially fixable with the --fix option.

```

</p>

</details>

<details><summary>Diff output:</summary>

<p>

```console

$ cargo run --bin ruff -- check --no-cache --isolated ~/playground/ruff/notebooks/test.ipynb --diff

--- /Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 2

+++ /Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 2

@@ -1,2 +1 @@

-import random

-import math

+import random

--- /Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 4

+++ /Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 4

@@ -1,4 +1,3 @@

import random

-import pprint

random.randint(10, 20)

--- /Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 5

+++ /Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 5

@@ -1,3 +1,3 @@

foo = 1

-if foo is 2:

- raise ValueError(f"Invalid foo: {foo is 1}")

+if foo == 2:

+ raise ValueError(f"Invalid foo: {foo == 1}")

Would fix 4 errors.

```

</p>

</details>

### `dhruv/consistent-cell-indices`

<details><summary>Diagnostic output:</summary>

<p>

```console

$ cargo run --bin ruff -- check --no-cache --isolated ~/playground/ruff/notebooks/test.ipynb

/Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 3:2:8: F401 [*] `math` imported but unused

/Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 5:1:8: F811 Redefinition of unused `random` from line 1

/Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 5:2:8: F401 [*] `pprint` imported but unused

/Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 6:2:4: F632 [*] Use `==` to compare constant literals

/Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 6:3:38: F632 [*] Use `==` to compare constant literals

Found 5 errors.

[*] 4 potentially fixable with the --fix option.

```

</p>

</details>

<details><summary>Diff output:</summary>

<p>

```console

$ cargo run --bin ruff -- check --no-cache --isolated ~/playground/ruff/notebooks/test.ipynb --diff

--- /Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 3

+++ /Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 3

@@ -1,2 +1 @@

-import random

-import math

+import random

--- /Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 5

+++ /Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 5

@@ -1,4 +1,3 @@

import random

-import pprint

random.randint(10, 20)

--- /Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 6

+++ /Users/dhruv/playground/ruff/notebooks/test.ipynb:cell 6

@@ -1,3 +1,3 @@

foo = 1

-if foo is 2:

- raise ValueError(f"Invalid foo: {foo is 1}")

+if foo == 2:

+ raise ValueError(f"Invalid foo: {foo == 1}")

Would fix 4 errors.

```

</p>

</details>

fixes: #6673

I got confused and refactored a bit, now the naming should be more

consistent. This is the basis for the range formatting work.

Changes:

* `format_module` -> `format_module_source` (format a string)

* `format_node` -> `format_module_ast` (format a program parsed into an

AST)

* Added `parse_ok_tokens` that takes `Token` instead of `Result<Token>`

* Call the source code `source` consistently

* Added a `tokens_and_ranges` helper

* `python_ast` -> `module` (because that's the type)

**Summary** Check that `closefd` and `opener` aren't being used with

the builtin `open()` before suggesting `Path.open()`, because pathlib doesn't

support these arguments.

Closes #7620

**Test Plan** New cases in the fixture.

## Summary

This is a follow-up to #7469 that attempts to achieve similar gains, but

without introducing malachite. Instead, this PR removes the `BigInt`

type altogether, instead opting for a simple enum that allows us to

store small integers directly and only allocate for values greater than

`i64`:

```rust

/// A Python integer literal. Represents both small (fits in an `i64`) and large integers.
#[derive(Clone, PartialEq, Eq, Hash)]
pub struct Int(Number);

#[derive(Debug, Clone, PartialEq, Eq, Hash)]
pub enum Number {
    /// A "small" number that can be represented as an `i64`.
    Small(i64),
    /// A "large" number that cannot be represented as an `i64`.
    Big(Box<str>),
}

impl std::fmt::Display for Number {
    fn fmt(&self, f: &mut std::fmt::Formatter<'_>) -> std::fmt::Result {
        match self {
            Number::Small(value) => write!(f, "{value}"),
            Number::Big(value) => write!(f, "{value}"),
        }
    }
}

```

We typically don't care about numbers greater than `isize` -- our only

uses are comparisons against small constants (like `1`, `2`, `3`, etc.),

so there's no real loss of information, except in one or two rules where

we're now a little more conservative (with the worst-case being that we

don't flag, e.g., an `itertools.pairwise` that uses an extremely large

value for the slice start constant). For simplicity, a few diagnostics

now show a dedicated message when they see integers that are out of the

supported range (e.g., `outdated-version-block`).

An additional benefit here is that we get to remove a few dependencies,

especially `num-bigint`.

## Test Plan

`cargo test`

## Summary

This is whitespace as per `is_python_whitespace`, and right now it tends

to lead to panics in the formatter. Seems reasonable to treat it as

whitespace in the `SimpleTokenizer` too.

Closes https://github.com/astral-sh/ruff/issues/7624.

## Summary

Given:

```python

if True:
    if True:
        pass
    else:
        pass
        # a
        # b
        # c

else:
    pass

```

We want to preserve the newline after the `# c` (before the `else`).

However, the `last_node` ends at the `pass`, and the comments are

trailing comments on the `pass`, not trailing comments on the

`last_node` (the `if`). As such, when counting the trailing newlines on

the outer `if`, we abort as soon as we see the comment (`# a`).

This PR changes the logic to skip _all_ comments (even those with

newlines between them). This is safe as we know that there are no

"leading" comments on the `else`, so there's no risk of skipping those

accidentally.

Closes https://github.com/astral-sh/ruff/issues/7602.

## Test Plan

No change in compatibility.

Before:

| project | similarity index | total files | changed files |
|--------------|------------------:|------------------:|------------------:|
| cpython | 0.76083 | 1789 | 1631 |
| django | 0.99983 | 2760 | 36 |
| transformers | 0.99963 | 2587 | 319 |
| twine | 1.00000 | 33 | 0 |
| typeshed | 0.99979 | 3496 | 22 |
| warehouse | 0.99967 | 648 | 15 |
| zulip | 0.99972 | 1437 | 21 |

After:

| project | similarity index | total files | changed files |
|--------------|------------------:|------------------:|------------------:|
| cpython | 0.76083 | 1789 | 1631 |
| django | 0.99983 | 2760 | 36 |
| transformers | 0.99963 | 2587 | 319 |
| twine | 1.00000 | 33 | 0 |
| typeshed | 0.99983 | 3496 | 18 |
| warehouse | 0.99967 | 648 | 15 |
| zulip | 0.99972 | 1437 | 21 |

## Summary

This PR fixes the autofix behavior for `PT022` to create an additional

edit for the return type if it's present. The edit will update the

return type from `Generator[T, ...]` to `T`. As per the [official

documentation](https://docs.python.org/3/library/typing.html?highlight=typing%20generator#typing.Generator),

the first position is the yield type, so we can ignore other positions.

```python

typing.Generator[YieldType, SendType, ReturnType]

```
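
As a rough illustration of the rewrite, the sketch below uses the standard `typing.get_args` helper to pull the yield type out of a `Generator` annotation. The `yield_type` helper is hypothetical and works on runtime type objects purely for demonstration; it is not how the rule itself is implemented.

```python
from typing import Generator, get_args


def yield_type(annotation):
    """Return the first type argument (the yield type) of an annotation.

    Hypothetical helper: annotations without type arguments are returned as-is.
    """
    args = get_args(annotation)
    return args[0] if args else annotation


# Generator[YieldType, SendType, ReturnType]: only the first position matters here.
print(yield_type(Generator[int, None, None]))
```

In other words, a fixture annotated as `-> Generator[int, None, None]` would end up annotated as `-> int` once `yield` is replaced with `return`.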

## Test Plan

Add new test cases, `cargo test` and review the snapshots.

fixes: #7610

## Summary

Implement

[`simplify-print`](https://github.com/dosisod/refurb/blob/master/refurb/checks/builtin/print.py)

as `print-empty-string` (`FURB105`).

This extends the original rule by also checking for multiple empty

string positional arguments combined with an empty string separator.
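
The following sketch shows why these calls are equivalent to a bare `print()`. The `captured` helper is hypothetical and exists only to capture what `print` writes to stdout.

```python
import contextlib
import io


def captured(*args, **kwargs):
    """Call print() with the given arguments and return what it wrote."""
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        print(*args, **kwargs)
    return buffer.getvalue()


# Each of these writes a single newline, so the last two could be print().
assert captured() == "\n"
assert captured("") == "\n"
assert captured("", "", sep="") == "\n"

# With the default separator, the output differs, so the separator matters.
assert captured("", "") == " \n"
```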

Related to #1348.

## Test Plan

`cargo test`

Similar to tuples, a generator _can_ be parenthesized or

unparenthesized. Only search for bracketed comments if it contains its

own parentheses.

Closes https://github.com/astral-sh/ruff/issues/7623.

## Summary

Given:

```python

if True:

if True:

if True:

pass

#a

#b

#c

else:

pass

```

When determining the placement of the various comments, we compute the

indentation depth of each comment, and then compare it to the depth of

the previous statement. It turns out this can lead to reordering

comments, e.g., above, `#b` is assigned as a trailing comment of `pass`,

and so gets reordered above `#a`.

This PR modifies the logic such that when we compute the indentation

depth of `#b`, we limit it to at most the indentation depth of `#a`. In

other words, when analyzing comments at the end of branches, we don't

let successive comments go any _deeper_ than their preceding comments.
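
The clamping idea can be sketched as follows. This is an illustration only: `clamp_comment_depths` is a hypothetical name, the depths are made-up column counts, and it assumes each comment is capped by the (already clamped) depth of the comment before it.

```python
def clamp_comment_depths(depths):
    """Cap each comment's depth at the depth of the preceding comment."""
    clamped = []
    limit = None  # No limit applies to the first comment.
    for depth in depths:
        if limit is not None:
            depth = min(depth, limit)
        clamped.append(depth)
        limit = depth
    return clamped


# A comment indented deeper than its predecessor is pulled back to that depth.
print(clamp_comment_depths([8, 12, 4]))
```

Because depths can only stay the same or decrease, a later comment can never be attached to a more deeply nested statement than an earlier one, which is what prevents the reordering.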

Closes https://github.com/astral-sh/ruff/issues/7602.

## Test Plan

`cargo test`

No change in similarity.

Before:

| project | similarity index | total files | changed files |

|--------------|------------------:|------------------:|------------------:|

| cpython | 0.76083 | 1789 | 1631 |

| django | 0.99983 | 2760 | 36 |

| transformers | 0.99963 | 2587 | 319 |

| twine | 1.00000 | 33 | 0 |

| typeshed | 0.99979 | 3496 | 22 |

| warehouse | 0.99967 | 648 | 15 |

| zulip | 0.99972 | 1437 | 21 |

After:

| project | similarity index | total files | changed files |

|--------------|------------------:|------------------:|------------------:|

| cpython | 0.76083 | 1789 | 1631 |

| django | 0.99983 | 2760 | 36 |

| transformers | 0.99963 | 2587 | 319 |

| twine | 1.00000 | 33 | 0 |

| typeshed | 0.99979 | 3496 | 22 |

| warehouse | 0.99967 | 648 | 15 |

| zulip | 0.99972 | 1437 | 21 |

## Summary

Given:

```python

# -*- coding: utf-8 -*-

import random

# Defaults for arguments are defined here

# args.threshold = None;

logger = logging.getLogger("FastProject")

```

We want to count the number of newlines after `import random`, to ensure

that there's _at least one_, but up to two.

Previously, we used the end range of the statement (then skipped

trivia); instead, we need to use the end of the _last comment_. This is

similar to #7556.

Closes https://github.com/astral-sh/ruff/issues/7604.

## Summary

B005 only flags `.strip()` calls for which the argument includes

duplicate characters. This is consistent with bugbear, but isn't

explained in the documentation.
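
For context, the snippet below shows why duplicate characters in a `.strip()` argument are a useful signal: the argument is treated as a set of characters, not as a prefix or suffix. The URL is an arbitrary example.

```python
url = "https://example.com/path"

# The argument is a set of characters, so this also strips the trailing "th".
stripped = url.strip("https://")
assert stripped == "example.com/pa"

# Removing the duplicate characters from the argument changes nothing.
assert url.strip("htps:/") == stripped
```

An argument with repeated characters, such as `"https://"`, suggests the author expected a substring to be removed.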

## Summary

Currently, this happens:

```sh

$ echo "print()" | ruff format -

# Notice that nothing went to stdout

```

This does not match the behavior of `ruff check --fix -`, and it deletes

my code every time I format it (more or less 5 times per minute 😄).

I just checked that my example works as the change was very

straightforward.

It is apparently possible to add files to the git index, even if they

are part of the gitignore (see e.g.

https://stackoverflow.com/questions/45400361/why-is-gitignore-not-ignoring-my-files,

even though it's strange that the gitignore entries existed before the

files were added, I wouldn't know how to get them added in that case). I

ran

```

git rm -r --cached .

```

then changed the gitignore to not actually ignore those files, with the

exception of

`crates/ruff_cli/resources/test/fixtures/cache_mutable/source.py`, which

is actually a generated file.

## Summary

This is only used for the `level` field in relative imports (e.g., `from

..foo import bar`). It seems unnecessary to use a wrapper here, so this

PR changes to a `u32` directly.
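
To illustrate what the `level` field represents, the sketch below uses CPython's own `ast` module, not Ruff's AST. The `import_level` helper is hypothetical.

```python
import ast


def import_level(source):
    """Return the relative-import level of a single `from ... import` statement."""
    node = ast.parse(source).body[0]
    if not isinstance(node, ast.ImportFrom):
        raise ValueError("expected a `from ... import ...` statement")
    return node.level


# The level counts the leading dots; absolute imports have level 0.
assert import_level("from foo import bar") == 0
assert import_level("from . import bar") == 1
assert import_level("from ..foo import bar") == 2
```

Since the level is just a small non-negative count of dots, a plain unsigned integer is a natural fit for it.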

## Summary

When we format the trailing comments on a clause body, we check if there

are any newlines after the last statement; if not, we insert one.

This logic didn't take into account that the last statement could itself

have trailing comments, as in:

```python

if True:

pass

# comment

else:

pass

```

We were thus inserting a newline after the comment, like:

```python

if True:

pass

# comment

else:

pass

```

In the context of function definitions, this led to an instability,

since we insert a newline _after_ a function, which would in turn lead

to the bug above appearing in the second formatting pass.

Closes https://github.com/astral-sh/ruff/issues/7465.

## Test Plan

`cargo test`

Small improvement in `transformers`, but no regressions.

Before:

| project | similarity index | total files | changed files |

|--------------|------------------:|------------------:|------------------:|

| cpython | 0.76083 | 1789 | 1631 |

| django | 0.99983 | 2760 | 36 |

| transformers | 0.99956 | 2587 | 404 |

| twine | 1.00000 | 33 | 0 |

| typeshed | 0.99983 | 3496 | 18 |

| warehouse | 0.99967 | 648 | 15 |

| zulip | 0.99972 | 1437 | 21 |

After:

| project | similarity index | total files | changed files |

|--------------|------------------:|------------------:|------------------:|

| cpython | 0.76083 | 1789 | 1631 |

| django | 0.99983 | 2760 | 36 |

| **transformers** | **0.99957** | **2587** | **402** |

| twine | 1.00000 | 33 | 0 |

| typeshed | 0.99983 | 3496 | 18 |

| warehouse | 0.99967 | 648 | 15 |

| zulip | 0.99972 | 1437 | 21 |

## Summary

If a function has no parameters (and no comments within the parameters'

`()`), we're supposed to wrap the return annotation _whenever_ it

breaks. However, our `empty_parameters` test didn't properly account for

the case in which the parameters include a newline (but no other

content), like: